Here’s the short version: AI tools like chatbots and search assistants don’t always rely solely on what they learned during training. Sometimes they reach out and pull in the latest, relevant information from external sources before generating a response. That process - retrieving information and using it to change an answer - is what RAG describes. And it can change how you think about your content, your site structure and your online strategy.

For website owners and managers, this matters because the way people find information online is changing. AI tools are summarizing, synthesizing and responding - and the sites that get referenced in those replies aren’t there by accident. They’re structured, credible and easy for AI systems to understand and retrieve.

This glossary page walks you through what Retrieval-Augmented Generation actually means, how it works in plain terms and - most importantly - what it means for your website and the way you approach AI Optimization (AIO) and Answer Engine Optimization (AEO). No engineering background required.

Quick Answer

Retrieval-Augmented Generation (RAG) is a technique that enhances large language models by combining them with external knowledge retrieval. Instead of relying solely on training data, RAG fetches relevant documents or data from an external source at inference time, then uses that retrieved context to generate more accurate, up-to-date responses. This reduces hallucinations, improves factual accuracy, and allows models to access current information without retraining. It typically involves an embedding-based retrieval system (like a vector database) paired with a generative model.

What Retrieval-Augmented Generation Actually Means

The name sounds technical. But it breaks apart pretty cleanly, and each word in “Retrieval-Augmented Generation” is doing work. Once you see what each piece means, the whole idea clicks into place.

Start with generation - what a large language model does. It generates text in response to a question or prompt, drawing on everything it learned during training to produce an answer. But training data has a cutoff date and no direct connection to private or up-to-date information.

That’s where retrieval comes in. Before the model writes anything, a retrieval step goes and fetches relevant information from an external source - a database, a document library, a knowledge base, or similar. It’s like the difference between a researcher who looks things up before writing and one who writes purely from memory. The memory-only strategy works until it doesn’t, and that’s when you get confident-sounding answers that are just wrong.

Augmented is the connective tissue between the two. The retrieved information gets added to the model’s input so the generation step has something concrete to work with. The model isn’t guessing; it’s writing based on material that was just pulled in specifically for that question.
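For readers who like to see the mechanics, here is a minimal sketch of the "augmented" step in Python. It only shows how retrieved passages might be added to the model's input; the function name `build_augmented_prompt` and the prompt wording are illustrative assumptions, not the format any particular AI tool uses.

```python
def build_augmented_prompt(question: str, passages: list[str]) -> str:
    """Combine retrieved passages and the user's question into one model input."""
    # Number each passage so the answer can point back to a specific source.
    context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer the question using only the sources below.\n\n"
        f"Sources:\n{context}\n\n"
        f"Question: {question}"
    )


# Hypothetical example: one passage that a retrieval step has already found.
prompt = build_augmented_prompt(
    "What is RAG?",
    ["RAG retrieves external content before a model generates an answer."],
)
print(prompt)
```

The language model would receive `prompt` instead of the bare question, which is what gives it something concrete to write from.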

Put it all together and you get a system that retrieves relevant content, uses that content to augment what the model knows, and then generates a response grounded in that information. The model’s language abilities stay - it still writes fluently and understands context - but now it has a source to draw from instead of relying solely on what it learned during training.

This distinction matters more than it looks. A standard language model is a closed system in the sense that its knowledge is fixed at training time. A RAG system is open to external information at the moment a question gets asked, and that changes how reliable and up-to-date the output can be. Search engines have been shifting in this direction too, which is part of why zero-click search has become such a growing concern for content publishers.

The intelligence is already there; RAG connects it to a live, searchable source of facts instead of leaving it to work from memory alone.

How the RAG Process Works From Query to Answer

The whole pipeline starts the second a user types a question. That question gets passed to a retrieval system which searches an external knowledge source - like a database, document library, or company knowledge base - to find the most relevant information.

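The retrieval step works like a search engine running quietly in the background. It compares the question against the stored content and returns the best-matching passages, often called chunks. Those chunks then get handed off to the language model as extra context.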

The language model doesn’t generate an answer from memory alone - it reads the retrieved content alongside the original question and uses it to produce a response. That’s what separates RAG from a standard AI chat experience - the model is working with sourced material instead of relying on whatever it absorbed during training.

The quality of the final answer depends on two things working well together. The retrieval step needs to surface relevant content and the language model needs to interpret that content accurately. If either part underperforms, the answer will reflect that.
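The retrieval half of that pairing can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: production systems typically compare learned embeddings stored in a vector database, while this sketch uses plain word counts as a stand-in, and the function names (`word_counts`, `cosine`, `retrieve`) and sample passages are hypothetical.

```python
import math
import re
from collections import Counter


def word_counts(text: str) -> Counter:
    """Lowercase the text and count its words (a crude stand-in for an embedding)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors; 0.0 if either is empty."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, passages: list[str], top_k: int = 2) -> list[str]:
    """Return the top_k passages most similar to the query."""
    q = word_counts(query)
    ranked = sorted(passages, key=lambda p: cosine(q, word_counts(p)), reverse=True)
    return ranked[:top_k]


passages = [
    "Schema markup tells search engines who wrote a page and when it was updated.",
    "Our office is closed on public holidays.",
    "Update older pages so their facts and examples stay current.",
]
top = retrieve("When was this page updated?", passages, top_k=1)
print(top)
```

Notice that the third passage shares no exact words with the query even though it is on a related topic. Exact word matching misses near-synonyms like "update" and "updated", which is one reason real systems lean on embeddings instead.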

It’s helpful to see how this stacks up against the alternatives.

| Approach | How it works | Main limitation |
|---|---|---|
| Retrieval only | Finds and returns relevant documents or passages | No natural language generation - the user gets raw text, not a formed answer |
| Generation only | A language model produces an answer from its training data | Limited to what it learned during training, with no access to new or external information |
| RAG combined | Retrieves relevant content first, then generates an answer using that content | Depends on retrieval accuracy and the quality of the knowledge source |

Each strategy on its own has a gap. Retrieval without generation leaves users to do the interpretation work themselves. Generation without retrieval produces fluent answers that can be confidently wrong - a pattern explored in detail when looking at how sourced content affects credibility. RAG addresses this by connecting the two steps into one process.

The knowledge source itself is worth mentioning too - it can be updated independently of the language model, which means the system can incorporate new information without retraining the model from scratch.
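A small Python sketch can make that independence concrete. The `KnowledgeBase` class below is a hypothetical toy, not a real library: it stores passages in memory and matches them by shared words. The point it illustrates is that adding a passage changes what can be retrieved without touching the model at all.

```python
import re
from typing import Optional


def words(text: str) -> set[str]:
    """Return the set of lowercase words in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


class KnowledgeBase:
    """A toy in-memory knowledge source that can change while the model stays fixed."""

    def __init__(self) -> None:
        self.passages: list[str] = []

    def add(self, passage: str) -> None:
        self.passages.append(passage)

    def best_match(self, query: str) -> Optional[str]:
        """Return the passage sharing the most words with the query, or None."""
        q = words(query)
        best, best_score = None, 0
        for passage in self.passages:
            score = len(q & words(passage))
            if score > best_score:
                best, best_score = passage, score
        return best


kb = KnowledgeBase()
kb.add("Our office is closed on public holidays.")
question = "What does the 2024 plan include?"

before = kb.best_match(question)  # nothing relevant is stored yet
kb.add("The 2024 plan includes priority support.")
after = kb.best_match(question)   # the new passage is now retrievable
print(before, "->", after)
```

No retraining happens between the two lookups. The only thing that changed is the content available to retrieve.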

Why Standard AI Models Struggle Without External Retrieval

Every AI language model has a training cutoff - a point in time where its knowledge stops. After that date, the model has no way to learn about new events, updated laws, or changes in your industry unless something feeds that information to it. The result is a model that can sound confident while giving you an answer that’s months or years out of date.

This is where hallucination can become a problem. AI hallucination happens when a model generates an answer that sounds plausible but is factually wrong. It’s not a bug in the traditional sense: the model is doing what it was trained to do, which is to predict the most likely next word. But when the right answer lives outside its training data, the model fills the gap with something it constructed instead of something it knows.

Some research into RAG systems reports that retrieval-augmented strategies can reduce hallucinations by 70 to 90 percent compared to standard generation alone, though results vary with the task and the quality of the knowledge source. That’s a significant difference for anyone relying on AI to produce accurate content or answer user questions.

For website managers and content teams, this creates a frustrating situation. Imagine asking an AI tool about the latest search ranking factors, recent algorithm updates, or new laws in your industry - and receiving a confident answer that reflects how things worked eighteen months ago. You might not even realize the information is wrong until it causes a problem.

Standard models also produce generic replies because they draw from large patterns across massive datasets. There’s no mechanism to pull from your documentation, your recent blog posts, or the latest data in your field. The model has the average answer - not the right one for your context.

This matters more now than it did a few years ago because users expect AI-powered tools to be accurate and current. Search experiences, AI assistants, and answer engines are being held to a higher standard. A model that gets things wrong - even confidently - erodes trust fast. Grading your content for answer engine optimization is one way to stay ahead of that standard.

The core limitation isn’t intelligence. These models are capable of refined reasoning. The limitation is that they’re working from a fixed snapshot of the world, with no way to verify or update what they know on their own. That’s the gap retrieval was built to fill.

The Connection Between RAG, AIO, and Answer Engine Optimization

When Google’s AI Mode pulls a sourced answer or Perplexity cites a webpage mid-response, that’s RAG in action.

This matters for website owners because it changes what “ranking” even means. The question is no longer only where your page appears in a list of results, but whether your content gets retrieved and used as a source.

Answer Engine Optimization (AEO) and AI Overviews (AIO) are terms that have come up as ways to describe this new visibility. They’re not the same thing. But they share a common thread - both depend on AI systems that use retrieval to ground their replies in content from the web.

A RAG system looks for three things when it retrieves content. Structure matters because RAG systems parse text to extract usable information. Content that’s organized into logical sections is much easier for a retrieval system to work with than dense walls of text. Authority matters because retrieval systems are usually designed to favor content from sources that seem to be credible and well-linked. And freshness matters because stale information gets deprioritized - and that’s especially true for topics that change over time. Improving your blog’s E-A-T score directly supports how retrieval systems judge your authority.

None of this is very new. But RAG raises the stakes for each factor. A page that used to rank well might now be passed over if a fresher or better-structured source is available to retrieve.

When an AI answer engine cites a source, that citation is a form of exposure - sometimes the only exposure a user sees before they leave. That means being retrieved is helpful even if the user never clicks through to your site.

AIO content in Google’s search results works in a similar way. Google’s system identifies pages that answer the query well and pulls from them to build the AI-generated summary at the top of the page. The pages that get used tend to have direct answers, topical depth, and content that’s kept up to date.

Your content either gets pulled into an AI’s answer or it doesn’t - and the things that determine that are worth understanding in detail.

What Types of Content RAG Systems Tend to Pull From

RAG-powered tools don’t pull from the web at random. They favor content that’s structured, trustworthy, and easy to parse - the content that answers a question directly and doesn’t make the system work hard to find the point.

A well-written FAQ page with questions and direct answers is far more helpful to a RAG system than a long-form opinion piece that buries its main point in paragraph seven. The same goes for definition pages, how-to guides, and data-backed content like statistics or research summaries. These formats are designed to deliver information fast, which is what retrieval systems need.

There’s actually a helpful parallel here with how humans experience bad search. A commonly cited stat puts employee dissatisfaction with poor internal search interfaces at 79%. People get frustrated when they can’t find what they need quickly. RAG systems have the same problem - if your content is hard to scan, it’s harder to retrieve and use in a generated response.

The table below shows common content types and how well they work with retrieval-based systems.

| Content Type | Retrieval-Friendly? | Why It Works (or Doesn’t) |
|---|---|---|
| FAQ pages | Very high | Structured question-and-answer format maps well to how queries are formed |
| Definition or glossary pages | Very high | Concise and factual, easy to extract and verify |
| How-to guides | High | Step-based structure makes information easy to isolate |
| Data and statistics pages | High | Specific figures give retrieval systems something concrete to cite |
| Long opinion articles | Low | Hard to extract clean facts from editorial content |
| Outdated pages | Very low | RAG systems prioritize fresh and accurate information |

Authority also factors in. Content from established sources - sites with a track record of accurate information - tends to get weighted more favorably than content from newer or less credible sources. Fresh content matters too. RAG tools are built to surface information that’s current and reliable instead of stale. Backdating articles won’t help here either, since retrieval systems are tuned to favor genuinely recent and verifiable content.

The pattern is consistent. Clean structure, direct answers, credible sourcing, and up-to-date information are what put content in a position to be retrieved and used.

How to Make Your Website More Visible to RAG-Powered Tools

You don’t need to rebuild your site from scratch. A few structural changes can make a difference in how RAG systems read and use your content.

Start with how your pages are organized. RAG pipelines pull content in chunks, and tools like LangChain and LlamaIndex - used by about 75% of RAG developers - work best when those chunks have a clear beginning and end. That means using descriptive headings, keeping each paragraph focused on one idea, and not burying your main point halfway down the page.
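To see why headings matter for chunking, here is a rough Python sketch that splits a page into one chunk per heading. It assumes the page text uses Markdown-style `#` headings, and `chunk_by_heading` is a hypothetical helper written for this example. It is not how LangChain or LlamaIndex split text internally.

```python
def chunk_by_heading(markdown_text: str) -> list[dict[str, str]]:
    """Split text into chunks, starting a new chunk at every Markdown heading line."""
    chunks: list[dict[str, str]] = []
    heading = ""
    body: list[str] = []

    def flush() -> None:
        # Save the current chunk unless it is completely empty.
        text = "\n".join(body).strip()
        if heading or text:
            chunks.append({"heading": heading, "text": text})

    for line in markdown_text.splitlines():
        if line.startswith("#"):
            flush()
            heading, body = line.lstrip("#").strip(), []
        else:
            body.append(line)
    flush()
    return chunks


page = """# What is RAG?
RAG retrieves content before a model answers.

## Why it matters
Answers can reflect current information.
"""
chunks = chunk_by_heading(page)
for chunk in chunks:
    print(chunk["heading"], "->", chunk["text"])
```

Each chunk here carries its own heading and a single idea, so it still makes sense when retrieved on its own. A page with vague headings or mixed-topic paragraphs would produce chunks that are harder to match to a question.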

Answer questions directly and early. If someone might ask “what is X” or “how does Y work,” put the answer in the first sentence or two of that section. RAG systems are built to retrieve the most relevant passage, so the more directly you answer a question, the more likely your content gets pulled into a response.

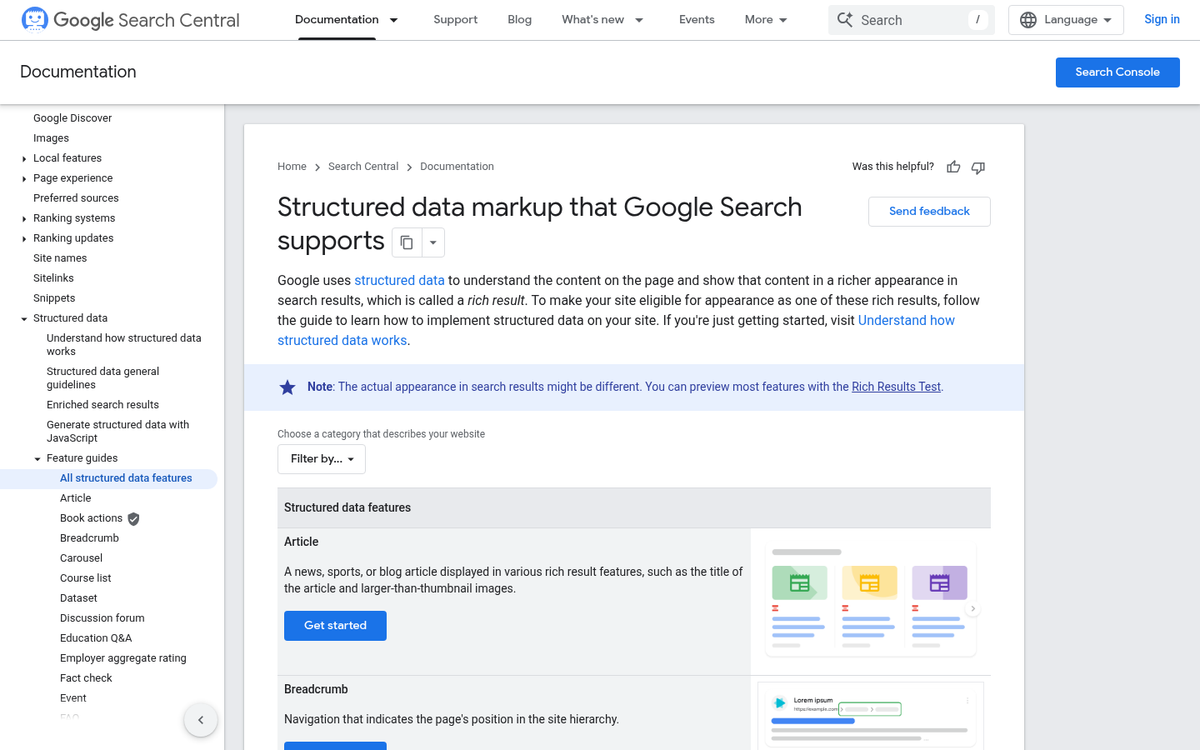

Schema markup is worth adding if you haven’t already - it gives search engines and AI tools a structured way to know what your page is about, who wrote it, and when it was updated. Pages with schema are easier for retrieval systems to categorize and trust.
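As an illustration, the Python sketch below builds a small piece of schema markup in the JSON-LD format, using the schema.org `Article` type with `headline`, `author`, `datePublished`, and `dateModified` properties. The headline, author name, and dates are placeholders, and your CMS or SEO plugin may generate this markup for you.

```python
import json

# Placeholder values: swap in your own page details.
article_schema = {
    "@context": "https://schema.org",
    "@type": "Article",
    "headline": "What Is Retrieval-Augmented Generation (RAG)?",
    "author": {"@type": "Person", "name": "Jane Doe"},
    "datePublished": "2024-01-15",
    "dateModified": "2024-06-01",
}

# Wrap the JSON-LD in the script tag that goes in the page's HTML.
snippet = (
    '<script type="application/ld+json">\n'
    + json.dumps(article_schema, indent=2)
    + "\n</script>"
)
print(snippet)
```

The printed snippet covers the three things mentioned above: what the page is about, who wrote it, and when it was last updated.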

Keep your content fresh. A page last updated three years ago sends a signal that the information may no longer be accurate. RAG systems favor sources that are current, so revisiting older pages and updating facts or examples helps you stay competitive.
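If you keep a list of your pages and their last-updated dates, a short script can flag the ones due for review. This Python sketch is only an example: the URLs and dates are made up, and the one-year threshold is an assumption you would adjust for how quickly your topic changes.

```python
from datetime import date


def stale_pages(pages: dict[str, date], today: date, max_age_days: int = 365) -> list[str]:
    """Return URLs whose last update is older than max_age_days, oldest first."""
    old = [
        (updated, url)
        for url, updated in pages.items()
        if (today - updated).days > max_age_days
    ]
    return [url for _, url in sorted(old)]


# Hypothetical page inventory: URL -> date of last update.
pages = {
    "/glossary/rag": date(2024, 5, 1),
    "/blog/seo-basics": date(2021, 3, 10),
    "/blog/schema-guide": date(2022, 11, 20),
}
to_review = stale_pages(pages, today=date(2024, 6, 1))
print(to_review)
```

Passing `today` in explicitly keeps the example repeatable. In practice you would likely use `date.today()` and pull the page list from your CMS or sitemap.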

Build topical authority over time. Instead of writing one general page about a large subject, create a set of pages that cover different angles of the same topic. Looking at how successful corporate blogs are structured can give you a useful model for organizing related content across your site.

| Action | Why It Helps RAG Systems |
|---|---|
| Use clear headings and focused paragraphs | Makes content easier to chunk and retrieve accurately |
| Answer questions directly and early | Increases the chance your passage gets selected as a source |
| Add schema markup | Helps retrieval tools understand and categorize your content |
| Update pages with older information | Signals that your content is reliable and current |
| Build out related pages on the same topic | Establishes your site as an authoritative source in that area |

FAQs

What does Retrieval-Augmented Generation (RAG) mean?

RAG is a process where AI tools retrieve relevant information from external sources before generating a response. Instead of relying solely on training data, the model pulls in current, sourced content to produce more accurate and up-to-date answers.

How does RAG reduce AI hallucinations?

RAG reduces hallucinations by grounding AI responses in retrieved, real-world content rather than memory alone. Research suggests retrieval-augmented strategies can cut hallucinations by 70 to 90 percent compared to standard generation.

What content types do RAG systems favor?

RAG systems favor structured, easy-to-parse content like FAQ pages, glossary definitions, how-to guides, and data-backed pages. Long opinion pieces or outdated pages are much less likely to be retrieved and used as sources.

How does RAG relate to Answer Engine Optimization?

AEO focuses on making your content retrievable by AI-powered answer engines, which use RAG to source their replies. Pages with clear structure, topical authority, and fresh information are more likely to be cited in AI-generated responses.

How can I make my website more visible to RAG tools?

Use descriptive headings, answer questions directly and early, add schema markup, keep content updated, and build topical authority across related pages. These steps make your content easier for retrieval systems to find, parse, and use.