A Large Language Model (LLM) is a type of artificial intelligence trained on massive amounts of text data - books, websites, articles, forums, and more - that allows it to understand, generate, and respond to human language in a surprisingly natural way. When you ask ChatGPT a question, get a summary from Google’s AI Overviews, or use an AI assistant on a website, you’re interacting with the output of an LLM at work.

LLMs are actively changing how people find information online - and whether your website gets surfaced as a trusted source when they do. Strategies like AIO (AI-Powered Optimization) and AEO (Answer Engine Optimization) exist specifically to help your content get recognized, understood, and cited by these models.

This glossary entry will explain what LLMs are and how they work in plain terms - and, most critically, what their rise means for how you structure, write, and present content on your site. No computer science degree required.

Quick Answer

A Large Language Model (LLM) is an AI system trained on massive amounts of text data using deep learning, particularly transformer architecture. It learns to predict and generate human-like text by recognizing patterns across billions of parameters. LLMs like GPT-4, Claude, and Gemini can perform tasks such as writing, translation, summarization, coding, and answering questions. They are pre-trained on broad datasets and can be fine-tuned for specific applications, making them versatile tools across industries including healthcare, education, and software development.

What a Large Language Model Actually Does

At its core, a large language model is a system trained to work with text - it reads an input - a question, a prompt, a piece of content - and produces a response that continues or completes the thought in a way that makes sense. The output feels natural because the model has learned from a giant amount of written language.

Kind of like a very advanced version of the autocomplete feature on your phone. Your phone guesses the next word based on what you have typed before.

It does this by finding patterns in language. During training, the model processes giant amounts of text and learns how words, sentences and ideas relate to each other - it does not memorise facts the way a database does. Instead, it builds a deep understanding of how language fits together, which lets it generate new text that sounds coherent and contextually appropriate.

What goes in is called a prompt - it will be a question, an instruction, some background context, or any combination of these. What comes out is generated text - and the quality of that output depends heavily on how well the input was constructed. For web content specifically, this matters quite a bit because a vague prompt tends to produce a vague response.

It is worth mentioning that LLMs don’t retrieve information the way a search engine does. They generate replies based on learned patterns, which is why they can write a product description, answer a technical question, or summarise a document without looking anything up. That generative ability is what makes them helpful for content work. This is also connected to the rise of zero-click search, where users get answers without ever visiting a website.

The model does not understand language the way a human does - it does not have opinions, intentions, or awareness. What it has is a very refined ability to produce text that fits the context it’s given. That distinction matters when you start to use these tools to create, edit, or optimise content for audiences.

Inputs and outputs are the whole game with LLMs. Shape your inputs well and the outputs become far more helpful - worth keeping in mind as you read on. Tools like the AEO Content Grader can help you evaluate how well your content is structured for this kind of environment.

How LLMs Are Trained and Why the Scale Matters

Training an LLM starts with data - giant amounts of it. Text from books, websites, code repositories, and other written sources gets fed into the model so it can learn patterns in language. The model adjusts its internal settings, called parameters, billions of times until it gets better at predicting what comes next in a sentence.

Parameters are the dials inside a model that get tuned during training. A model like DeepSeek-R1 has 671 billion of them, which sounds staggering. A high parameter count doesn’t automatically mean a model will perform better for your use case - it just means the model has more capacity to store and connect information.

To run training at this scale, you need computing power. Specialized chips run for weeks or months to process all that data and fine-tune those parameters. That’s why, until recently, only a handful of well-funded organizations could build frontier models from scratch.

That’s starting to change. The cost to train a model dropped by nearly 60% between 2020 and 2024, and that single change opened the door for far more teams to build and release their own models. By mid-2024, over 200 open-source LLMs had been released - a number that speaks to just how much the economics of this space have changed.

Open-source models are ones where the underlying code and weights are made publicly available - this lets scientists and developers build on top of them without paying licensing fees or relying on a closed API. The rise of open-source options is a big reason why LLM adoption has spread so quickly across different industries and project types.

A bigger model trained on diverse data tends to perform well across a number of tasks. But a smaller, more focused model trained on specialized data can outperform the bigger one in that area. Scale matters. But so does what the model was trained on and how its training was structured.

Compute, data quality, and architecture decisions all interact during training to shape what a model ends up being capable of. No single factor determines the outcome on its own. That’s why two models with similar parameter counts can perform very differently depending on their design and training strategy.

The Difference Between Popular LLMs on the Market Today

Not all large language models are built the same way or for the same job. Some are designed to manage general tasks like writing and summarization, and others are built to reason through hard problems step by step. The gap in performance between models can be dramatic.

On a math qualifying exam, GPT-4o scored around 13% while OpenAI’s o1 model scored 83% on the same test; it’s not a small difference - it comes down to a basic change in how o1 was trained to think through problems before arriving at an answer. The model you use actually matters quite a bit depending on what you’re trying to accomplish.

OpenAI has two products worth learning about. GPT-4o is their general-purpose model, fast and capable across a number of tasks. The o1 model takes longer to respond because it reasons through problems more carefully, which makes it much stronger for math, logic, and technical work.

DeepSeek-R1 is a model from a Chinese AI lab that drew attention for performing at a competitive level with much lower reported training costs. It’s also open-source, which means developers can download and run it - a real distinction from closed models like GPT-4o, which you can only access through an API or a product interface.

| Model Name | Creator | Key Strength | Open or Closed Source |

|---|---|---|---|

| GPT-4o | OpenAI | General-purpose tasks, speed, multimodal input | Closed |

| o1 | OpenAI | Complex reasoning, math, and logic | Closed |

| DeepSeek-R1 | DeepSeek | Reasoning tasks at lower cost | Open |

| Llama 3 | Meta | Flexible, developer-friendly, widely used | Open |

Meta’s Llama 3 is another open model that has become a favorite for developers who want to build on top of an LLM without paying per-use fees. Open models give teams more control over how the model is deployed and what data it processes.

The open versus closed distinction matters more than it may appear. Closed models are easier to access but have usage limits and costs. Open models take more technical work to set up but give you far more flexibility in how you use them.

Where LLMs Show Up in Search and AI-Powered Answers

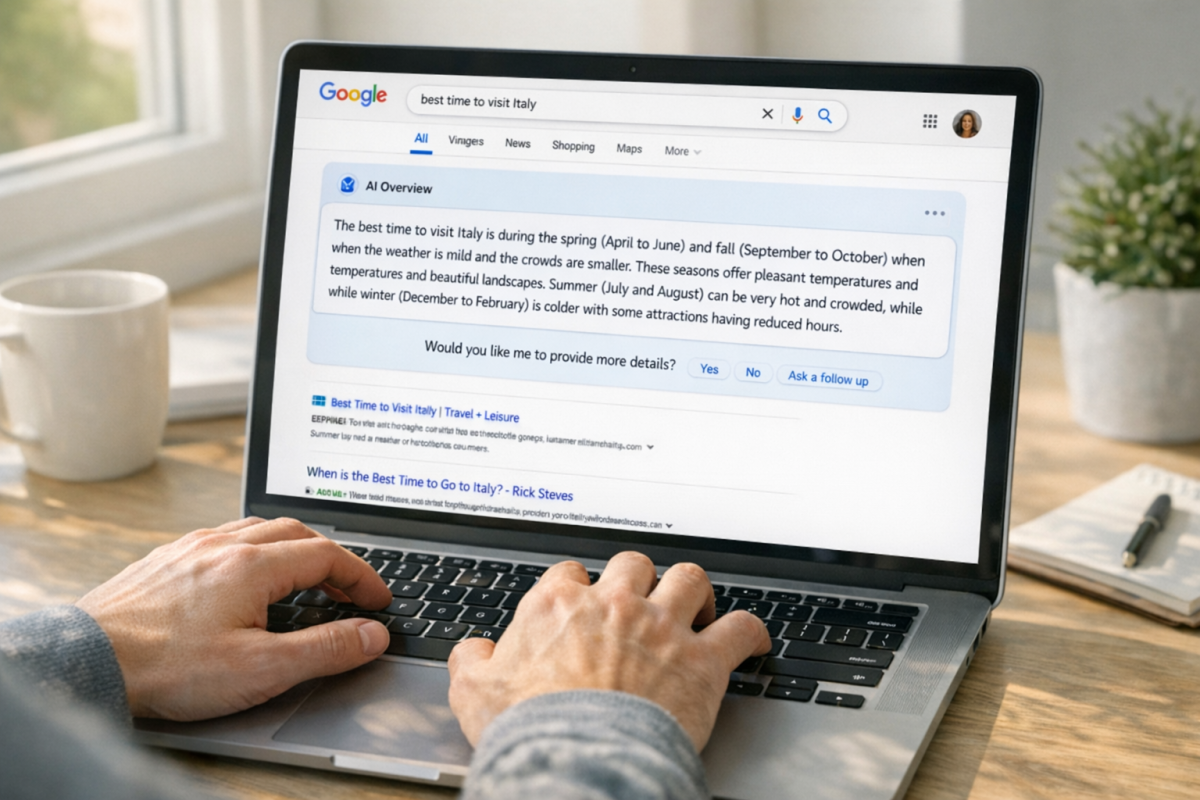

You’ve probably seen that Google search results look different than they used to. At the top of searches, there’s now a block of text that directly answers your question without showing any links; it’s Google’s AI Overviews feature, and an LLM is what generates it.

The same thing happens on tools like Perplexity, which is built entirely around giving you direct answers instead of a list of links. ChatGPT with browsing enabled works in a similar way - it can pull from live web content and wrap the answer into a conversational response. These are all examples of what are called answer engines, and LLMs are the engine underneath them.

What that means in practice is that a user can type a question and get an answer without ever clicking through to a website. The LLM reads across sources, synthesizes the information, and delivers it in plain language. That’s worth sitting with for a bit if you run a website.

Traffic from search has historically come from seeing your page in the results and choosing to click. That path is shorter now for many queries. If an AI tool answers the question directly, the user might not need to go anywhere else. Informational content - the kind built to answer questions - is the most exposed to this change. Other factors can quietly hurt your traffic too, in ways that aren’t always obvious.

That said, not all website traffic works this way. People still click through to read long-form content, to use tools, to make purchases, and to get into things that a quick AI answer can’t replace. The picture is more layered than it’s easy to summarize.

For website owners, the question of visibility is where this gets interesting. If an LLM is generating an answer, it’s pulling from content it has access to and learned from. Some websites get referenced in AI answers and some don’t; it’s not random - it has to do with how the content is written and structured. Understanding how Google reads and evaluates your content matters more than ever in this environment.

Tools like Perplexity do sometimes cite their sources, which means your website can still appear in front of a user even inside an AI-generated answer. Google AI Overviews can also link to sources within the response block. Being readable and usable by these systems is becoming part of what it means to be visible online.

What Website Owners Need to Know About LLM-Readable Content

The way you structure and write your content matters more now than it ever did. LLMs don’t browse your site the way a human does - they pull text and look for direct answers to questions. If your content is dense with tough language or buried under filler paragraphs, it’s less likely to be used as a source.

Consider how your pages answer questions. A page that states an answer near the top, uses plain language, and backs it up with detail is what these systems like to favor. Thin content - pages that talk around a topic without actually explaining it - gets passed over in favor of content that gets to the point.

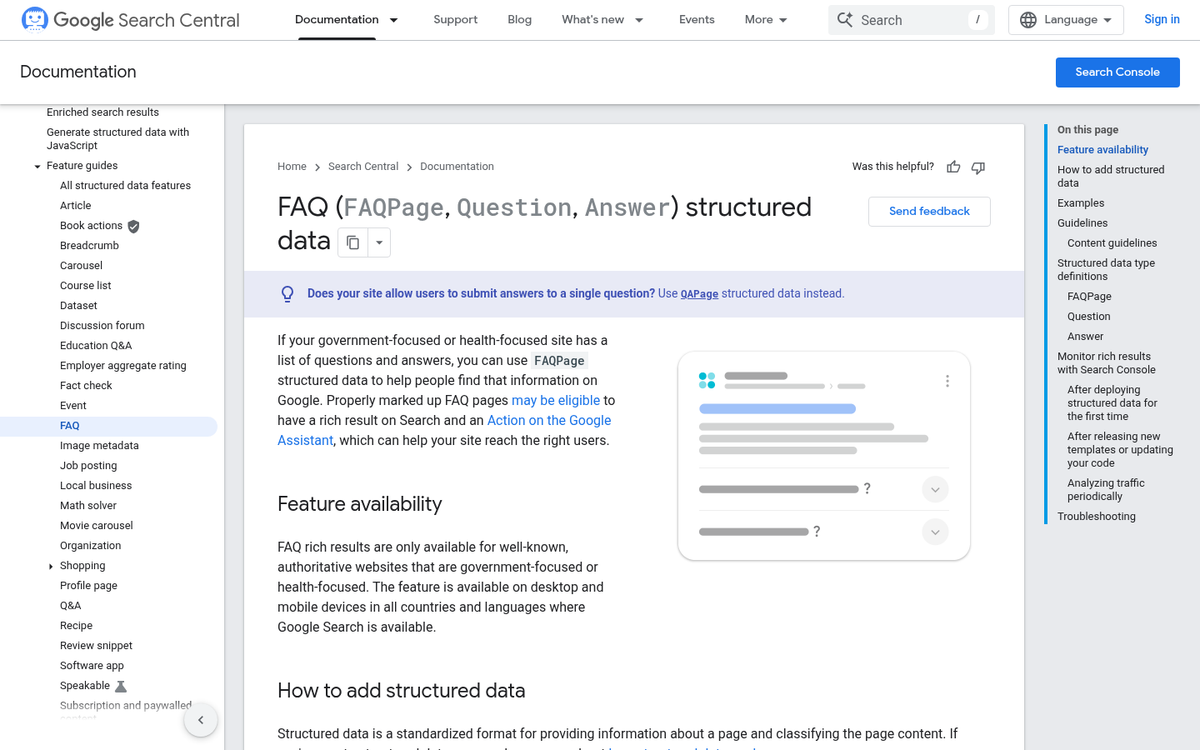

FAQ formatting is worth your attention here. When you structure content as a direct question followed by a direct answer, you make it easy for a model to extract that information cleanly. The same thing goes with schema markup - it helps search engines and AI systems understand what your content is about at a structural level.

E-E-A-T - which stands for Experience, Expertise, Authoritativeness, and Trustworthiness - is another concept to get familiar with. Google developed this framework to review content quality, and it maps closely to what LLMs seem to prioritize too. Content written by someone with knowledge, backed up by credible information, and presented in an honest way is more likely to be surfaced and cited.

One mistake website owners make is to write for search engines the old way - stuffing keywords and repeating phrases. That strategy doesn’t work well with LLMs, which are better at meaning than matching exact words. Writing like a human who actually knows their subject is more helpful.

It’s also worth knowing that this space is starting to be regulated. The EU AI Act is one of the first frameworks to govern how AI systems - like those built on LLMs - are developed and used. That suggests the laws around AI-generated and AI-assisted content will probably become more defined over time.

Schema markup, FAQ structure, and E-E-A-T are all worth looking at more as standalone topics. If you’re also thinking about how to improve how Google understands and displays your site, that’s a related area worth exploring.

Making LLMs Work for Your Website, Not Against It

If you are a website owner thinking about where to start, it helps to:

- Audit your existing content for clarity, structure, and topical authority - LLMs favor sources that answer questions directly and completely.

- Add structured data and schema markup so AI systems can parse and surface your content more easily.

- Write in a format LLMs can cite - concise definitions, clear headings, and factual statements that stand on their own.

- Build topical depth, not just breadth - covering a subject thoroughly signals expertise to both search engines and AI models.

- Stay consistent - fresh, regularly updated content signals that your site is an active and reliable source worth referencing.

The hardest part is not knowing what needs to change - it’s actually executing on it at a pace that keeps up with how fast this space is moving.

FAQs

What is a Large Language Model (LLM)?

A Large Language Model is an AI system trained on massive amounts of text data that can understand, generate, and respond to human language naturally. It powers tools like ChatGPT, Google AI Overviews, and various AI assistants.

How do LLMs differ from traditional search engines?

Unlike search engines that retrieve existing pages, LLMs generate responses based on learned language patterns. They synthesize information and deliver direct answers, meaning users often get responses without clicking through to any website.

What is the difference between open and closed LLMs?

Closed models like GPT-4o are only accessible through paid APIs or products. Open-source models like Meta’s Llama 3 or DeepSeek-R1 allow developers to download and run them freely, offering more flexibility but requiring more technical setup.

How can website owners get cited by LLMs?

Structure content with clear headings, direct answers, and FAQ formatting. Adding schema markup, writing with genuine expertise, and demonstrating E-E-A-T signals increases the likelihood AI systems will surface and cite your content.

Does LLM growth mean less website traffic?

Informational content is most at risk since AI can answer questions directly. However, long-form content, tools, and transactional pages still drive clicks, so the impact depends heavily on your content type and audience intent.