AI tools misrepresenting businesses is not a rare glitch. Business owners, marketers and brand managers are running into this constantly. AI tools like ChatGPT are increasingly becoming the first stop when researching a product, vetting a vendor, or looking up a local service. Customers ask. Job candidates ask. Journalists ask. And what they get back isn’t always accurate - sometimes it’s outdated, sometimes it’s patchy and sometimes it’s just plain wrong in ways that are hard to trace or predict.

The frustrating part is that there’s a reason this happens, and it has nothing to do with your business doing something wrong. ChatGPT doesn’t browse the internet in real time - it was trained on a large snapshot of text from the web and that snapshot has a cutoff date. Whatever information existed about your company before that cutoff - accurate or not - is largely what it has to work with. Then it fills in gaps as best it can, which sometimes means pulling from unreliable sources, conflating similar businesses, or presenting outdated information as if it were current fact.

Understanding why this happens is the first step toward doing something about it. This post breaks down the mechanics behind AI misinformation about businesses, what makes some businesses more vulnerable than others, and what you can do to improve how AI tools represent you.

Key Takeaways

- ChatGPT learns from a fixed web snapshot with a cutoff date, not real-time browsing, making outdated info a persistent problem.

- AI hallucination rates are significant - OpenAI’s o3 model showed a 51% hallucination rate on factual questions in internal testing.

- Businesses with sparse, inconsistent, or recently rebranded online presences are most vulnerable to AI misrepresentation.

- Damage is largely invisible - lost clients, candidates, and media opportunities that never materialize and won’t appear in analytics.

- Consistent NAP details, thorough website content, structured data, and third-party mentions help AI represent your business accurately.

How ChatGPT Actually Learns What It Knows About Your Business

ChatGPT doesn’t go out and look things up when you ask it a question. It draws from a fixed body of text that was collected from the internet before a cutoff date, and once that collection closed, the learning stopped.

Think of it as a very large snapshot of the web at a particular point in time. Everything ChatGPT knows came from that snapshot - news articles, forums, company websites, directories, social media posts, and anything else that was publicly available and indexed at the time.

For a known brand with years of press coverage and a strong business website, that snapshot probably captured a decent amount of accurate information. For a small or mid-sized business with a limited web footprint, the picture looks very different.

If your website was thin on detail, hadn’t been updated in a while, or wasn’t well-indexed by the time that data was grabbed, ChatGPT may have learned almost nothing about you. Worse, it may have pieced together a version of your business from incomplete sources - an old directory listing, a generic industry description, or a quick mention somewhere that doesn’t line up with what you do.

That’s where the issue starts. ChatGPT is built to generate fluent, confident replies even when the underlying information is patchy. So instead of saying “I don’t know much about this company,” it fills in the blanks - and the result can look plausible while being factually off.

Your business description, the services you sell, your location, your team size - it’s only as accurate as what the model was able to absorb during training. And if what existed online at that time was thin, inconsistent, or scattered across unreliable sources, that’s the foundation ChatGPT is working from every time someone asks about you.

The Real Numbers Behind AI Hallucinations

A New York Times investigation found that AI chatbots invent information between 3% and 27% of the time. That is a wide range, and where a given answer falls depends on the model and the type of question being asked.

The numbers get harder to ignore when you look at individual models. OpenAI’s own System Card for its o3 model reports a 51% hallucination rate on factual questions. That means, in testing, the model got things wrong more than half the time when answering questions about real-world facts.

Older models aren’t much better. GPT-3.5 fabricated citations at a rate of around 39.6%, and while GPT-4 brought that down to 28.6%, a separate academic study found GPT-4o still showed fabricated citations 20% of the time and introduced errors in 45% of the citations it did pull. Improvement is happening, but slowly.

| AI Model | Hallucination/Error Rate | Source |
|---|---|---|
| GPT-3.5 | ~39.6% (citation fabrication) | Published research |
| GPT-4 | ~28.6% (citation fabrication) | Published research |
| GPT-4o | 20% fabricated citations, 45% errors in real ones | Academic study |
| o3 (OpenAI) | 51% hallucination rate on factual queries | OpenAI System Card |

AI hallucinations are a documented pattern that the people who build these tools have openly acknowledged - not a rare glitch that slips through once in a while. The model doesn’t know what it doesn’t know, so it fills gaps with something that sounds plausible instead of admitting uncertainty.

For businesses, that distinction matters. When a user asks ChatGPT about your hours, your services, or your team and the model has patchy data to draw from, it doesn’t pause to flag the gap - it generates an answer anyway. Understanding how AI retrieval models decide what to cite can help explain why these errors happen so consistently.

Why Sparse or Scattered Online Presence Makes It Worse

Not every business gets the same treatment from AI. Some get described accurately enough, while others are described with wrong service lists, outdated locations, or facts that belong to a different business. The gap usually depends on how much steady, reliable information exists online about you.

When an AI model doesn’t have enough data to work with, it fills the gaps by pulling from whatever is nearby: businesses in the same industry, similar-sounding names, or old listings that haven’t been touched in years. It’s not making things up out of nowhere; it’s extrapolating, which is almost worse because the result looks plausible.

Conflicting signals make this much harder to get right. If your Google Business Profile lists one set of services, your website describes something slightly different, and your LinkedIn page hasn’t been updated since a rebrand two years ago, the AI has to make a judgment call - it will pick something, and it won’t always pick correctly.

Local businesses and niche service providers tend to get hit hardest by this. A national chain with thousands of mentions across the web gives an AI model quite a bit to cross-reference. A regional accounting firm or a specialty contractor may have a handful of directory listings and a modest website - that’s it. There’s just less to anchor the model to what’s actually true about you.

Recently rebranded businesses are in an especially tough position. The old name, the old logo, and the old service descriptions are still out there on cached pages and third-party directories. The AI sees the history alongside the present and has no reliable way to know which version is current.

The businesses most vulnerable to AI misrepresentation are usually the ones with the least control over how their information spreads - and the least visibility into where it ends up.

The Business Cost of Being Misrepresented by AI

A potential client asks ChatGPT to pull up basic facts about your business before a discovery call - it comes back with the wrong services, an old location, or a description that sounds nothing like what you do. That client may never tell you what they found - they just go quiet.

That’s where the damage happens. The harm is not embarrassing press coverage or a public complaint you can respond to - it’s silent attrition: people who were interested and then weren’t, with no trace of why. This kind of invisible drop-off is similar to why landing page bounce rates run high - the user leaves without explanation.

Job seekers do this too. Before accepting an offer from you, candidates ask AI tools to describe a company’s culture, size, and focus areas. If ChatGPT paints an outdated or inaccurate picture, a strong candidate might deprioritize you without ever visiting your careers page. You lose talent you didn’t even know you were competing for.

Journalists and researchers use AI for background research before they reach out. If your business description is vague or contradictory, you might get passed over for a feature or a quote - or worse, get included with wrong facts that reach a wider audience. Publishing a press release the right way can help establish accurate, authoritative information that AI tools are more likely to surface correctly.

Wrong operating hours have a more immediate cost. A customer who asks ChatGPT when you’re open and gets the wrong answer may show up at a closed location and not come back - it’s a lost sale tied directly to a data error you had no hand in creating.

The tough part is that none of this shows up cleanly in your analytics. There’s no “lost due to AI misinformation” column in your CRM. The harm accumulates in the background across sales conversations, hiring pipelines, and media opportunities that never materialize. Understanding which pages are actually driving sales can at least help you identify where that silent drop-off is costing you most.

What makes this worth addressing is the scale. AI tools now field a large number of questions about businesses every day, and the people asking treat the answers as reliable starting points.

What You Can Do to Make Your Business Easier for AI to Get Right

The good news is that you don’t need a developer or a big budget to start fixing this. A lot of it comes down to making your business information consistent and easy to find across the web.

Start with your NAP - your business name, address, and phone number. These facts need to match across every directory, social profile, and listing you appear on. Even small differences, like “St.” versus “Street,” can create uncertainty for AI systems that pull from multiple sources to piece together who you are.
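If you want to check this yourself, the comparison can be sketched in a few lines of Python. This is a minimal illustration, not a finished tool: the listing data, the `ABBREVIATIONS` map, and the function names are all made up for the example, and you would paste in your own details by hand. It sorts each NAP field into "consistent" (identical everywhere), "cosmetic" (differences like “St.” versus “Street” that disappear after normalizing), or "conflict" (genuinely different values).

```python
import re

# Hypothetical abbreviation map; extend it for the address styles you use.
# Note: "st" can also mean "saint", so review the results by hand.
ABBREVIATIONS = {
    "st": "street",
    "ave": "avenue",
    "rd": "road",
    "blvd": "boulevard",
    "ste": "suite",
}


def normalize_text(value: str) -> str:
    """Lowercase, replace punctuation with spaces, expand common abbreviations."""
    words = re.sub(r"[^\w\s]", " ", value.lower()).split()
    return " ".join(ABBREVIATIONS.get(word, word) for word in words)


def normalize_phone(value: str) -> str:
    """Keep digits only so formatting differences are not counted as conflicts."""
    return re.sub(r"\D", "", value)


def audit_nap(listings: dict[str, dict[str, str]]) -> dict[str, str]:
    """Classify each NAP field as 'consistent', 'cosmetic', or 'conflict'."""
    report = {}
    for field in ("name", "address", "phone"):
        normalizer = normalize_phone if field == "phone" else normalize_text
        raw_values = {listing[field].strip() for listing in listings.values()}
        normalized_values = {normalizer(value) for value in raw_values}
        if len(raw_values) == 1:
            report[field] = "consistent"
        elif len(normalized_values) == 1:
            report[field] = "cosmetic"
        else:
            report[field] = "conflict"
    return report


# Made-up example data: one entry per place your business is listed.
listings = {
    "website": {
        "name": "Acme Plumbing",
        "address": "12 Main Street, Suite 4",
        "phone": "(555) 010-2000",
    },
    "directory": {
        "name": "Acme Plumbing",
        "address": "12 Main St., Ste 4",
        "phone": "555-010-2000",
    },
    "social": {
        "name": "Acme Plumbing Co",
        "address": "12 Main Street, Suite 4",
        "phone": "555.010.2000",
    },
}

print(audit_nap(listings))
# {'name': 'conflict', 'address': 'cosmetic', 'phone': 'cosmetic'}
```

Anything marked "conflict" is worth fixing first. "Cosmetic" differences are lower priority, but since even small variations can create uncertainty, it is still worth settling on one exact spelling and using it everywhere.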

Your own website is also one of the most reliable sources AI can draw from, so it pays to be thorough. Write clear descriptions of what your business does, who it serves, and where it operates. Don’t assume AI will infer things that aren’t written out. The more complete and accurate your content is, the more AI has to work with.

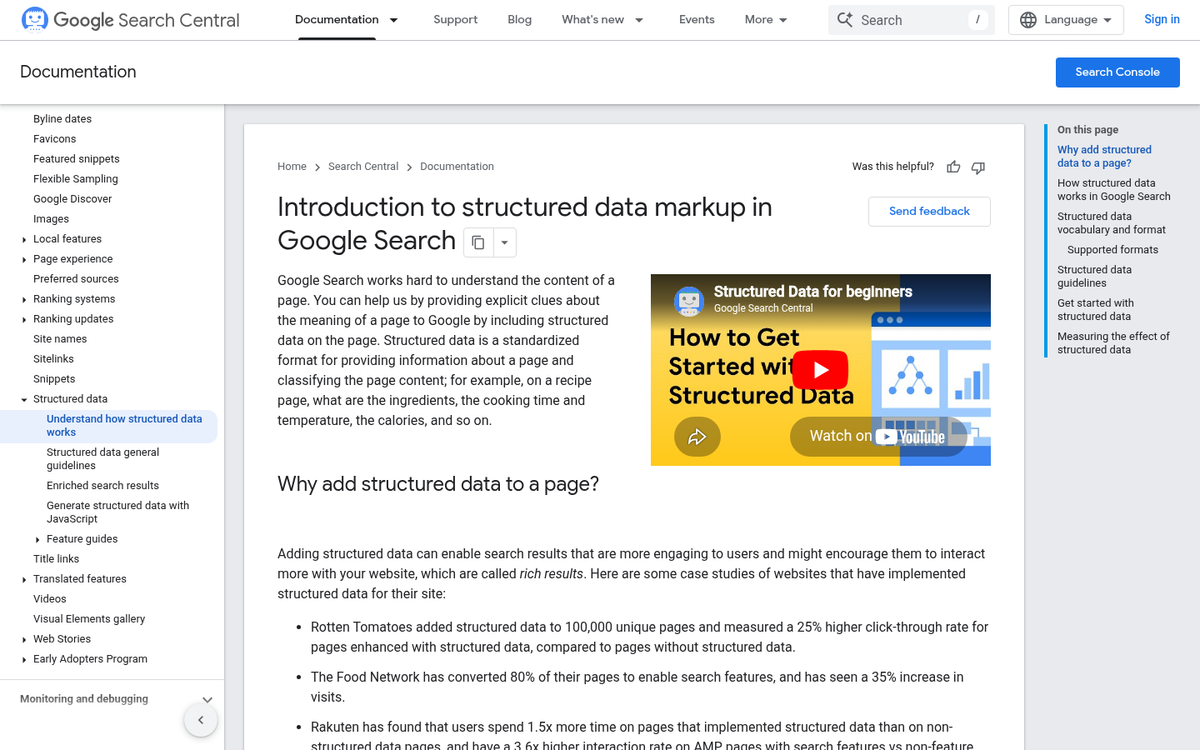

Structured data is worth a mention here as well - it’s a type of code you can add to your website that labels your information in a way search engines and AI tools understand. Many website platforms let you add it through a plugin, so it’s less technical than it sounds.
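To make that concrete, here is a rough sketch of what structured data can look like, using the schema.org `LocalBusiness` vocabulary in JSON-LD format. The Python function below is a hypothetical helper written for this example, and the business details are invented. It produces a `<script>` tag you could paste into a page’s HTML. A plugin on your platform may generate something similar for you, and whether a given AI tool actually reads this markup is not guaranteed.

```python
import json


def build_local_business_jsonld(
    name: str,
    url: str,
    phone: str,
    street: str,
    city: str,
    region: str,
    postal_code: str,
    country: str,
    opening_hours: list[str],
) -> str:
    """Return a JSON-LD <script> tag describing a business with schema.org terms."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "telephone": phone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
            "addressCountry": country,
        },
        "openingHours": opening_hours,
    }
    # Escape "</" so a stray "</script>" inside a value cannot end the tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Invented example values; replace them with your real, consistent NAP details.
print(
    build_local_business_jsonld(
        name="Acme Plumbing",
        url="https://www.example.com",
        phone="+1-555-010-2000",
        street="12 Main Street, Suite 4",
        city="Springfield",
        region="IL",
        postal_code="62701",
        country="US",
        opening_hours=["Mo-Fr 08:00-17:00", "Sa 09:00-13:00"],
    )
)
```

The name, address, and phone number here should match your other listings exactly, so this markup reinforces the same facts rather than adding one more variation.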

Press coverage and third-party mentions also help. When credible external sources reference your business accurately, it reinforces the information AI pulls from. A local news mention or an industry directory listing can quietly do a lot of work.

One more thing - if you find that ChatGPT is saying something wrong about your business, you can flag it directly inside the tool. There’s a thumbs down button on any response, and you can leave a note explaining what’s inaccurate. It’s a small action, but it feeds into how these systems get corrected over time.

Your Business Deserves to Be Described Correctly

Being misrepresented by AI tools is only going to matter more. AI tools are becoming a default starting point for how people research businesses, and that trend isn’t reversing. The businesses that will be described accurately in those results aren’t necessarily the biggest or most established - they’re the ones whose information is steady, current, and well-represented across the web.

You don’t need to overhaul everything at once. But if there’s a difference between who your business actually is and what the internet says about it, that gap is worth closing. The work you put into your business website now quietly shapes how AI will talk about you tomorrow - and how much trust you’ll earn from the people it reaches.