For website owners and managers, this distinction is not abstract. If your content is well-structured, factually clear, and authoritative, AI systems are more likely to pull from it directly when constructing answers. If it’s vague, inconsistent, or poorly organized, the model may skip it entirely - or worse, paraphrase it inaccurately. Content grounding is the mechanism that determines whether your site becomes a trusted source an AI cites or background noise it ignores.

This matters because answer engines like Perplexity, ChatGPT with browsing, and Google’s AI Overviews are increasingly the first stop for information - not the ten blue links that follow. Optimizing for these systems means understanding how they choose what to trust. Grounding is at the core of that choice. The more your content signals reliability, specificity, and factual accuracy, the more likely it is to be anchored into an AI’s response.

What follows breaks down what content grounding means in practice, why it has become central to Answer Engine Optimization, and the concrete steps you can take to make your content the kind that AI systems are built to use.

Quick Answer

Content grounding is the practice of anchoring AI-generated responses to specific, verified source material rather than relying solely on the model's training data. It reduces hallucinations by constraining outputs to provided documents, databases, or retrieval results. This is commonly implemented through Retrieval-Augmented Generation (RAG), where relevant content is fetched and supplied as context. Grounded responses are more accurate, traceable, and trustworthy, as claims can be traced back to source documents. It is essential in enterprise applications where factual accuracy and citation are critical.

What Content Grounding Actually Does Inside an AI System

Most AI models are trained on large amounts of text and then basically frozen in time. When asked a question, the model pulls from what it learned during training - which means its knowledge has a cutoff date and no connection to what’s going on right now. Content grounding changes that by giving the model a live line to external sources.

Instead of relying only on training data, a grounded AI can reach out to things like real-time search results, structured databases, or verified data repositories at the moment a query comes in. The response gets built around that retrieved information instead of a static memory of the world. That’s why grounded answers tend to be more accurate and up-to-date than ungrounded ones.

The mechanics work roughly like this: the system turns the question into a query, pulls relevant information from an external source, and then uses that information to shape its output. It’s a retrieve-then-respond process instead of a remember-then-respond one, and that distinction matters more than it looks.
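To make the retrieve-then-respond idea concrete, here is a minimal sketch in Python. It is an illustration, not how any particular answer engine works: the `Source` class, the word-overlap scoring, the `example.com` URLs, and the sample text are all assumptions made up for this example, and real systems typically use search indexes or embeddings instead of simple word matching.

```python
import re
from dataclasses import dataclass


@dataclass
class Source:
    url: str   # hypothetical URL, for illustration only
    text: str  # placeholder page content, not real data


def tokenize(text: str) -> set[str]:
    """Lowercase a string and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, sources: list[Source], top_k: int = 2) -> list[Source]:
    """Score each source by shared words with the query and keep the best matches."""
    query_tokens = tokenize(query)
    scored = [(len(query_tokens & tokenize(s.text)), s) for s in sources]
    scored = [pair for pair in scored if pair[0] > 0]  # drop sources with no overlap
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [source for _, source in scored[:top_k]]


def build_grounded_prompt(query: str, retrieved: list[Source]) -> str:
    """Place the retrieved text in front of the question so the answer can cite it."""
    if not retrieved:
        return f"No supporting sources were found for: {query}"
    context = "\n".join(
        f"[{i}] {s.url}: {s.text}" for i, s in enumerate(retrieved, start=1)
    )
    return (
        "Answer using only the numbered sources below and cite them.\n"
        f"{context}\n"
        f"Question: {query}"
    )


# Placeholder pages: one makes a specific claim, the others do not match the question.
SOURCES = [
    Source("https://example.com/pricing",
           "The starter plan costs 12 dollars per month as of March 2024."),
    Source("https://example.com/about",
           "Our team is based in Lisbon and was founded in 2019."),
    Source("https://example.com/blog",
           "Many users find our product helpful."),
]

question = "How much does the starter plan cost per month?"
print(build_grounded_prompt(question, retrieve(question, SOURCES)))
```

In this toy version, only the page that commits to a specific fact shares enough words with the question to be retrieved. The vague page never makes it into the prompt, which mirrors the point of this section in miniature.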

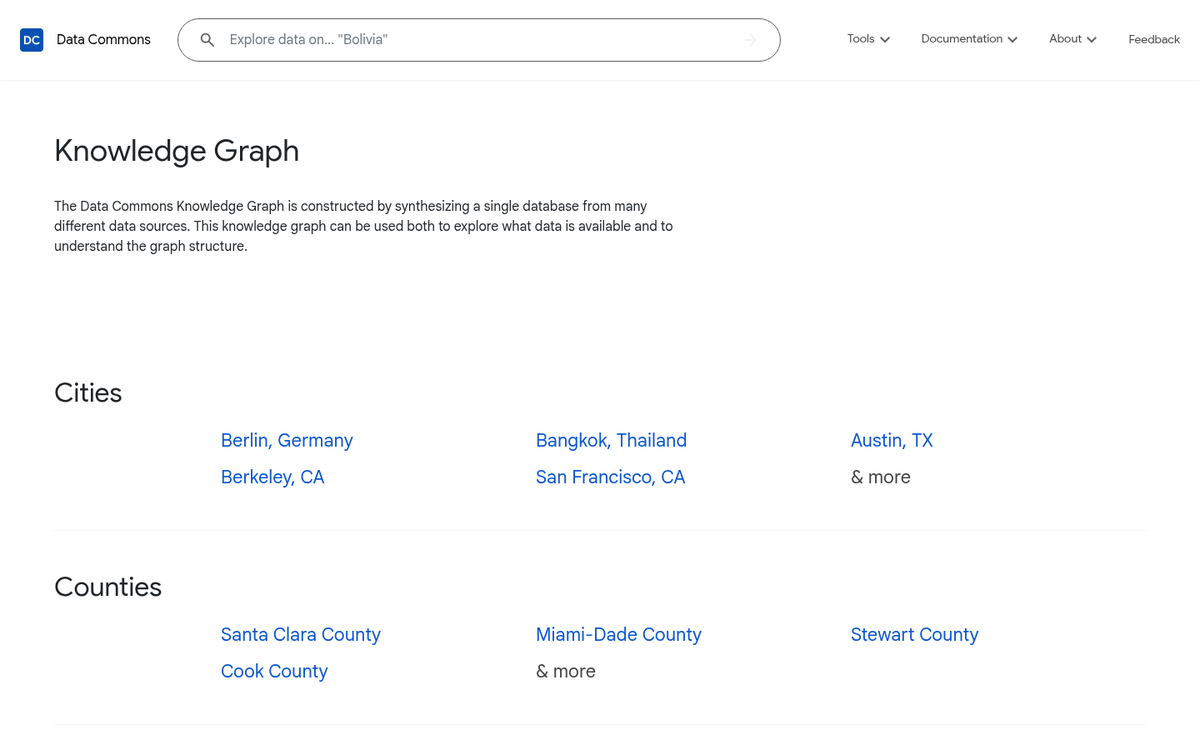

Google’s DataGemma project is a real-world example of grounding at scale. It connects language models to Google’s Data Commons, which holds around 250 billion data points covering topics like public health, economics, and demographics. Rather than guessing at statistics or approximating figures from training data, the model can pull verified numbers, and that produces a meaningfully different answer.

Grounding also helps with a problem called hallucination, where a model generates plausible-sounding information that isn’t actually true. When a model has access to a concrete, external source to reference, it has something to anchor its response to. That anchor leaves less room for invented details to creep in. This same challenge with accuracy comes up in content creation too - for instance, articles written without proper research can seriously hurt your SEO.

Grounding is not one single technique. Some systems use retrieval-augmented generation, where documents get pulled in and passed to the model as context. Others connect to structured knowledge graphs or live APIs. The underlying goal is the same across all of them: to give the model something to work from, instead of leaving it to reason from memory alone.

Why Ungrounded Content Gets Skipped by Answer Engines

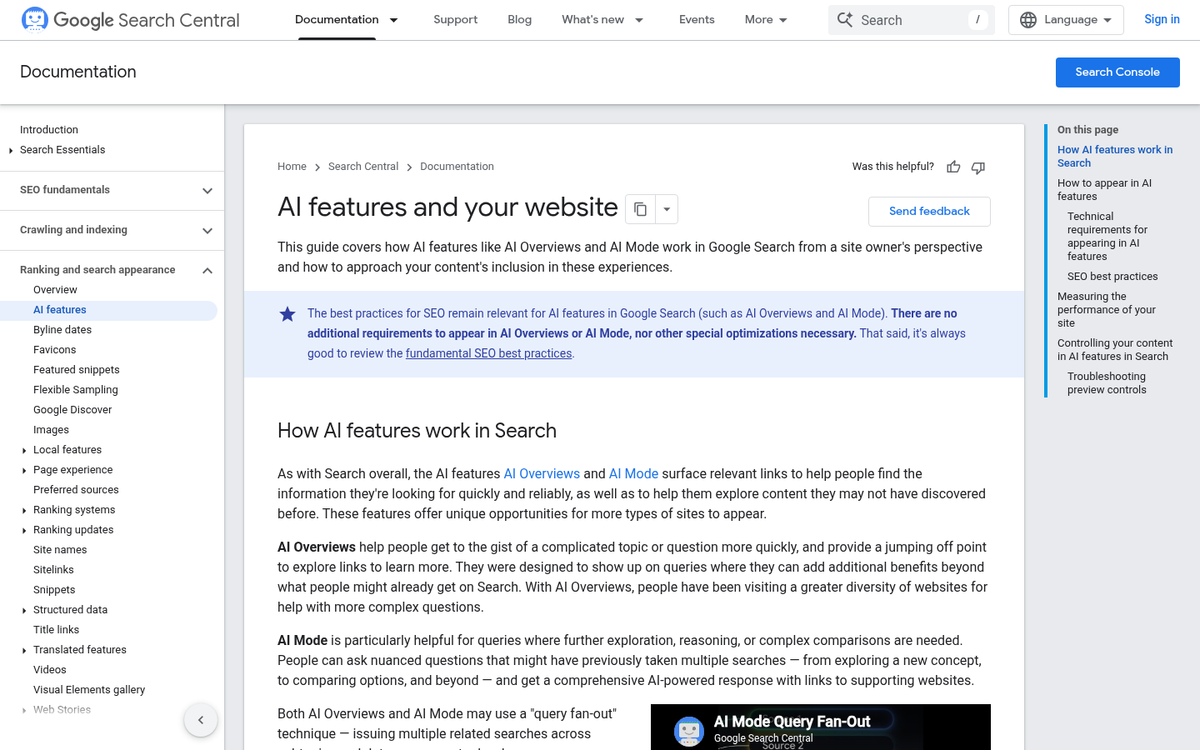

Answer engines like Google’s AI Overviews don’t just read content - they evaluate it. When a piece of content makes vague claims or skips context, the system has no way to verify what it’s reading. And content it can’t verify is content it won’t use.

This matters more than most website owners realize. The filtering isn’t accidental or arbitrary. These systems are specifically built to favor content that can be cross-referenced against something - a data point, a named source, a concrete example. Vague writing doesn’t pass that test.

Consider what ungrounded content actually looks like in practice. A blog post that says “many experts agree” without naming any. A product page that calls something “the best solution available” with nothing to back that up. These phrases feel harmless to a human reader but signal unreliability to an AI system that’s trying to construct an honest answer. Using quotes in articles can actually help ground your content when attributed properly.

Google Research found that business AI systems using grounded content showed 30-50% higher accuracy compared to those relying on unverified inputs. That gap illustrates how much the presence or absence of grounding changes what an AI can confidently return as an answer.

There’s a practical reason answer engines work this way. When someone asks a question and gets an AI-generated response, that response carries implied authority. The systems generating those answers are designed to protect that authority by sourcing from content that has verifiable backing.

Ungrounded content also falls apart under specificity. A user may ask a question and a vague post might touch on the topic - but if the content never commits to a concrete position or fact, the AI has nothing to extract and attribute. It moves on to something it can use. Understanding how originality standards apply to blog content is part of writing in a way that holds up to scrutiny.

| Content Type | Example | AI Usability |
|---|---|---|
| Vague claim | “Many users find this helpful” | Low - nothing to verify |
| Grounded claim | “In a 2023 survey of 500 users, 78% reported improvement” | High - specific and attributable |
| Opinion without context | “This is the best approach” | Low - no supporting basis |
| Supported position | “Industry guidelines from NIST recommend this approach” | High - traceable to a source |

The table above shows how small changes in phrasing can determine whether content is usable to an AI system. Writing more isn’t the goal - writing with something to stand on is.

How to Ground Your Own Content for AI Visibility

The good news is that grounding your content is mostly about habits you can build into your writing process. Start with source citations and make them visible. When you reference a statistic or a claim, link directly to the original source instead of leaving readers to take your word for it.

Dates matter more than you might think. AI systems that pull from live web content - like Gemini’s Grounding with Google Search, which connects model replies to real-time pages - will favor content that shows when it was written or last updated. A page with no date is harder to trust as current, so add a visible publish date and refresh the content when the underlying facts change. If you’re wondering whether adjusting dates could affect how your content is perceived, it’s worth reading about whether backdating an article looks dishonest to Google.

This is especially worth the effort for content that sits close to an AI model’s training cutoff date. Information that was accurate in early 2024 may already be stale, and a model pulling live results will rank a fresher, well-attributed page above one that reads as outdated.

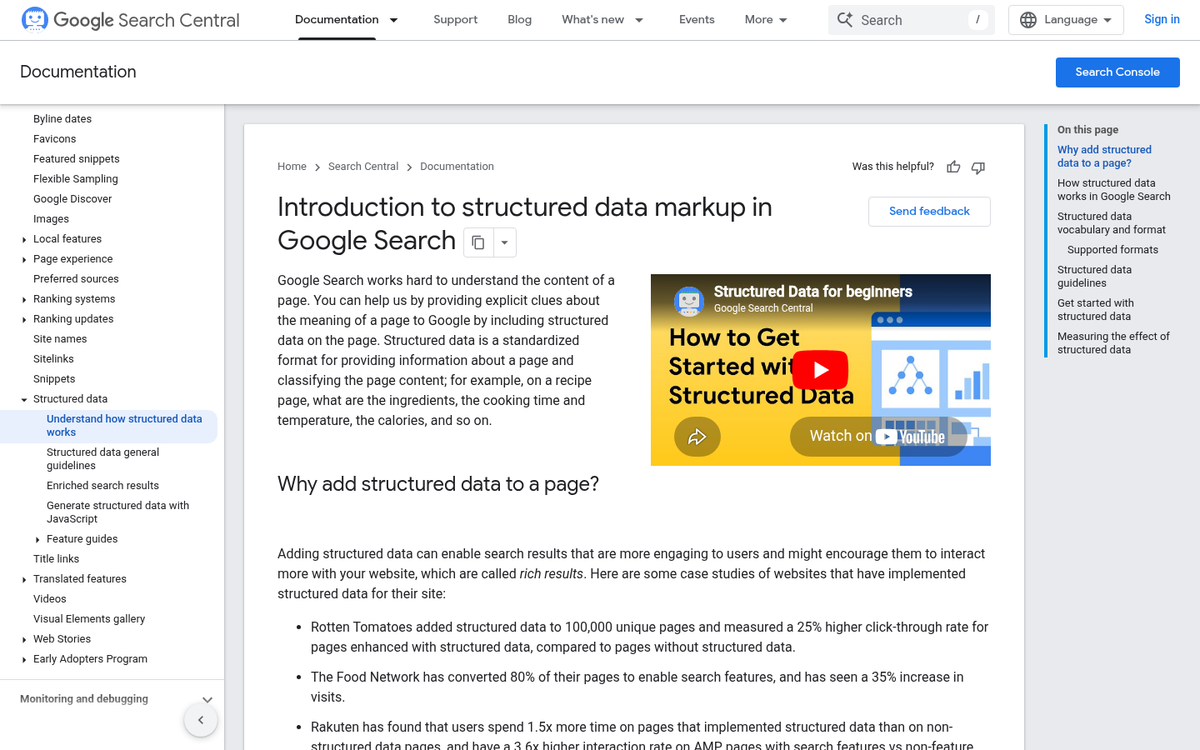

Structured markup is the other lever to pull. Applying schema to your pages gives AI systems a way to read your content with confidence instead of having to interpret it. Think of it as labeling your information so nothing gets lost in translation. The next section goes deeper on this, but even basic Article or FAQ schema puts you ahead of pages with no markup at all.

Here is a quick look at how grounded and ungrounded content differ at the signal level.

| Content Signal | Grounded | Ungrounded |
|---|---|---|
| Source citations | Present and linked | Missing or vague |
| Data recency | Current and dated | Outdated or undated |
| Structured markup | Schema applied | No structured data |

None of these steps demand a full site overhaul. You can start small by updating your most-visited pages to include a date, a linked source for any data you cite, and a basic schema tag.
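As a rough illustration of the date, source, and schema steps together, the Python sketch below builds a basic schema.org `Article` block in JSON-LD. The function names, the headline, the author, the dates, and the cited URL are all placeholders invented for this example. Only the property names (`headline`, `author`, `datePublished`, `dateModified`, `citation`) come from the schema.org vocabulary.

```python
import json
from datetime import date


def article_jsonld(headline, author_name, published, modified, citations):
    """Return a JSON-LD string describing an article, its dates, and its cited sources."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": published.isoformat(),  # when the page first went live
        "dateModified": modified.isoformat(),    # when the facts were last refreshed
        "citation": list(citations),             # URLs of the sources the page relies on
    }
    return json.dumps(data, indent=2)


def as_script_tag(jsonld):
    """Wrap the JSON-LD in the script tag that goes into the page's HTML."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


# All values below are placeholders for illustration.
markup = article_jsonld(
    headline="What Is Content Grounding?",
    author_name="Jane Doe",
    published=date(2024, 1, 15),
    modified=date(2024, 6, 3),
    citations=["https://example.com/original-study"],
)
print(as_script_tag(markup))
```

Most site platforms and SEO plugins can generate markup like this for you, so treat the script as a way to see what the output should contain before you paste it into a page template.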

Pages that get surfaced in AI answers tend to be the ones that make verification easy. When your content cites its sources and signals its own freshness, it gives answer engines something concrete to work with. Tools that help you see how your blog posts are being shared and engaged with can also point you toward which pages deserve that grounding treatment first.

Structured Data and Knowledge Graphs as Grounding Signals

Schema markup is one of the most direct ways to connect your content to something an AI system can verify.

AI systems do more than read your words. They can also cross-reference claims against knowledge bases and entity graphs to assess how credible a source is. If your content mentions a person, organization, or concept that appears in a recognized knowledge graph, that connection can add weight to what you’ve written.

Systems like Google’s Data Commons work as grounding layers for this reason. They hold structured, verifiable information about real-world entities, and AI models can use that foundation to validate or challenge content they see elsewhere. The more your content connects to this infrastructure, the more reliable it looks to an AI.

A question worth sitting with: does the subject of your content exist as a named, verifiable thing in any knowledge graph? Most site owners have never asked this. A local business, an author, a research institution - these can all be represented as entities in places like Wikidata or Google’s Knowledge Graph, and that representation matters more than most people realize.

Even a small website can make these connections. You don’t need to be a big brand to add schema markup that links your business to a recognized entity, or to get a Wikidata entry created for a real-world subject your site covers in depth. These are helpful steps that put your content into a web of verifiable relationships instead of leaving it to stand alone. Whether you’re running a Squarespace blog or a self-hosted WordPress site, these technical additions are worth prioritizing early.
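Linking a business to a recognized entity usually comes down to the schema.org `sameAs` property, which points at other pages describing the same thing. Here is a small Python sketch of that idea. The business name, the site URL, and the Wikidata address are placeholders (the `Q_PLACEHOLDER` identifier is deliberately not a real entry), and the helper function is an assumption written for this example.

```python
import json
from urllib.parse import urlparse


def organization_jsonld(name, url, same_as):
    """Return JSON-LD that ties an organization to external profiles of the same entity."""
    for link in same_as:
        parsed = urlparse(link)
        # Reject anything that is not a full web address, since sameAs expects URLs.
        if parsed.scheme not in ("http", "https") or not parsed.netloc:
            raise ValueError(f"sameAs entries must be full URLs, got: {link!r}")
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2)


# Placeholder values: swap in your own business details and real entity pages.
print(organization_jsonld(
    name="Example Bakery",
    url="https://example.com",
    same_as=["https://www.wikidata.org/wiki/Q_PLACEHOLDER"],
))
```

The same pattern works for a `Person` type when you want to connect an author to their profiles elsewhere on the web.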

Entity relationships matter too, such as the link between an author and the organization that publishes their work. AI systems use these relationships to build context around content, which makes it easier to trust and use as a grounding source.

Structured data is one of the few areas where technical investment and content strategy overlap. Having your entities recognized and your relationships mapped out is work that pays off quietly but consistently in how AI models interpret your site. It’s also worth combining this with broader efforts to promote your blog so that recognized entities have more chances to appear in verifiable contexts across the web.

Make Your Content Something an AI Can Stand Behind

A few focused actions go a long way toward making your content more citable starting today:

- Audit your highest-traffic pages for unsupported claims and add citation links where facts appear

- Replace vague qualifiers like “many experts say” with named sources and verifiable references

- Check that statistics, dates, and figures include the original source, not just the number

- Review any evergreen content that may be citing outdated studies or pointing to broken links
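The first three checks in the list above can be partly automated. The Python sketch below is one rough way to do it, under stated assumptions: the phrase list is a small made-up sample, the statistic check only looks for percentages, and a "linked source" is detected simply by the presence of a URL or a markdown link in the same paragraph. Treat the output as a list of places to look, not a verdict.

```python
import re

# Sample phrases only; extend this list with the qualifiers your own writers overuse.
VAGUE_PHRASES = [
    "many experts",
    "many users",
    "studies show",
    "the best solution",
]

STAT_PATTERN = re.compile(r"\b\d+(?:\.\d+)?\s?%")  # matches figures like 78% or 4.5 %
LINK_PATTERN = re.compile(r"https?://|\]\(")        # a bare URL or a markdown link


def audit_paragraph(paragraph: str) -> list[str]:
    """Return a list of grounding problems found in one paragraph of text."""
    findings = []
    lowered = paragraph.lower()
    for phrase in VAGUE_PHRASES:
        if phrase in lowered:
            findings.append(f"vague qualifier: '{phrase}'")
    # A percentage with no link anywhere in the paragraph is likely unsupported.
    if STAT_PATTERN.search(paragraph) and not LINK_PATTERN.search(paragraph):
        findings.append("statistic without a linked source")
    return findings


# Placeholder paragraphs for illustration.
samples = [
    "Many experts agree this works.",
    "In our survey, 78% reported improvement.",
    "In our survey, 78% reported improvement ([source](https://example.com/survey)).",
]
for text in samples:
    print(text, "->", audit_paragraph(text) or "no issues flagged")
```

Running this over your highest-traffic pages first gives you a short, concrete to-do list instead of a vague intention to "add more sources."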

Grounding is a reflection of how seriously you take your own content - not a technical box to check. When you support a claim, you are signaling confidence in what you publish. That signal matters to readers, and it matters to the AI systems increasingly responsible for picking what gets found. The content that earns trust is the content that shows its work - and there’s no better time to start than now.

FAQs

What is content grounding in AI systems?

Content grounding is the mechanism that connects AI models to external, verifiable sources instead of relying solely on training data. It allows AI systems to retrieve real-time, accurate information when constructing answers, reducing hallucinations and improving response reliability.

Why does ungrounded content get skipped by answer engines?

Answer engines skip ungrounded content because they cannot verify vague or unsupported claims. AI systems are built to favor content that can be cross-referenced against concrete data, named sources, or specific examples. Content without these signals is simply passed over.

How can I ground my content for better AI visibility?

Add visible source citations, include publish and update dates, and apply structured schema markup to your pages. Replacing vague qualifiers with named sources and specific data points signals reliability to AI systems and increases the likelihood your content gets cited.

How does structured data help with content grounding?

Schema markup and knowledge graph connections give AI systems a verified framework to interpret your content. Linking your entities to recognized sources like Wikidata or Google's Knowledge Graph places your content within a web of verifiable relationships, making it more trustworthy to AI models.

Does content grounding help reduce AI hallucinations?

Yes. When an AI model has a concrete external source to reference, it has an anchor for its response. That anchor significantly reduces the space for invented or inaccurate information to appear in AI-generated answers.