It's less common than it used to be, but now and then, when you're shopping around for an SEO service to manage your website, you might see something along the lines of "submits you to 100s of search engines!" as a selling point. You might think this is a great deal. After all, everyone only ever talks about Google, but if there are hundreds of search engines out there, you could bring in a lot of traffic from places other people don't know about.

There are a few problems with this plan.

Key Takeaways

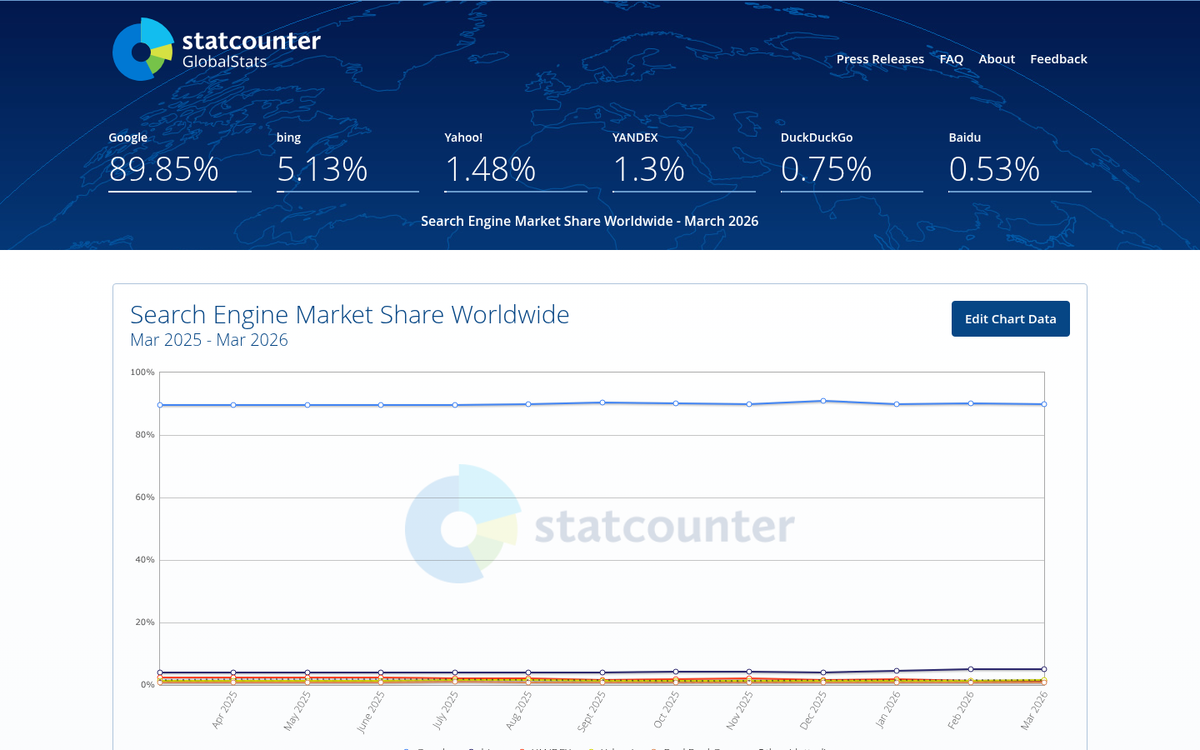

- Google holds over 90% of global search market share, making submissions to hundreds of other search engines essentially worthless.

- Google's automated bots constantly crawl and index the web, making manual site submissions largely unnecessary and obsolete.

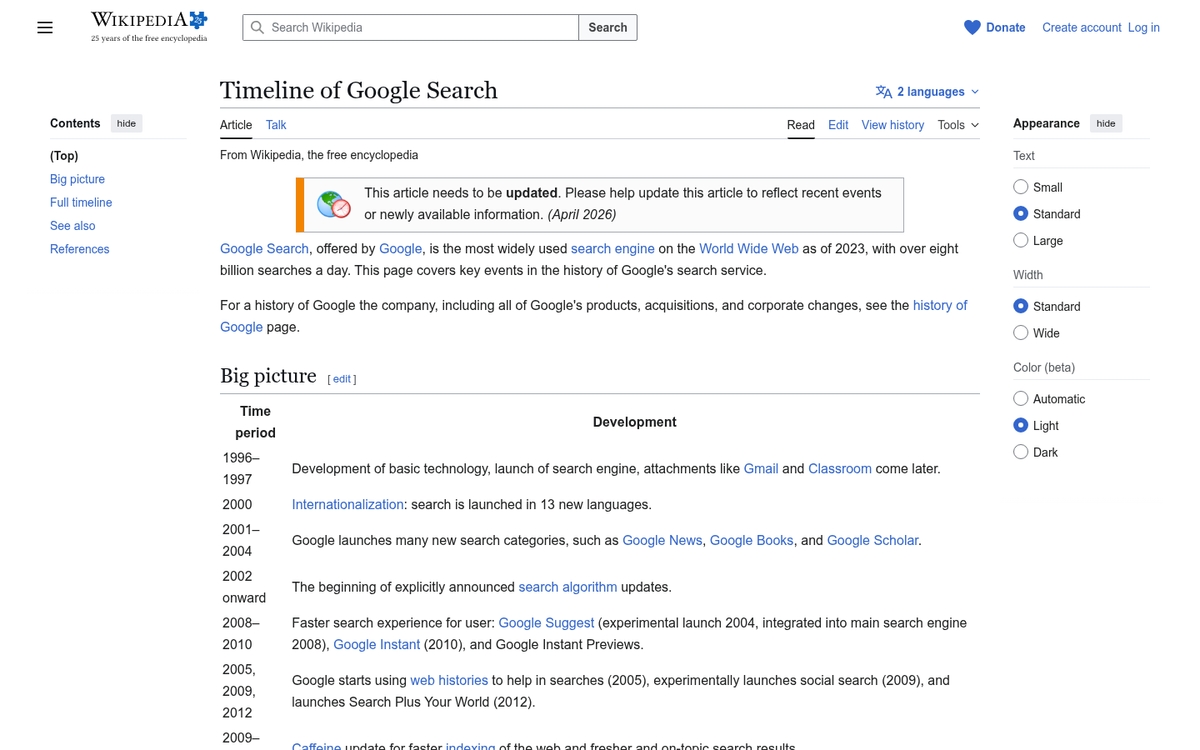

- Search engine submission was legitimate in the 1990s but became redundant as Google's crawling technology advanced significantly.

- Legitimate alternatives include earning inbound links and submitting XML sitemaps via Google Search Console for faster indexing.

- 90.63% of pages get zero organic traffic due to poor content and authority, not lack of submission services.

Problem 1: Who Uses Them?

"Them," in this case, being the search engines in question. If you were to stop 100 people on the street and ask them what search engine they use, over 90 of them would answer "Google." That's not a typo. Google now holds over 90% of the global search engine market share and handles over 60% of all U.S. search queries. That leaves fewer than 10 people, and of those, most would say Bing. A couple might say Yahoo. One might say DuckDuckGo, because they care about privacy. The last one has no idea what a search engine is and asks you if the puppy in their mind can lead you to buried treasure. If you conduct this poll, don't get into their van. Just a recommendation.

So when a company tells you they're going to submit to 100s or 1,000s of search engines on your behalf, you have a question to ask. That question is: who is actually using those search engines? Sure, you might be ranked #1 on WeirdTertiarySearch.com, but if your search volume through that engine is one person per year, what good is it doing you?

Problem 2: Who Cares About Submissions?

When was the last time you heard someone talking about submitting their site to Google for review? Okay, bad question. If you're thinking seriously about search engine submissions, you've probably been convinced they matter. They don't.

Google is the standard by which all other search engines are judged. Google has the most sophisticated algorithm, the most up-to-date search results, and the best index. They maintain this through a vast network of bots, web spiders, crawlers, and other synonymous pieces of technology. These bots are hard at work, 24/7/365, crawling the web and indexing everything. They monitor sites for changes and index those changes. They monitor sites for new links and crawl those links to discover new sites, and they index those sites. Google's John Mueller has even warned that using submission software "can lead to a tremendous number of unnatural links for your site," which can hurt your rankings rather than help them. In fact...

How Google Finds New Websites

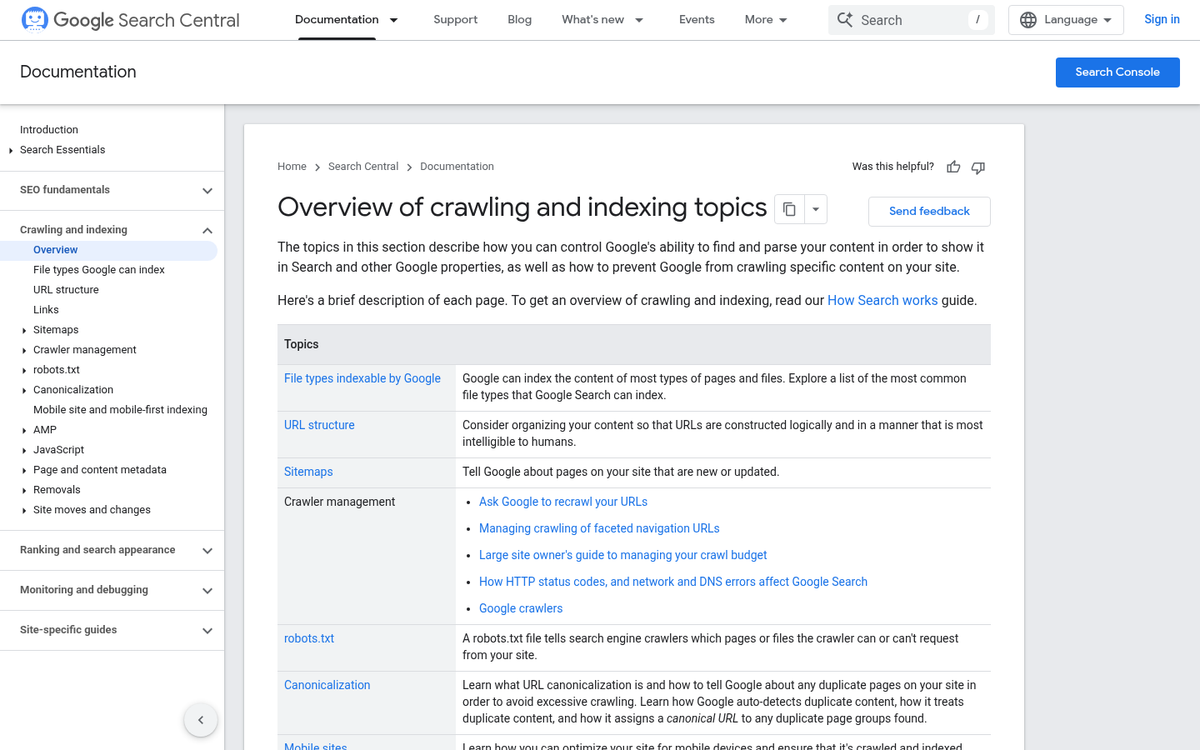

There are three phases to the search operation: crawling, indexing, and serving.

Crawling is the most basic step. Web crawlers trawl the Internet looking for pages that are either new to Google's index or have changed since the last visit. Whenever Google's bots look at a page, they look specifically for links. For each link, they work through a few questions.

- Is there a record of this link in the index?

- If so, has the destination page changed since the last time it was viewed?

    - If yes, re-index it.

    - If no, update the crawl date and move on.

- If the link does not exist in the index, check for robots directives.

    - If the link is marked nofollow, follow the link but do not pass PageRank through it.

    - If the destination page is marked noindex, crawl the page but do not add it to the index.

    - If neither applies, follow the link and record the contents of the destination page in the index.

Essentially, Google has a legion of robots searching the Internet for pages it hasn't seen before. When one is encountered, it is added to the index - the vast sum of all Internet pages discovered by the ubermind that is Google.
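To make the decision list above concrete, here is a minimal Python sketch of the same flow. It is a toy model, not Google's actual crawler: the `IndexEntry` class, the `process_link` function, the in-memory dictionary standing in for the index, and the use of a content hash to detect changes are all assumptions made for illustration.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class IndexEntry:
    """Hypothetical record kept for each indexed URL."""
    content_hash: str
    last_crawled: datetime


def fingerprint(html: str) -> str:
    # Assumption: a content hash is enough to tell whether a page changed.
    return hashlib.sha256(html.encode("utf-8")).hexdigest()


def process_link(index, url, html, *, nofollow=False, noindex=False, now=None):
    """Apply the decision list to one discovered link and return the action taken."""
    now = now or datetime.now(timezone.utc)
    digest = fingerprint(html)
    entry = index.get(url)

    if entry is not None:
        # Known URL: re-index only if the content changed since the last visit.
        changed = entry.content_hash != digest
        if changed:
            entry.content_hash = digest
        entry.last_crawled = now
        return "reindexed" if changed else "crawl date updated"

    if noindex:
        # The page was fetched, but it asked to stay out of the index.
        return "crawled, not indexed"

    # New URL: record it. A nofollow link still leads to discovery here,
    # but it passes no ranking credit.
    index[url] = IndexEntry(digest, now)
    return "indexed, no link credit" if nofollow else "indexed"
```

Calling `process_link` twice with the same URL and the same HTML only refreshes the crawl date on the second call. Nothing in this loop needs a submission from the site owner. It only needs a link that leads to the page.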

Indexing is the process of Google recording the contents of the site, assigning it a rank, and placing it in the search results. Depending on the quality, age, and a hundred other factors, this initial ranking can be low or high.

Serving is the process whereby Google displays results when you make a search. Whether a page is served for a given query depends on how relevant the site is to that query. This judgment is made through a combination of algorithmic qualitative decisions and, in some cases, outsourced human assessment through Google's Quality Rater program.
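If it helps to see how indexing and serving fit together, here is a deliberately tiny Python sketch. It builds an inverted index (a map from words to the pages that contain them) and answers a query by counting matching words. The function names and the scoring rule are assumptions for illustration only. Google's real ranking weighs far more signals than word overlap.

```python
import re
from collections import defaultdict


def tokenize(text):
    # Lowercase the text and split it into simple alphanumeric words.
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(pages):
    """Indexing: map each word to the set of URLs whose text contains it."""
    inverted = defaultdict(set)
    for url, text in pages.items():
        for word in tokenize(text):
            inverted[word].add(url)
    return inverted


def serve(inverted, query):
    """Serving: order URLs by how many distinct query words they contain."""
    scores = defaultdict(int)
    for word in set(tokenize(query)):
        for url in inverted.get(word, ()):
            scores[url] += 1
    # Highest score first; ties are broken alphabetically for stable output.
    return sorted(scores, key=lambda u: (-scores[u], u))
```

A page only shows up in `serve` if a crawler already fetched its text and handed it to `build_index`. That is the same dependency described above: serving depends on indexing, and indexing depends on crawling.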

You'll note that nowhere in this process does Google require you to manually submit your site. So where did this idea come from?

Search Submissions, Then and Now

Prior to September 1993, the World Wide Web was indexed entirely by hand. In the 1990s and stretching into the early 2000s, search engines were nowhere near as sophisticated as Google is today. Back then, they indexed pages in the hundreds of thousands, not the billions Google handles today, and their crawlers couldn't keep up with the web at scale. Instead, many engines and directories relied on people to submit their sites. That's right - over 30 years ago, search engine submission may have actually been a viable strategy.

Since then, the bots have taken over, making the idea of search submission largely obsolete. By the time you've submitted your site for consideration, Google has very likely already crawled and indexed it through other means.

That said, there are still two legitimate ways to help Google find your content faster.

Option 1: Earning links to your site. Web crawlers follow links, so if you want to be found, you need people to link to you. Any indexed site linking to yours is effectively vouching for you and helping Google discover you.
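To see why links matter so much for discovery, here is a rough Python sketch of how a crawler might pull links out of a page using only the standard library. The `LinkExtractor` class and `extract_links` helper are hypothetical names, and real crawlers handle many more cases (redirects, canonical tags, JavaScript-rendered links) than this does.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collect (absolute_url, is_nofollow) pairs from anchor tags."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr_map = dict(attrs)
        href = attr_map.get("href")
        if not href:
            return
        # rel can hold several space-separated tokens, e.g. "nofollow sponsored".
        rel_tokens = (attr_map.get("rel") or "").lower().split()
        absolute = urljoin(self.base_url, href)
        self.links.append((absolute, "nofollow" in rel_tokens))


def extract_links(base_url, html):
    parser = LinkExtractor(base_url)
    parser.feed(html)
    parser.close()
    return parser.links
```

Every URL this returns is a candidate for the crawl queue. If an indexed page links to you, your URL ends up in that list without you submitting anything.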

Option 2: XML sitemap submission via Google Search Console. Google loves the XML sitemap. A sitemap is a file that lists the URLs of the pages on your site that you want indexed, optionally with the date each one was last modified. You can generate one automatically and keep it up to date whenever you publish new content or update a page. Google can look at this file, quickly see any new pages or changes you've made, and crawl them accordingly. This is the right way to do it in 2026.
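Most CMS platforms and SEO plugins will generate a sitemap for you, but if you're curious what's inside one, here is a minimal Python sketch that builds it by hand. The `build_sitemap` function and the example URLs are made up for illustration. The `urlset`, `url`, `loc`, and `lastmod` elements and the namespace come from the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (url, lastmod) pairs; lastmod is a YYYY-MM-DD string."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, lastmod in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        ET.SubElement(entry, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    # Hypothetical pages; replace with your own URLs and dates.
    xml = build_sitemap([
        ("https://example.com/", "2026-01-15"),
        ("https://example.com/blog/first-post", "2026-01-20"),
    ])
    with open("sitemap.xml", "w", encoding="utf-8") as handle:
        handle.write(xml)
```

Upload the resulting file to your site and add its URL in the Sitemaps report in Google Search Console. A single sitemap file is limited to 50,000 URLs or 50 MB uncompressed, so larger sites split theirs into several files tied together by a sitemap index.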

Google's Manual Submission

So why, if there's little need for it, does Google still offer a URL inspection tool in Search Console? The answer is convenience and reassurance. If, for some reason, you publish a page and cannot generate a sitemap, and you can't get links from other sites, using the URL inspection tool in Google Search Console can nudge the crawlers in the right direction.

But here's the bottom line: according to Ahrefs research, 90.63% of web pages receive zero organic traffic from Google, and 5.29% receive fewer than 10 visits per month. That failure isn't caused by a lack of submission - it's caused by a lack of quality content, authority, and relevance. No submission service fixes that.

Whenever an SEO company tells you they'll submit you to hundreds or thousands of search engines, it's a red flag at best and a scam at worst. The only engines with any meaningful search volume are Google and Bing, and neither of them needs your help finding your site - they just need your site to be worth finding.