The E-E-A-T framework (short for Experience, Expertise, Authoritativeness, and Trustworthiness) sits at the core of how Google’s quality raters evaluate content, and it raises a genuinely tough question for anyone relying on AI tools right now. The “Experience” component alone poses a direct challenge to anything that’s never lived through the thing it’s describing.

The honest answer isn’t an easy yes or no, and that’s why this conversation keeps going in circles.

Plenty of people incorrectly assume AI-written or AI-assisted content automatically fails E-E-A-T. Others believe that clean writing and accurate information are enough to pass. Both camps are working from incomplete pictures.

What this post does is cut through the assumptions and look at what the evidence actually shows: how Google has positioned itself on AI content, where AI-generated material tends to fall short against E-E-A-T signals, and where it can legitimately hold its own when handled well. The goal isn’t to sell you on AI or warn you away from it - it’s to give you a clearer, more grounded view of where things actually stand.

Key Takeaways

- E-E-A-T is a qualitative framework used by human raters, not a direct ranking factor, making AI content’s impact nuanced.

- Pure AI content with no human input ranked noticeably lower and attracted far fewer backlinks than human-written content.

- Google’s 2025 guidelines tell raters to give the lowest possible quality rating to pages where almost all of the main content is AI-generated with little to no effort, originality, or added value.

- AI-assisted content edited by humans performed within 4% of fully human-written content in quality assessments.

- Meaningful human edits (adding citations, first-hand experience, and verified facts) are what actually satisfy E-E-A-T standards.

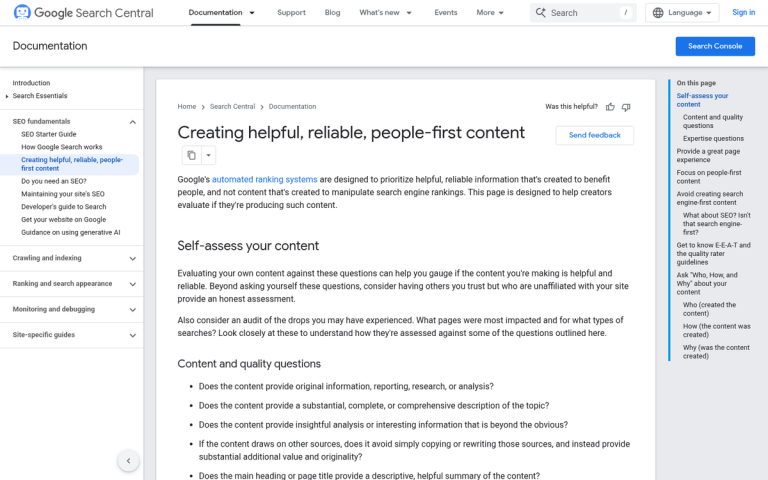

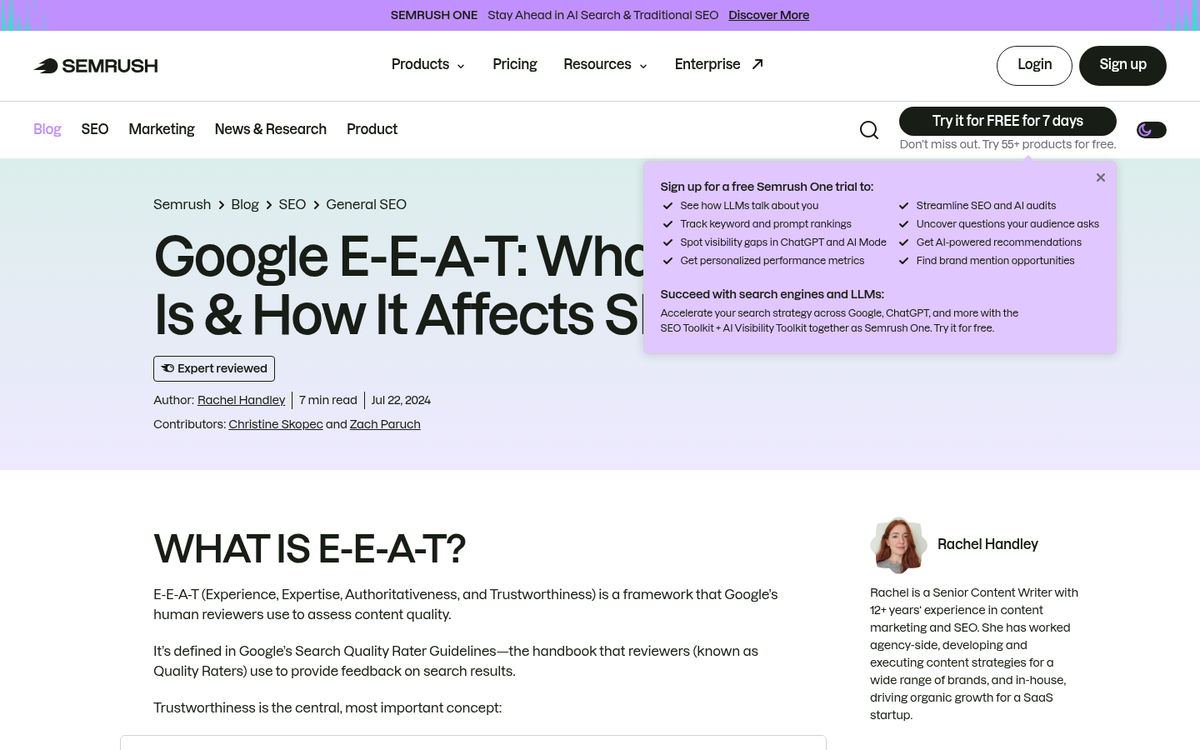

What E-E-A-T Actually Measures (And What It Doesn’t)

E-E-A-T stands for Experience, Expertise, Authoritativeness, and Trustworthiness, and each one evaluates something slightly different about a piece of content and the person or brand behind it.

- Experience asks whether the creator has first-hand knowledge of the topic.

- Expertise looks at whether they have the skills or credentials to speak on it.

- Authoritativeness is about reputation - how others in the space regard the source.

- Trustworthiness is the broadest signal of all, and Google treats it as the most important of the four. A site can have real expertise and still be untrustworthy if it has a history of misleading users.

Google does not have a direct E-E-A-T score that feeds into rankings. These are qualitative guidelines used by human Search Quality Raters to review pages and help Google understand what quality content looks like. The algorithm then uses its own signals - like backlinks, author history, and site reputation - to approximate those same properties at scale.

E-E-A-T is more of a framework than a ranking factor. That distinction matters quite a bit when you start thinking about AI content. If you want to strengthen these signals on your own site, there are practical ways to improve your E-E-A-T worth considering.

Search Quality Raters follow guidelines to rate pages, and their ratings shape how Google trains and refines its systems over time. They are not looking over your individual pages or pushing them up and down in search results. They provide feedback that helps the algorithm get better at recognizing honest, expert content.

What the algorithm actually measures is a set of proxy signals. These include things like who wrote the content, whether that author has a credible presence elsewhere on the web, whether authoritative sites link to the page, and how the site behaves. None of these signals says “this content was written by a human” or “this content was written by AI.”

That gap - between what the guidelines describe and what the algorithm can detect - is why the debate around AI content and E-E-A-T gets complicated. The guidelines were written with human creators in mind. The algorithm has to work with signals that don’t always map neatly onto that original intent.

What the Data Says About AI Content and Search Rankings

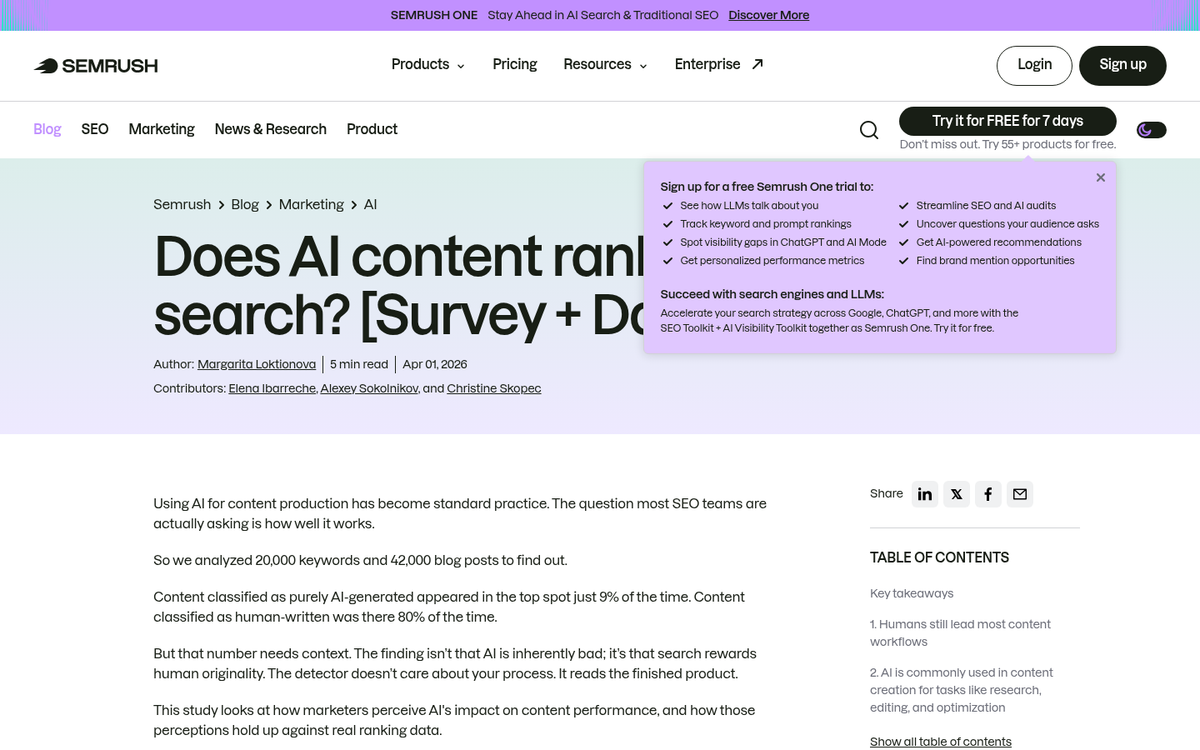

Several large-scale studies have looked at how AI-generated content actually performs in search, and the results are more nuanced than an easy pass or fail.

An Ahrefs analysis of 900,000 pages found that AI-assisted content - where a human writer used AI as part of their process - performed close to human-written content in rankings. The gap was small enough to be almost unremarkable. But pure AI content, with no actual human input, told a different story.

That same pattern showed up in a 16-month study tracking 4,200 articles. Pure AI content ranked noticeably lower on average and attracted far fewer backlinks over time. Backlinks matter here because they reflect how much others found the content worth referencing - which is an indirect measure of trustworthiness and usefulness.

A Semrush sample of 20,000 URLs added more texture to the picture. AI-assisted pages held their rankings reasonably well. But pages with no human editorial layer tended to see more volatility after algorithm updates. That volatility matters, because it suggests unedited pages are the most exposed when Google adjusts how it assesses quality.

The data points to something consistent across all three studies: the presence of a human in the process makes a measurable difference. The question is not whether AI was used at all, but how much human judgment shaped the final result.

| Content Type | Ranking Performance | Backlink Acquisition |
|---|---|---|
| Fully human-written | Strong and stable | High |
| AI-assisted (human-edited) | Close to human-written | Moderate to high |
| Pure AI (no human input) | Noticeably lower | Low |

None of that means AI content is automatically penalized. Google has said it evaluates content on quality - not on how it was produced. But the ranking data suggests that quality without human oversight is hard to sustain at scale.

The studies don’t prove that AI content fails - they show where it tends to fall short and why that shortfall lines up with what E-E-A-T rewards. If you’re sourcing articles through platforms like Textbroker, it’s worth reading about why low-quality content from services like that can hurt your SEO in ways that mirror these same patterns.

Why Google Draws the Line at “Almost All AI” Content

Google’s 2025 Search Quality Rater Guidelines are pretty direct on this point. Pages where almost all of the main content is AI-generated with little to no effort, originality, or added value should receive the lowest possible quality rating. That is not a soft warning - it’s a firm position built into how Google trains its human raters to review pages.

The March 2024 spam update put this position into practice. Google used it to target sites that had flooded the web with scaled, low-effort AI content and were ranking for it. Thousands of pages were hit - including some from well-established domains that had leaned too heavily on AI output without enough human involvement.

A lot of that content was technically accurate. It wasn’t wrong - it just wasn’t enough by the standards Google’s quality framework actually applies.

The first letter of E-E-A-T - Experience - is the one that volume-generated AI content usually fails on. An AI tool can pull together facts. But it hasn’t used the product, treated the patient, filed the taxes, or navigated the situation the post is about. That lived context is what E-E-A-T was built to reward.

Editorial judgment is another gap. A human writer decides what to include, what to leave out, and what angle serves the reader. AI content generated at scale tends to cover everything and prioritize nothing because no one made those calls along the way. This is part of why training a real person to write for your blog still holds lasting value.

Genuine authorship matters too. Google’s guidelines put weight on who is responsible for a piece of content. When there’s no named author, no demonstrated expertise and no sense that a person stood behind the work, that’s a signal that something is missing - even if every sentence reads as plausible.

This is why the volume play doesn’t hold up. Publishing hundreds of AI-generated pages might feel like a growth strategy. But Google’s quality raters are specifically trained to flag pages that look like no human shaped them.

Accuracy alone doesn’t satisfy E-E-A-T and it was never meant to.

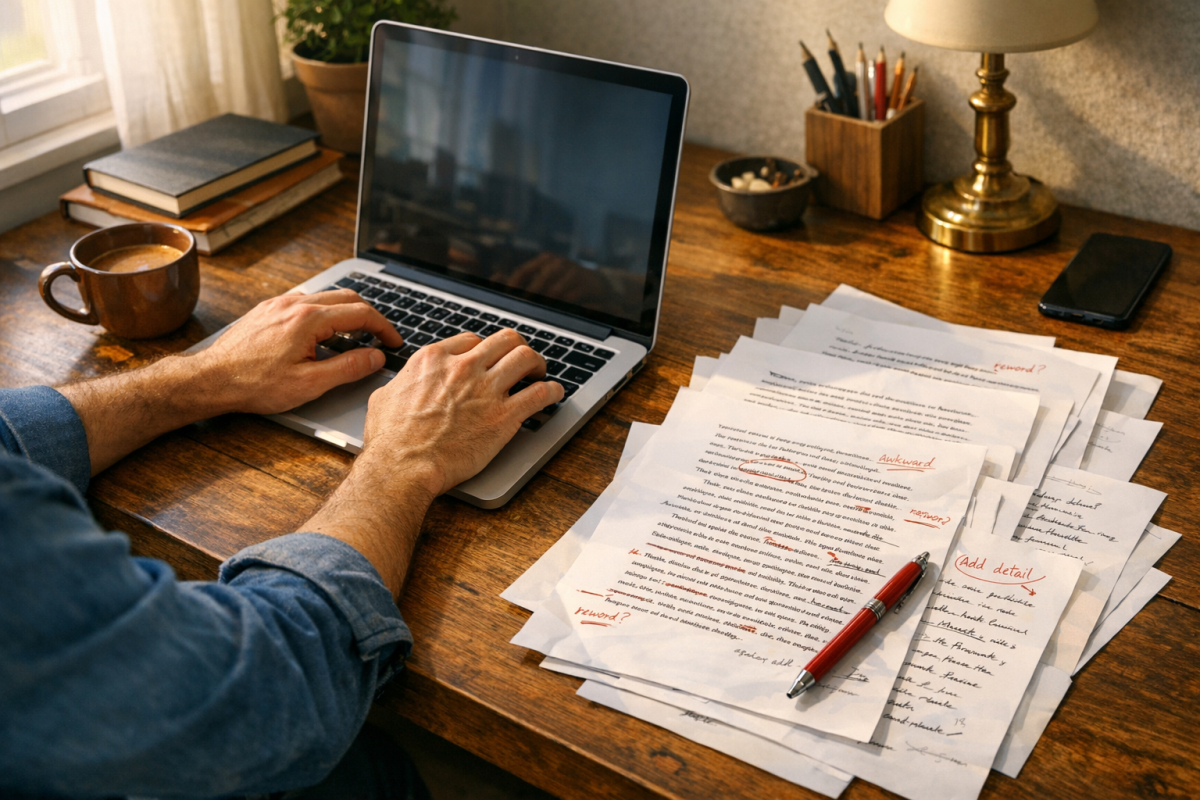

Where Human Editing Turns AI Content Into a Real Asset

One study found that AI-assisted content with human editing performed within 4% of human-written content in quality assessments. That gap is small enough to close, and it tells you something that matters: the editing part is not a formality - it’s where the work actually happens.

Not all edits carry the same weight for E-E-A-T purposes. Some changes are cosmetic - fixing awkward phrasing or shortening paragraphs. Those are fine for readability. But they don’t move the needle much. The edits that matter are the ones that inject something AI can’t generate on its own.

What meaningful editing actually looks like

Adding source citations is one of the most direct ways to strengthen a piece. AI tools can reference general knowledge. But a human editor can link to a study, a government data set, or a named expert. That makes claims verifiable instead of just plausible.

Real experience is another area where human involvement changes the character of a piece considerably. A sentence that says “in my testing, the results were inconsistent” carries a weight that a generated paragraph can’t replicate. First-hand accounts and observations are exactly what Google’s quality guidelines point to when they describe Experience and Expertise.

Checking claims is also an absolute must. AI models can state things with confidence that turn out to be outdated, incomplete, or just wrong. A good spell and grammar check process is only the beginning - factual accuracy requires a human eye.

Authorship signals matter too

Refining authorship signals is worth doing deliberately. A named author with a short bio, a link to their professional background, and a steady publishing history all affect how credible a piece looks to readers and search evaluators. These are things a human has to own and maintain.
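One common way to make those authorship details machine-readable is schema.org structured data. The sketch below is only an illustration: this post does not claim structured data affects rankings, and the `build_article_jsonld` helper, the author details, and the example.com URLs are all hypothetical placeholders. It assembles an `Article` object with a `Person` author and prints it as JSON-LD, which would typically sit inside a `<script type="application/ld+json">` tag on the page.

```python
import json


def build_article_jsonld(headline, date_published, author_name,
                         author_job_title, author_bio_url, profile_urls):
    """Return a schema.org Article dict with a named Person as author.

    Hypothetical helper for illustration; the field choices are an
    assumption, not a requirement stated by Google or by this post.
    """
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": author_job_title,
            # Link to the author's bio page on the same site.
            "url": author_bio_url,
            # Profiles elsewhere on the web that belong to the same person.
            "sameAs": list(profile_urls),
        },
    }


# Placeholder values; swap in a real author and real URLs.
markup = build_article_jsonld(
    headline="Does AI Content Meet E-E-A-T Standards?",
    date_published="2025-01-15",
    author_name="Jane Example",
    author_job_title="Senior Content Editor",
    author_bio_url="https://example.com/authors/jane-example",
    profile_urls=["https://example.com/profiles/jane-example"],
)

print(json.dumps(markup, indent=2))
```

The markup only restates what is already visible on the page. The bio, the professional background, and the publishing history still have to exist and be maintained by a real person.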

The distinction worth keeping in mind is between editing that makes content readable and editing that makes it credible. Readable content is the baseline. Credible content - with citations, experience, verified claims, and a human attached to it - is what meets the bar E-E-A-T sets.

AI gets you a working draft. What you do with it after that determines whether it qualifies as genuinely helpful content or just fills a page. If you’re looking at ways to build that credibility further, understanding what separates blogs that earn trust from those that don’t is a practical place to start.

AI Won’t Write Your Reputation - But It Can Help You Build It

That distinction matters more than most content strategies account for. The businesses winning in search aren’t picking between AI efficiency and human quality - they’re building workflows where both work together. The mindset change worth carrying forward is to stop asking if AI content is enough and start asking if your process is rigorous enough to make it so.

At BlogPros, that rigor is built into everything we do. If your content strategy isn’t holding up under today’s standards, there’s no better time to find out.

Start your free month with BlogPros - no contracts, no credit card, no commitment. Just content built from the ground up.