Key Takeaways

- Purely AI-generated content ranks #1 on Google only 9% of the time, versus 80% for human-written content, per Semrush.

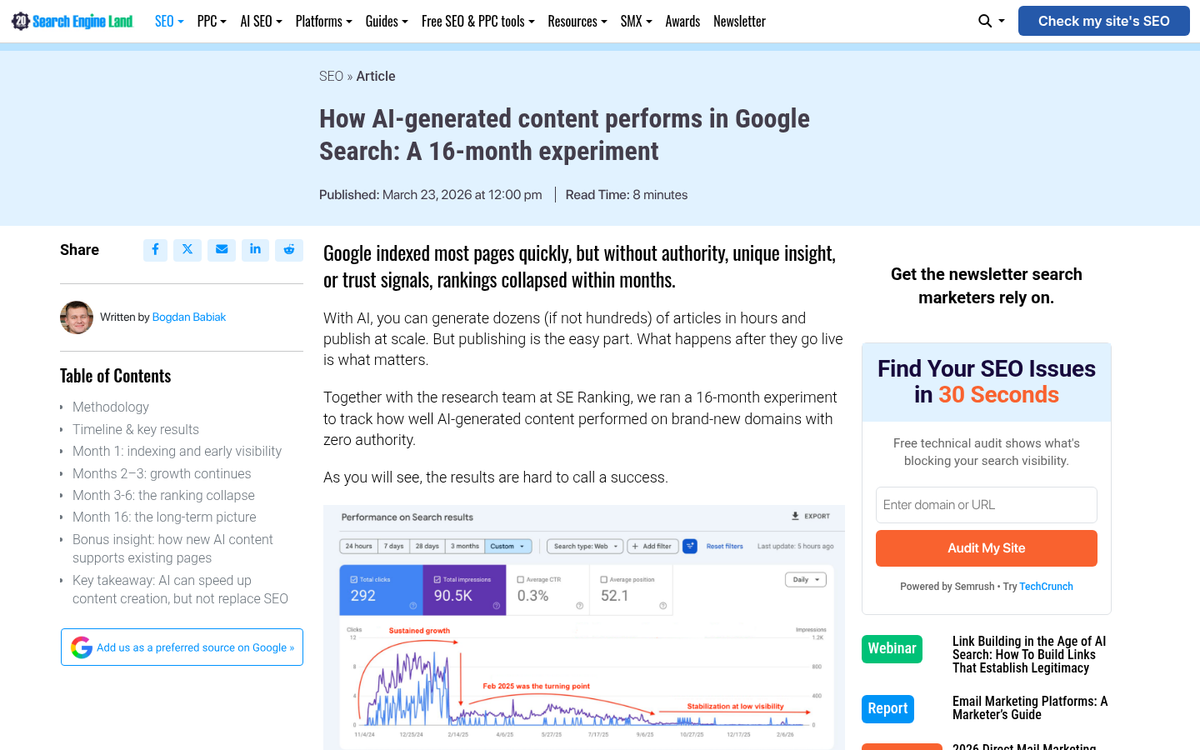

- Fully automated AI pages dropped from 28% to 3% in Google’s top 100 results over 16 months, showing rankings don’t hold.

- Ignoring reader comments due to automation damages community engagement, which is itself a Google ranking factor.

- Syndication risks include duplicate content issues, lower traffic, and higher-authority sites outranking your original content.

- AI works best as a drafting assistant with human editorial review, not as a hands-off replacement for genuine content creation.

Automation is a tool that, much like most tools, can be used for great benefit or great detriment to your blog. Used in moderation, it can save you quite a bit of time and energy. Used poorly, it can tank your search ranking and consequently your sales, with very little recourse short of a big site audit and review.

This has never been more relevant than it is today. With AI writing tools now widely accessible, the temptation to automate content production is higher than ever - and so are the consequences of doing it wrong.

Honestly, most of the time I prefer not to automate anything past basic scheduling. Anything more than that means losing control I’d rather not lose, in exchange for a bit of extra time. So rather than tell you what to automate, I’ll tell you what can go wrong and what to stay away from. That way you can either follow my approach or have a list of things to watch for.

The Perils of Automatic Posting

Automatically posting blog content comes with problems. I’m not talking about writing content by hand and scheduling it to post automatically, though; that’s fine. What I’m talking about is running an entire blog with a tool - whether that’s old-school spinning software or a modern AI content generator - that creates and posts content for your blog without actual human intervention.

There are two problems with this sort of blog management. The first has to do with the source and quality of the content you’re posting. The second has to do with how Google and your audience respond to it.

The Problem with Fully Automated AI Content

In 2026, the most common form of “automatic content” isn’t spun articles anymore - it’s AI-generated blog posts, published at scale with little or no human editing. Many site owners have gone all-in on this, using tools like ChatGPT, Claude, or AI blogging platforms to churn out dozens of posts per week.

The data tells a very clear story about how well this actually works:

- A Semrush analysis of 42,000 blog pages found that purely AI-generated content appeared in the #1 Google search position just 9% of the time, while human-written content held that top spot 80% of the time. That’s not a small gap - that’s a near-complete dominance by human-authored work.

- In a 16-month Search Engine Land experiment, only 3% of fully AI-generated pages remained in Google’s top 100 results as of early 2025 - down from 28% in the first month. Initial rankings don’t hold. Google gets better at identifying and devaluing this content over time.

- Userpilot pruned 847 blog posts - largely programmatic or AI-produced content with low conversions and low traffic - and saw a 16% traffic boost as a result. Less content, better content, better results.

This doesn’t mean AI has no place in your workflow. 70% of bloggers use AI for drafting content, but over 60% of them still add their own editorial review before publishing. That’s the distinction that matters: AI as an assistant versus AI as a replacement.

Where things go wrong is when site owners skip that human layer entirely. The content ends up generic, lacks genuine expertise or perspective, and fails to build any authority in Google’s eyes - especially as Google’s ranking systems increasingly reward content that shows first-hand experience and original thought.

Old Automation Problems Haven’t Gone Away Either

For those still running older auto-posting programs, the traditional dangers remain just as real:

- Content scraped from the web and reposted is still intellectual property theft. The original owner of the content can have your site taken down, or pursue legal action against you.

- Spun content - where scraped articles are run through synonym-replacement software - is still identifiable by Google. “Body of work” becoming “corpse of work” is a classic example of why automated synonym replacement fails. Google has long understood spintax patterns, and this approach will earn you a spam label or a near-zero content value score.

- Bulk content purchased from ultra-cheap content mills remains a risk. While platforms like Fiverr and Textbroker do have quality writers, content from the lowest price tier is still often barely readable and adds little SEO value.
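
To see why the “corpse of work” problem happens, here is a minimal sketch of context-blind synonym replacement. It is a toy, not a reconstruction of any real spinning tool: the one-entry `SYNONYMS` table and the `naive_spin` function are hypothetical names made up for illustration.

```python
# Hypothetical one-entry synonym table; real spinners use much larger ones,
# but they fail the same way because each word is looked up in isolation.
SYNONYMS = {"body": "corpse"}


def naive_spin(text: str) -> str:
    """Swap each word for its listed synonym, ignoring the surrounding phrase."""
    return " ".join(SYNONYMS.get(word.lower(), word) for word in text.split())


print(naive_spin("her body of work"))  # prints "her corpse of work"
```

The lookup has no idea that “body of work” is a fixed phrase, so it produces text no human would write. Output like that is exactly the kind of pattern that stands out.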

Losing Touch with Community

There’s also the problem of socialization and community. When you’re automatically running a blog via software or unreviewed AI output, what happens when a comment comes in? Someone reads a piece you “wrote” and they ask you a question about it - a question you can’t actually answer, because you didn’t write it and might not have even read it.

Absolutely nothing happens. You don’t know there’s a comment, because you aren’t checking the site. You’re not paying attention to it past the hope that it’s generating links with residual value.

Any time you have a community built around a blog - or a set of social media profiles - running on automatic posting, you’re taking a chance with that community. Many followers will simply leave. Many more will stop engaging. The loss of engagement itself is a ranking factor Google pays attention to. The whole thing spirals downward.

Facing the Lack of Intent

The problem with talking about the downsides of automatic posting is that most of the people doing it at scale don’t care. They’re aware of the tradeoffs. But they’re not trying to build an audience. They’re auto-generating content to populate shell sites used for tiered link building toward a main money site.

This black hat strategy is a non-stop cycle. Rankings for the money site rise as more sites are built, and drop as Google identifies and deindexes the network. You can’t fight back, because improving any individual shell site costs more than it’s worth. You just let them lapse and build more.

If that’s your goal, this post isn’t for you. I’ll stick with bloggers who have a blog and an audience, who are tempted to automate because it seems like an efficiency play. It isn’t, at least not at the scale or quality level most automation tools work at.

Syndication Troubles

One bit of automation that many don’t think of as automation is syndication. In most cases syndication is seen as a potentially helpful technique for bloggers. But it comes with dangers worth knowing.

The idea behind syndication is that you write a blog post and have it published across a network of partner sites. High-profile placements sound desirable - but the problem with syndication is that it’s not a guest post sending traffic your way. It’s a copy of a post already on your site. While Google does understand syndication and won’t always apply a duplicate content penalty when it’s implemented correctly, it can still trigger problems if the receiving site’s canonical tags are set up incorrectly.
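
If your syndication agreement says the partner’s copy should carry a `rel="canonical"` link back to your original post, you can spot-check it yourself. The sketch below is one rough way to do that with Python’s standard library, assuming you have already saved or fetched the partner page’s HTML. The names `CanonicalFinder` and `canonical_points_to` and the example URLs are made up for illustration, and the trailing-slash handling is a simplification.

```python
from html.parser import HTMLParser
from typing import Optional


class CanonicalFinder(HTMLParser):
    """Records the href of the first <link rel="canonical"> tag it sees."""

    def __init__(self) -> None:
        super().__init__()
        self.canonical: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attr_map = dict(attrs)
        # rel can hold several space-separated values; attribute values may be None.
        rel_values = (attr_map.get("rel") or "").lower().split()
        if "canonical" in rel_values:
            self.canonical = attr_map.get("href")


def canonical_points_to(html: str, original_url: str) -> bool:
    """True if the page declares a canonical URL matching original_url."""
    finder = CanonicalFinder()
    finder.feed(html)
    if finder.canonical is None:
        return False
    # Simplification: treat URLs that differ only by a trailing slash as equal.
    return finder.canonical.rstrip("/") == original_url.rstrip("/")


# Hypothetical partner page that points back at the original post.
partner_page = (
    '<html><head><link rel="canonical" href="https://example.com/my-post/">'
    "</head><body>Syndicated copy</body></html>"
)
print(canonical_points_to(partner_page, "https://example.com/my-post"))  # prints True
```

A `False` result means the tag is missing or points somewhere else, which is worth raising with the partner. Keep in mind that a canonical tag is a hint to search engines, so a correct tag lowers the risk rather than removing it.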

Syndication also doesn’t give you much traffic benefit because the full post is right there on the other site. Why would a reader click through to your site when they can read the whole thing where they already are?

And then there’s the ranking problem. A bigger, higher-authority site syndicating your content will usually outrank your own version of that post in search. Google goes by the SEO authority behind each site - not who wrote it first.

Syndication is fine used sparingly. A handful of quality placements can earn you helpful backlinks. But if every post you publish is syndicated, you have nothing driving traffic to your own site.

A Better Idea

If you want to cut back on your blogging workload without resorting to techniques that will damage your site, you have two options.

The first is hiring a team of vetted writers - this remains the gold standard for outsourcing content. You can vet contributors, hold them accountable to quality standards, and make sure every post lands with genuine expertise. Good writers in your niche bring real industry knowledge that no AI tool can replicate from a generic prompt. If you’re not sure where to start, check out our guide on how to hire a writer off Freelancer or Upwork.

The second, increasingly popular in 2026, is using AI as an assisted drafting tool, not a replacement. The 60%+ of AI-using bloggers who still review their drafts rely on it to speed up research, create outlines, or generate first drafts that a human writer or editor then shapes into something legitimately helpful. That workflow captures the speed benefit (cited by 70% of SEO teams as AI’s top benefit) without sacrificing the quality that actually ranks.

What doesn’t work is the hands-off strategy: prompt an AI, auto-publish, repeat. The data is unambiguous on this. Sites doing that are losing rankings - not gaining them. In fact, deleting low-quality content can actually increase your traffic if you’ve already gone down that path.
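
If you do want to prune, a first pass can be as simple as flagging posts that are both low-traffic and low-converting, then reviewing that list by hand. The sketch below shows the idea. The `PostStats` fields, the `prune_candidates` function, and the cutoff values are all hypothetical; pick thresholds that fit your own analytics.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PostStats:
    url: str
    monthly_visits: int
    conversions: int


def prune_candidates(
    posts: List[PostStats], min_visits: int = 50, min_conversions: int = 1
) -> List[PostStats]:
    """Return posts below BOTH cutoffs (the default cutoffs are made-up examples)."""
    return [
        post
        for post in posts
        if post.monthly_visits < min_visits and post.conversions < min_conversions
    ]


# Hypothetical analytics export.
posts = [
    PostStats("/ai-generated-listicle", monthly_visits=4, conversions=0),
    PostStats("/hands-on-tool-review", monthly_visits=900, conversions=12),
    PostStats("/niche-guide", monthly_visits=30, conversions=3),
]

for post in prune_candidates(posts):
    print(post.url)  # prints "/ai-generated-listicle"
```

Requiring both conditions is deliberate: a low-traffic post that still converts, like the niche guide in the example, is usually worth keeping. Treat the output as a review list, not an automatic delete list.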

Being able to guide the content production, apply editorial judgment, and leave execution to skilled humans or supervised AI tools - that’s the strategy that builds long-term value. It costs more than hitting “generate and publish,” but it also keeps your traffic growing instead of quietly eroding while you wonder why your rankings dropped.