AI Overviews are Google’s AI-generated answer summaries that appear at the top of search results, pulling information directly from web content to give users a synthesized response before they’ve clicked a single link. Since rolling out more broadly in 2024 and continuing to evolve through 2025 and into 2026, they’ve become one of the most consequential features in search - not because they drive clicks, but because they replace them. Getting featured in an AI Overview means visibility without the visit. Getting ignored means your well-optimized page might not factor into the answer at all.

This creates a genuine tension for content creators. Traditional SEO thinking, which NLP optimization is built on, assumes that helping machines understand your content better will earn you better rankings and more prominent placement. That logic held up reasonably well in a world of ten blue links. But AI Overviews don’t work like rankings. They pull from sources using different reasoning - one that seems to weight trust, structure, and topical depth in ways that don’t map cleanly onto conventional optimization checklists.

So the question worth asking is whether NLP optimization actually influences which content gets surfaced in these AI-generated answers, or if it’s largely incidental - a best practice that helps in other ways but doesn’t move the needle here. That’s what this post works through: what the evidence suggests, where the gaps in our understanding still exist, and what it means for how you approach content strategy in a search environment that keeps changing the rules.

Key Takeaways

- AI Overviews cite pages from positions below #5 roughly 47% of the time, meaning rankings and AI citations follow separate logic.

- Semantic completeness showed an r=0.87 correlation with AI Overview inclusion; high-scoring content was 4.2x more likely to be featured.

- Adding citations, statistics, and direct quotes improved source visibility in AI-generated results by over 40%, per GEO research.

- Sites using semantic structure appear in AI Overviews 43% more frequently than those without it.

- Over-optimizing for NLP signals can produce machine-readable but unhelpful content; genuine substance should take priority over checklist-driven formatting.

What AI Overviews Actually Look For (And Why It’s Not Just Rankings)

AI Overviews don’t pull exclusively from the top organic results. Research from Authoritas and Optimizely puts the overlap between AI Overview citations and top-10 ranked pages between 40% and 76%. That overlap is significant, but it also means a large chunk of citations come from pages that aren’t dominating the search results.

Research from Rich Sanger puts a finer point on this. Around 47% of pages cited in AI Overviews come from positions below #5 in organic search. That’s not a rounding error - it’s a pattern that suggests something about how Google’s AI layer evaluates content.
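If you want to check this pattern against your own tracking data, the calculation is simple. The sketch below is a minimal illustration, not the method used in the research cited above: the function name, the sample positions, and the choice to count untracked pages (`None`) as ranking below the cutoff are all assumptions made for the example.

```python
from typing import Optional


def share_cited_below(positions: list[Optional[int]], cutoff: int = 5) -> float:
    """Fraction of cited pages whose organic position is worse than `cutoff`.

    `positions` holds the organic rank of each cited page. None means the page
    was not found in the tracked results; this sketch assumes such pages count
    as ranking below the cutoff.
    """
    if not positions:
        return 0.0
    below = sum(1 for p in positions if p is None or p > cutoff)
    return below / len(positions)


# Hypothetical sample: organic positions of ten pages cited in AI Overviews.
sample_positions = [1, 2, 3, 4, 5, 7, 9, 12, 18, None]
print(f"{share_cited_below(sample_positions):.0%} of citations rank below #5")
```

Run it over the queries you track, and a share that stays well above zero tells you citations on your topics are not reserved for the top few results.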

The gap exists because rankings and AI citations answer different questions. A ranking algorithm weighs authority, backlinks, and relevance to decide which page deserves to be seen. An AI Overview is trying to build a response that’s accurate, complete, and easy to understand - so it pulls from wherever it finds the best material for that job.

A page can rank lower and still get cited, as long as it has something the AI needs to construct an answer. What matters is whether your content fills a gap that higher-ranked pages leave open.

That distinction changes how you think about optimization. A higher ranking won’t automatically get you into an AI Overview, and being in an AI Overview doesn’t guarantee strong organic placement either. The two can go together. But they run on separate logic.

That’s why the conversation around NLP optimization and answer engine strategies matters here. Structuring content so relationships between concepts are easy for a language model to interpret is a different job than traditional SEO, and the data suggests it may carry more weight for AI citation than page authority alone does.

How Semantic Completeness Became the Dominant Ranking Signal

When researchers look at what AI Overviews favor, semantic completeness rises to the top. It sounds technical, but the idea is simple. Content that is semantically complete covers a topic closely enough that a reader walks away with very few unanswered questions.

That means going past the main question to help with the related angles, the edge cases, and the nuances that come up around a subject. Word count is not the goal. The goal is leaving minimal gaps.

The data behind this is hard to ignore. An analysis of 15,847 AI Overview results found a correlation of r=0.87 between semantic completeness scores and AI Overview inclusion. Content that scored 8.5 out of 10 or higher on semantic completeness was 4.2 times more likely to get pulled into an AI Overview than content that scored lower. That’s a big gap, and it suggests something deliberate in how these systems evaluate content.

AI Overviews are built to answer questions directly. Google is unlikely to pull a source that covers only part of a topic when another source covers the whole thing. Completeness signals that the content is reliable enough to represent as an answer.

In practice, this looks like content that anticipates follow-up questions. If someone searches for how a process works, a semantically complete page explains the process, covers why it works that way, addresses what can go wrong, and connects it to related concepts the reader might not have thought to ask about yet.

Topic modeling tools can score your content for this. Tools that analyze entity coverage and topical depth give you a rough measure of how your content compares with what search engines associate with a given subject. It’s worth running your content through one to see where the gaps are. If you also want to strengthen how Google perceives your authority, improving your blog’s E-A-T score is a closely related step worth taking.
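To make the idea of a coverage score concrete, here is a rough sketch of a gap check. It is an assumption-heavy stand-in for what commercial tools do: it uses plain phrase matching instead of real entity recognition, and the function name, the draft text, and the list of expected terms are all hypothetical.

```python
import re


def coverage_gaps(text: str, expected_terms: list[str]) -> tuple[float, list[str]]:
    """Return (share of expected terms found in the text, list of missing terms).

    Matching is case-insensitive and whole-phrase; it does not handle plurals
    or synonyms, which a real topic modeling tool would.
    """
    if not expected_terms:
        return 1.0, []
    normalized = re.sub(r"\s+", " ", text.lower())
    missing = [
        term
        for term in expected_terms
        if not re.search(rf"\b{re.escape(term.lower())}\b", normalized)
    ]
    covered = len(expected_terms) - len(missing)
    return covered / len(expected_terms), missing


# Hypothetical draft and a hand-picked list of terms a reader would expect.
draft = "Schema markup and a clear heading hierarchy help search engines parse a page."
expected = ["schema markup", "heading hierarchy", "internal linking", "entity"]

score, missing = coverage_gaps(draft, expected)
print(f"coverage: {score:.0%}, missing: {missing}")
```

The missing list is the useful part. Treat it as a prompt for questions your page has not answered yet, not as a list of words to paste in.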

Semantic completeness is what separates content that gets referenced from content that gets skipped. And it’s a very different frame from the one most SEO practitioners have been working with for the past decade.

The Difference Between NLP Optimization and Traditional Keyword SEO

Traditional keyword SEO is built around frequency. The idea was to use the right words enough times so search engines would connect your page to a query. NLP optimization is built around meaning: the aim is to make the concepts on a page, and the relationships between them, clear to a language model.

One helpful way to see this difference is through entity recognition. NLP tools don’t just read words; they identify people, places, concepts, and the relationships between them.

Co-occurring terms matter here as well. Language models are trained to expect concepts to appear together within a topic. If those related concepts are missing, the content can read as thin or incomplete - even if it’s well-written on the surface.

Intent is the other big piece. Keyword SEO asked which words the user typed. NLP goes a step further and asks what the user actually wants to know. That distinction shapes how you structure information, which questions you answer, and how much depth you go into on any given point.

| Focus Area | Traditional Keyword SEO | NLP Optimization |
|---|---|---|
| Primary goal | Match keywords to queries | Communicate meaning to language models |
| Content structure | Keyword density and placement | Semantic relationships and topic coverage |
| How intent is handled | Implied through keyword choice | Addressed directly through question-and-answer framing |
| What gets rewarded | Repetition of target terms | Conceptual completeness and entity clarity |

None of that means keywords are irrelevant. They still help with indexing and basic relevance signals. But for AI Overviews, the bar is higher - your content needs to hold up as a source that a language model can extract a coherent, accurate answer from.

What the GEO Research Says About Citations, Stats, and Quotes

A study from researchers at Princeton, Georgia Tech, the Allen Institute for AI, and IIT Delhi took a close look at what they called Generative Engine Optimization, or GEO. Where traditional SEO is about getting pages to rank in a list of links, GEO is specifically about getting your content to appear inside AI-generated answers. It’s a different goal, and it turns out the strategies that work are different too.

The researchers tested a number of writing strategies to see which ones made sources more likely to get cited in AI responses. The results were pretty striking. Adding citations, statistics, and direct quotations improved source visibility by over 40% in AI-generated results.

That’s not a small bump - it points to something real about how AI systems evaluate content before pulling from it.

The likely reason is that AI models are trained to produce accurate and honest replies. When your content includes verifiable data or references to credible sources, it reads as more reliable to the model. You’re basically giving the AI something it can use without going out on a limb.

Quotations seem to play a similar role. A direct quote from a named expert signals that a person with knowledge said something. That evidence carries weight in a way that unsupported claims don’t - even if those claims are accurate. Using quotes in articles is worth thinking through carefully for this reason.

A lot of web writing skips citations and data because it feels academic or unnecessary. But if AI systems reward evidential writing, that habit could be worth reconsidering.

It doesn’t mean every paragraph should have a footnote - it means that when you make a factual claim, backing it up with a number or a named source could do more work than you’d expect. The GEO research frames this as a structural difference in how AI engines process content compared to traditional search crawlers.

Traditional search rewards links and relevance signals. AI engines appear to reward content that looks like it can be trusted to be accurate, and citations are one of the clearest ways to signal that. This connects to broader questions about how you structure your content to communicate authority at every level.

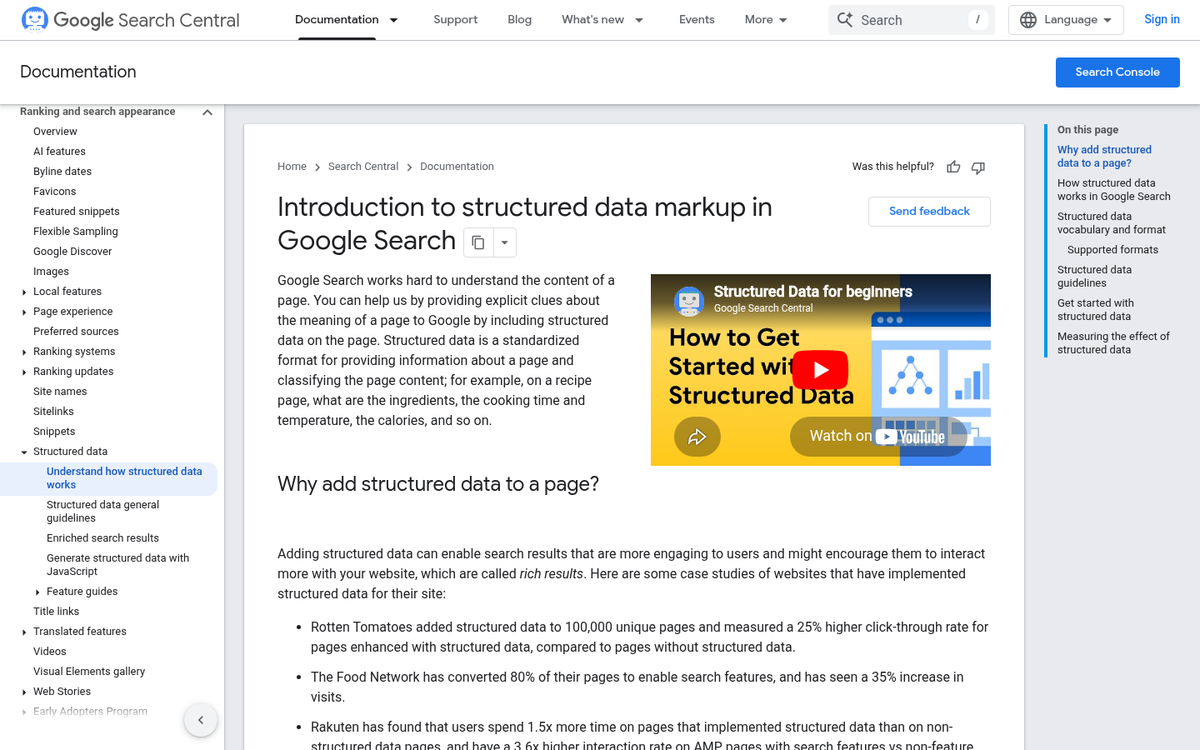

Semantic Structure and How It Affects AI Overview Frequency

Sites that use semantic structure appear in AI Overviews 43% more often than those that don’t. That’s a meaningful gap, and it makes the structural side of your content worth a closer look.

Semantic structure is about giving your content a logical shape that humans and AI systems can follow. Think of it as a page that answers questions in the right order, with each section clearly labeled so it’s easy to scan. Heading hierarchies are a big part of this - an H1 for the main topic, H2s for sections, and H3s for supporting facts within those sections.
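A heading hierarchy like this is easy to audit automatically. The sketch below uses only Python’s standard library and flags two common problems: a page without exactly one H1, and a heading that skips a level. The class and function names and the sample HTML are made up for illustration, and the two rules are a simplified assumption about what “good” hierarchy means, not a rule published by Google.

```python
from html.parser import HTMLParser


class HeadingAudit(HTMLParser):
    """Collects heading levels (1-6) in the order they appear in the page."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser passes tag names in lowercase, e.g. "h2".
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html: str) -> list[str]:
    """Return human-readable warnings about the page's heading structure."""
    parser = HeadingAudit()
    parser.feed(html)
    levels = parser.levels
    issues = []
    if levels.count(1) != 1:
        issues.append(f"expected exactly one h1, found {levels.count(1)}")
    for prev, cur in zip(levels, levels[1:]):
        if cur > prev + 1:
            issues.append(f"h{prev} is followed by h{cur}, skipping a level")
    return issues


# Hypothetical page fragment with a skipped level (h2 straight to h4).
page = "<h1>Guide</h1><h2>Basics</h2><h4>Detail</h4>"
for issue in heading_issues(page):
    print(issue)
```

An empty result means the outline is consistent. It says nothing about whether the headings themselves are clear, which still takes a human read.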

Schema markup factors in too. It gives search engines extra context about what a piece of content actually is: an article, a FAQ, a how-to guide. When Google’s AI systems pull content to generate an overview, structured markup helps them understand what they’re looking at and whether it fits the query.
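For a sense of what schema markup looks like, here is a small sketch that builds FAQ markup as JSON-LD using the schema.org `FAQPage`, `Question`, and `Answer` types. The helper name and the sample question are hypothetical, and whether any given markup influences AI Overview inclusion is not something this example can show.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical question and answer taken from a page's FAQ section.
markup = faq_jsonld(
    [("What is an AI Overview?", "An AI-generated summary shown at the top of search results.")]
)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The printed `<script>` block goes in the page’s HTML. The questions and answers in the markup should match text that is visible on the page.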

Well-defined sections matter just as much. Content broken into digestible, self-contained chunks is easier for AI to extract and reference. A paragraph that directly answers a question without preamble is far more likely to get pulled into an overview than one buried in a long block of text.

| Structural Element | What It Does | Likely Impact on AI Overview Inclusion |
|---|---|---|
| Heading hierarchy (H1, H2, H3) | Organizes content into a scannable structure | High |
| Schema markup | Labels content type for search engines | High |
| FAQ-style sections | Answers specific questions directly | High |
| Short, focused paragraphs | Makes content easier to extract | Medium to High |
| Internal linking with descriptive anchor text | Builds topical context across a site | Medium |

These elements work together instead of in isolation. A page with great headings but no schema and meandering paragraphs will still lose ground to one where everything is aligned. A strong structure is the foundation, and measurable content performance tends to follow from it.

The Click-Through and Visibility Gains Tied to NLP Content

There’s a tension worth naming here. Getting cited in an AI Overview is a win. But it doesn’t automatically send traffic your way. Google can summarize your content, answer the user’s question and move on - all without a single click going to your page. The more interesting question is whether NLP-optimized content changes that equation at all.

Data published by Onely in 2025 is worth noting here. Their research found that semantic SEO and NLP structuring are associated with a 26% increase in click-through rates.

Part of the explanation may lie in how well-structured content gets represented in search results. When a page uses natural language patterns that match how users actually phrase questions, the title and meta description are likely to be a better match for what was typed. That closer match builds enough trust to earn a click.

There’s also the snippet angle. NLP-optimized pages tend to produce cleaner, more coherent excerpts when Google pulls from them. A crisp, readable summary in an AI Overview drives curiosity instead of killing it - especially when the answer hints at more depth on the page itself.

It also helps that users who find a page through a semantically rich AI citation are further along in their thinking. They’ve already had part of their question answered, so if they click through they’re more engaged and likely to stick around. That downstream behavior matters for how search engines continue to look at a page’s value.

The 26% figure doesn’t mean every NLP-optimized page will see those gains. Traffic results depend on the search intent, the competition for that snippet and how well the page content follows through on what the AI Overview implies it has. The relationship between AI visibility and clicks is uneven and the type of query matters quite a bit.
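It also helps to be clear about what a 26% increase would mean in raw clicks. The sketch below assumes the figure is a relative lift on an existing click-through rate, which the source does not spell out, and the impression count and baseline rate are invented for the example.

```python
def projected_clicks(
    impressions: int, baseline_ctr: float, relative_lift: float = 0.26
) -> tuple[float, float]:
    """Return (baseline clicks, clicks after the lift).

    Assumes `relative_lift` multiplies the existing CTR (2% becomes 2.52%),
    not that it adds 26 percentage points.
    """
    before = impressions * baseline_ctr
    after = before * (1 + relative_lift)
    return before, after


# Hypothetical page: 10,000 impressions a month at a 2% click-through rate.
before, after = projected_clicks(impressions=10_000, baseline_ctr=0.02)
print(f"{before:.0f} clicks -> {after:.0f} clicks per month")
```

On a low baseline the absolute gain is modest, which is one more reason to treat the figure as a direction, not a forecast.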

Where NLP Optimization Falls Short and What to Do Instead

There’s a danger that chasing semantic scores and entity density gives you content that’s bloated, over-structured, and reads like it was written for a machine instead of a person.

That pattern shows up more than you’d think. Writers add more headers, more FAQ blocks, more keyword variations - and the content technically “looks” optimized while becoming harder to read. You end up with something that passes a checklist but loses the thread of what made it helpful.

The tension worth sitting with is this: optimizing for form versus optimizing for substance. Form is the structure - the semantic HTML, the entity mentions, the passage-level clarity. Substance is whether the content actually answers something well. AI systems are getting better at telling these apart, and readers always could.

The honest question to ask is whether your goal is to be “AI-readable” or legitimately helpful. In the best cases, those two things overlap. But when they don’t, helpful should win every time. A well-written explanation of a tough topic will have the entities, relationships, and structure that NLP models respond to - without any deliberate engineering.

Over-optimization also tends to flatten a writer’s voice. When every paragraph is reverse-engineered from a semantic tool, the content starts to feel like a template. That sameness is a problem because AI Overviews draw from sources that look credible and authoritative - and formulaic writing doesn’t read as either. Automating your blog content can make this problem worse, pushing you further toward template-driven output that lacks genuine authority.

The better frame is how to write something that a knowledgeable person would find helpful - not how to optimize for NLP. Structure it well, answer the question directly, and use language that a human would use to explain something to another human. The optimization tends to follow from that.

There’s nothing wrong with using NLP tools to audit your content after the fact. Just don’t let those scores drive the writing itself. If you’re thinking about making your blog work harder for you, focusing on genuine substance over surface-level optimization is one of the most durable strategies available.

So, Does NLP-Optimized Content Actually Win in AI Overviews?

The evidence above suggests it can, as long as the structure supports real substance instead of standing in for it. To get started, pick one high-value page and run it through a semantic gap analysis - look for related questions, subtopics, and entities your content is missing. Then review your formatting: are your answers easy to extract? Would Google’s systems know which paragraph to pull for a featured response? Small structural changes paired with genuine depth can move the needle faster than expected. If you’re also thinking about how long it takes to rank new content, understanding these structural signals is a good place to start.

That’s the work we do at BlogPros - and we’ve built our entire process around it. Every piece of content we produce combines AI-powered efficiency with human editorial oversight and full AEO optimization - like schema markup, semantic structuring, and the authoritative writing that answer engines actually cite. If your content is being passed over in AI Overviews, it’s a content engineering problem - and a solvable one. Start your free month with BlogPros and see what content built for the age of answer engines looks like - no contracts, no credit card, no commitment.