Google began testing this technology under the name Search Generative Experience (SGE) in May 2023, giving early access to a limited group of users through Search Labs. By May 2024, AI Overviews had rolled out broadly across the United States, moving from an experimental feature to a permanent fixture in search. The implications were immediate: some pages saw surges in brand visibility, while others, despite years of established authority, found themselves largely absent from the new format.

For anyone making content online, the main question quickly became: what actually gets cited? Google has been characteristically measured in its public guidance. But a pattern is emerging from the pages that appear inside AI Overviews. Content grounded in genuine, first-hand experience - testing, direct observation, authentic personal perspective - seems to hold an advantage. This isn't simply about ticking an E-E-A-T checkbox. It seems to be one of the clearest differentiators between content that gets surfaced and content that gets skipped.

I'll explore why first-hand experience may carry weight in AI Overview eligibility, what that looks like in practice, and how this signal can meaningfully shape the way you plan content going forward.

Key Takeaways

- Google's AI Overviews favor content with genuine first-hand experience, making it a key differentiator for citation eligibility.

- 96% of AI Overview citations come from sources with verified E-E-A-T signals, with a strong 0.81 correlation to citation eligibility.

- Over 99% of AI Overview citations come from pages already ranking in the top 10 organic results.

- Long-tail queries of eight or more words are seven times more likely to trigger AI Overviews, where experience-based content excels.

- Google's E-E-A-T verification became roughly 27% stricter in 2025, particularly for health, financial, and product review content.

What E-E-A-T Actually Means for AI Overview Selection

E-E-A-T stands for Experience, Expertise, Authoritativeness and Trustworthiness. Google uses this framework to judge whether a piece of content deserves to rank well - and now, to decide whether it gets pulled into an AI Overview.

Most people are familiar with the last three letters. Expertise, Authoritativeness and Trustworthiness have been part of Google's quality guidelines for years. The first "E" - Experience - is the newer addition, and it changes things in a meaningful way.

Experience asks a different question than expertise does. Expertise is about knowing a subject. Experience is about whether you've lived it. There's a difference between a financial writer who understands mortgage theory and a homeowner who has gone through the process themselves.

Google's systems are built to detect that difference in the text itself. They look for content where the author has done the thing they're writing about - used the product, visited the place, navigated the process. That content tends to include details that are hard to fabricate.

This matters quite a bit for AI Overviews specifically. One study found that 96% of content featured in AI Overviews comes from sources that carry verified E-E-A-T signals, with a correlation of r=0.81 between those signals and citation eligibility. That is a strong relationship, not a loose one.

The framework is closer to a filtering system for what gets surfaced in AI-generated answers than a content quality checklist. Within that system, first-hand experience has become an especially weighted factor.

That's because Google is increasingly trying to surface content that can add something a language model can't produce on its own. A model can summarize facts. But it can't replicate the perspective of a person who has actually been through something.

This framework is the foundation for everything else covered here. The next step is to look at how Google actually detects whether experience signals are present in a piece of content - and what that looks like in practice.

How Google Detects Genuine Experience Signals in Content

Google doesn't take your word for it if you claim to have first-hand experience with something - it looks for proof embedded in the content itself, and that proof tends to show up in very specific ways.

Author credentials are one of the more obvious tells. A byline linked to a person with a verifiable background in the subject gives Google something to work with. That's different from anonymous content or a generic "staff writer" attribution with no supporting context.

Credentials alone don't get you far, though. The content itself needs to carry the weight of experience. Think about the things that only come from actually using a product or going through a process. Specific friction points, unexpected outcomes, and observations that don't appear in the manufacturer's description - these are the things generic content misses.

Original data and statistics are especially helpful here. Content that includes research, survey results, or original findings tends to get cited in AI Overviews at a noticeably higher rate than content that synthesises what others have said. The likely reason is that original data signals a primary source instead of a secondary one. Google's systems are built to trace information back to its origin, and content that's the origin holds more weight.

There's also a pattern recognition element to this. Generic content has a recognisable shape - large claims, surface-level tips, and conclusions that could apply to almost anything. Experienced content breaks that pattern because it includes the specificity that doesn't come from research alone: the name of a setting buried in a software menu, a workaround that only becomes necessary in certain situations, a comparison based on side-by-side use.

Off-page tells matter too. When other credible sources reference your content or your author's work, that reinforces the on-page signals instead of replacing them. The two work together, and neither is enough on its own. If you're wondering why competitors show up in AI Overviews and you don't, the gap often comes down to exactly this combination of on-page and off-page experience signals.

The Connection Between Organic Rankings and AI Overview Citations

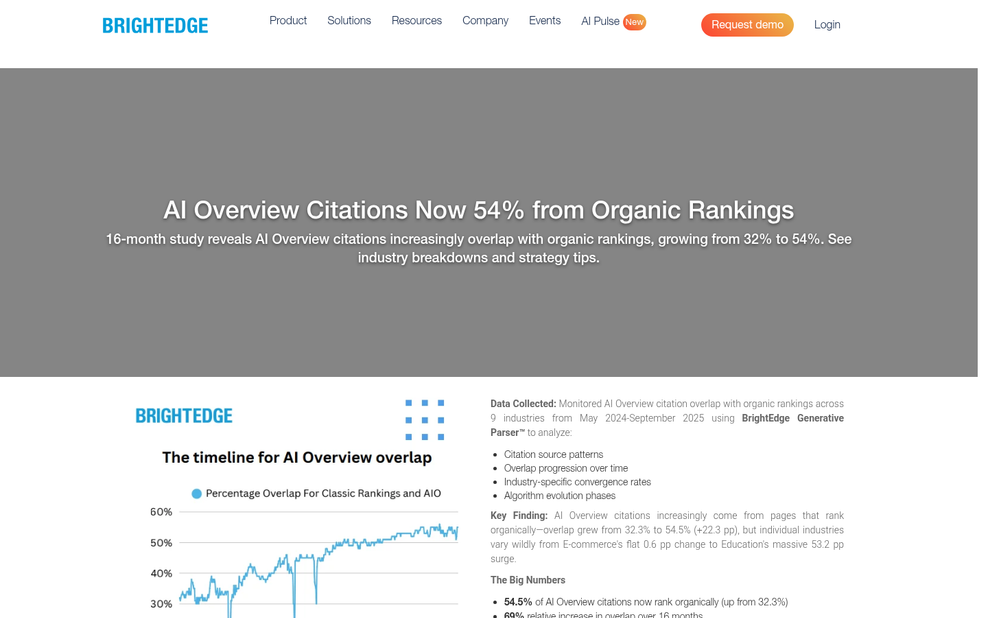

There's a striking pattern in how Google pulls content into AI Overviews. Studies show that over 99% of AI Overview citations come from pages already ranking in the top 10 organic results, with a 93.67% overlap between those two groups. That's not a small correlation - it's almost a one-to-one match.
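If you want to check how closely this pattern holds for your own keywords, the arithmetic is simple. The Python sketch below is a minimal illustration, assuming you have already collected two lists by hand or from a rank tracker: the URLs cited in an AI Overview and the URLs in the top 10 organic results for the same query. The function names and the example URLs are hypothetical, and this is not how the studies above computed their figures.

```python
def normalize(url):
    # Treat "https://example.com/page/" and "https://example.com/page" as the same page.
    return url.strip().rstrip("/")


def citation_overlap(cited_urls, top10_urls):
    """Return the share of cited URLs that also appear in the top-10 list (0.0 to 1.0)."""
    cited = {normalize(u) for u in cited_urls}
    top10 = {normalize(u) for u in top10_urls}
    if not cited:
        return 0.0
    return len(cited & top10) / len(cited)


# Hypothetical sample data for a single query.
cited = [
    "https://example.com/review/",
    "https://example.org/guide",
    "https://example.net/forum-thread",
]
top10 = [
    "https://example.com/review",
    "https://example.org/guide",
    "https://example.edu/study",
]

share = citation_overlap(cited, top10)
print(f"{share:.0%} of cited URLs also rank in the top 10")
```

Running this across a batch of queries, rather than a single one, gives a more useful picture of where your own niche sits.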

What that means in practice is that experience-backed content doesn't earn AI Overview citations directly. It earns strong organic rankings first, and those rankings are what appear to lead to citations. The path runs through traditional search performance, not around it.

First-hand experience content matters because it builds the depth and credibility that Google rewards in organic rankings. A page written by a person who has actually used a product, visited a place, or worked through a process tends to be more thorough and honest than one assembled from secondary sources. Google's systems are increasingly able to recognise that difference and rank accordingly.

This does raise a question for newer sites. If AI Overview citations depend on top-10 rankings, and top-10 rankings depend on the authority that takes time to build, then newer publishers face a longer road than established ones. It's not a closed loop, but it does mean that first-hand experience content needs to do more than signal authenticity - it needs to compete on every other ranking factor too.

The good news is that genuine experience content furthers that process. Pages that cover a topic from a lived perspective draw links, generate engagement, and hold rankings more steadily than thin or aggregated content. First-hand accounts are harder to replicate, which gives them staying power in competitive search results.

The relationship between rankings and AI citations makes organic search performance the gateway to AI visibility. Content that earns one tends to earn the other, and content built on experience has a structural advantage in earning both. That's worth remembering before treating AI Overviews as a separate target to chase.

Why Stricter E-E-A-T Verification Raises the Stakes in 2025 and Beyond

Google's approach to verifying E-E-A-T signals became noticeably stricter in 2025 - about 27% stricter compared to 2024, based on documented quality rater guideline updates. That means content that passed the bar last year may no longer meet the threshold.

The tightening is most visible in what are sometimes called "Your Money or Your Life" categories. Health pages, financial advice, and product review content face the closest scrutiny because the consequences of inaccurate or shallow information in these areas can directly affect someone's life or wallet. A product review written without firsthand use, or a health post with no traceable author credentials, now has less room to hide.

For product reviews specifically, the difference between a real-world assessment and a rewritten spec sheet has become much harder to paper over. Google is better at detecting when a review contains no original information - no mention of use cases, no personal observations, nothing that couldn't have come from the manufacturer's website. That content used to rank well enough to get seen. It's less likely to do so now. If you're struggling to get more reviews on your product, firsthand detail is what separates credible content from noise.

Solo creators and smaller content teams run into the same blind spots. They have topical coverage and keyword placement but forget to show who is behind the content and why that person is qualified to write it. An author bio that lists credentials without connecting them to the content's subject is one of the most common gaps.

Another gap worth mentioning is the absence of first-person details within the body of the content itself. An author page can say someone has ten years of experience in a field. But if the post reads like it was assembled from secondary sources, the on-page evidence doesn't support the claim. Both pieces need to align. This is also why spun content that passes Copyscape still fails to build authority - it lacks the original perspective that signals genuine expertise.

Finance content runs into a slightly different version of this problem. Regulatory and compliance concerns sometimes push writers to keep things generic, which strips out the personal perspective that E-E-A-T verification is actively looking for.

Long-Tail Queries, Specific Intent, and Where Experience Shines

Queries with eight or more words are seven times more likely to trigger an AI Overview than shorter searches. That is a sizable gap, and it tells you something helpful about what Google thinks AI Overviews are for.

Longer queries come from people who already know a bit about a topic and want a precise answer. Someone searching "how long does it take to recover from a tibial plateau fracture with physical therapy" is not browsing. They want an answer from a person who has been through it or worked with people who have.

Experience-based content has a natural benefit here. Generic, aggregated answers tend to fall short with these queries because they stay at a surface level. A person who has actually done something can speak to the facts that make a long-tail question worth asking.

Consider the content on your site and which pieces draw those longer, more deliberate searches. It's usually the how-to content, the troubleshooting guides, the personal walkthroughs, and the comparison pieces built around a scenario. These formats invite questions and they reward writers who have direct experience to draw on.

A short query like "running shoes" could mean almost anything. A longer query like "best running shoes for wide feet with overpronation under $120" has a goal. The person asking it wants depth, and they want it to apply to their situation.
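To see which of your own search queries cross that eight-word line, a few lines of Python are enough. This is a rough sketch: the function name `is_long_tail` is made up for illustration, and splitting on whitespace is only an approximation of however the underlying research counted words.

```python
def is_long_tail(query, min_words=8):
    """Return True when a query has at least `min_words` whitespace-separated words."""
    return len(query.split()) >= min_words


# Example queries taken from this article.
queries = [
    "running shoes",
    "best running shoes for wide feet with overpronation under $120",
    "how long does it take to recover from a tibial plateau fracture with physical therapy",
]

for q in queries:
    label = "long-tail" if is_long_tail(q) else "short"
    print(f"{label:>9}: {q}")
```

Feeding in a query export from your analytics or search console data is a quick way to find the pages already attracting these longer searches.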

Experience-based content satisfies that need in a way that pulled-together summaries can't. When a writer has actually tested something, dealt with a constraint, or made a choice in the same circumstances, the answer carries details that a generic summary doesn't have.

The content most likely to earn an AI Overview placement for long-tail queries is the content written closest to the experience itself. The more specific the question, the more that proximity to the experience matters. Tools like a People Also Ask outline generator can help you map out those specific angles before you write.

Practical Ways to Signal First-Hand Experience Across Your Content

There are some easy things you can do to make your experience visible on the page.

Start with process facts. If you tested a product, describe the steps you took and what you saw along the way. Readers and AI systems alike respond to specifics like "after two weeks of daily use" or "when I tried this on a low-powered device, the results were noticeably slower." These facts are hard to fake.

Original findings carry weight too. If you ran your own numbers, grabbed feedback, or came to a conclusion that's different from what everyone else is saying, write that down. A personal observation framed as your own is more credible than one that just echoes what already exists online.

Your author page is part of this picture as well. It should list credentials in plain language: what you've done, what you've built, and what you've worked on. A sparse or generic bio does nothing to support the content you've written.

The table below shows the difference between weak and strong experience tells so you can see where your content might need some work. If you're unsure whether your writing reads as genuinely human, it helps to know the biggest tells that content was written with Claude - many of the same patterns apply to AI-generated text more broadly.

| Content Element | Weak Signal | Strong Signal |
|---|---|---|
| Product review | Lists features from the manufacturer | Describes personal use over a set time period |
| How-to guide | Generic step-by-step with no context | Includes what went wrong and how you fixed it |
| Opinion piece | Restates common views without a position | Presents a conclusion drawn from your own research |
| Author bio | No relevant background listed | Names specific projects, roles, or direct experience |

Content that draws on lived experience is not about adding more words - it's about putting the right facts in the right places so your perspective comes through as clear and traceable.

First-Hand Experience Isn't a Trend - It's the New Table Stakes

Before adding anything new to your content library, audit what already exists. Look for pieces that describe a process without ever having followed it, compare products the writer never tested, or recommend services based on aggregated opinion instead of direct use. These are the experience gaps that quietly suppress eligibility - not because the information is wrong, but because nothing in the content confirms it was lived. Closing those gaps in existing content will deliver more results than making something new from scratch.
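A first pass at that audit can be automated, at least crudely. The Python sketch below flags pages whose body text contains no obvious first-person experience phrasing. Everything in it is an assumption made for illustration: the phrase patterns, the function names, and the sample pages are hypothetical, and a regex match says nothing about how Google actually evaluates a page. Treat the output as a shortlist for a human to review, not a verdict.

```python
import re

# Hypothetical phrase patterns; tune them for your own niche and writing style.
EXPERIENCE_PATTERNS = [
    r"\b(I|we) (tested|tried|used|measured|installed|bought|visited)\b",
    r"\bafter \w+ (days|weeks|months|years) of\b",
    r"\bin my (experience|testing|case)\b",
]


def count_experience_markers(text):
    """Count how many times any of the patterns above appears in the text."""
    return sum(
        len(re.findall(pattern, text, flags=re.IGNORECASE))
        for pattern in EXPERIENCE_PATTERNS
    )


def flag_experience_gaps(pages, min_markers=1):
    """Return the URLs whose body text has fewer than `min_markers` matches.

    `pages` is a dict mapping each URL to its body text.
    """
    return [
        url for url, body in pages.items()
        if count_experience_markers(body) < min_markers
    ]


# Hypothetical sample content.
pages = {
    "/blender-review": "After two weeks of daily use, I tested it on frozen fruit.",
    "/blender-specs": "This blender has a 1200-watt motor and three speed settings.",
}

print(flag_experience_gaps(pages))
```

Pages that come back flagged are not necessarily weak, but they are the ones worth reading first with the weak and strong signals above in mind.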

The trajectory here is clear. As AI search continues to tighten the signals it uses to evaluate credibility, content that can't show firsthand knowledge will face compounding disadvantages - lower rankings, fewer citations, and diminishing visibility in the surfaces that matter most. The writers and businesses that treat experience as a content asset - not an afterthought - are the ones best positioned for what comes next.