Key Takeaways

- Keyword density is not a confirmed Google ranking factor; in one SpyFu study, top-ranking posts averaged only 2.5 keyword uses per 1,000 words.

- Google’s semantic search and AI integration mean content is evaluated for meaning and helpfulness, not exact keyword repetition.

- There is no single correct keyword frequency; use keywords naturally as your writing requires, without obsessive counting.

- Creating multiple similar posts targeting slight keyword variations backfires, as Google recognizes and devalues duplicate-topic content.

- Providing genuine value around your topic matters most; thin or keyword-stuffed content, whether AI-generated or human-written, performs poorly.

Keywords are still very important to modern-day SEO, but not in the way you might think. Part of the reason people get the wrong impression is that they read SEO advice online without paying attention to when it was published.

Forgive me while I go off on a slight tangent before we’ve even started. The web is a great place, full of interesting information on every subject imaginable. One of the best parts of it is that, for the most part, nothing ever disappears. You’ll find the exact specifications for a product that hasn’t been manufactured in 15 years. You’ll find ancient blogs and forums. Even if a page is no longer live, archives like the Wayback Machine often keep a cached copy.

At the same time, this hurts modern readers who aren’t paying enough attention. In a fast-moving field like SEO, changes can be big and abrupt. Techniques that worked back in, say, 2010 no longer work. If you’re reading an old post about how to get ahead and it’s giving you advice based on the internet of the early 2000s, you’re in for a bad time. Many of those techniques don’t just fail; they can irrevocably damage a site’s potential.

I bring this up because the way keywords are used in content has changed quite a bit over the last decade and a half, and out-of-date advice on how to use them is likely to hurt you. It won’t be devastating unless you lean into keyword stuffing or something similar, but it won’t help you either.

Keyword Density

Keyword density is the idea of using your target keyword a set number of times relative to the length of the post. For example, you might aim for a keyword density of 4 per thousand words. In a 1,000-word blog post, that means mentioning your exact keyword four times.
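If you want to see what these numbers mean in practice, density is simple arithmetic: count how often the exact phrase appears and divide by the post’s word count. Here’s a minimal Python sketch. It assumes a rough word count (runs of letters, digits, apostrophes and hyphens) and treats density as keyword uses divided by total words; real SEO plugins may tokenize and count differently, and the function names are just illustrative.

```python
import re


def count_phrase(text: str, phrase: str) -> int:
    """Count case-insensitive, whole-word occurrences of an exact phrase."""
    # Allow any run of whitespace between the words of a multi-word phrase.
    words = phrase.split()
    pattern = r"\b" + r"\s+".join(re.escape(w) for w in words) + r"\b"
    return len(re.findall(pattern, text, flags=re.IGNORECASE))


def word_count(text: str) -> int:
    """Rough word count (an assumption): runs of word chars, apostrophes, hyphens."""
    return len(re.findall(r"[\w'-]+", text))


def keyword_density(text: str, phrase: str) -> tuple[float, float]:
    """Return (uses per 1,000 words, density as a percentage of all words)."""
    total = word_count(text)
    if total == 0:
        return 0.0, 0.0
    uses = count_phrase(text, phrase)
    per_thousand = uses * 1000 / total
    percent = uses * 100 / total
    return per_thousand, percent


# A stand-in 1,000-word "post" that uses the keyword four times.
sample = "pizza " * 4 + "filler " * 996
print(keyword_density(sample, "pizza"))
```

For that 1,000-word sample with four uses, `keyword_density` reports 4.0 uses per thousand words, which works out to a density of 0.4%.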

You’ll still see this concept referenced across SEO tools and content platforms. Yoast, one of the most commonly used WordPress SEO plugins, recommends a keyword density between 0.5% and 3%. Writesonic similarly recommends 1-4 keywords per page at a rate of roughly 1-2 keywords per 100-150 words, putting its recommended range at 0.5%-2%. These are soft guidelines, not hard rules baked into Google’s algorithm.

Four per thousand is actually a very light density. I’ve seen requests for a density of 4 per 250 words or more, which edges dangerously close to keyword stuffing and comes with a related mistake.

See, a single keyword may actually be a phrase: a long-tail target keyword made up of as many as half a dozen individual words. When you use one word five times in 500 words, it can feel a little crowded, but it’s not too bad. If you use a six-word phrase five times in 500 words, it becomes quite a bit more intrusive.

Part of the reason is that long-tail phrases are harder to work naturally into sentences.
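The arithmetic behind that intrusiveness is easy to check. A rough measure (my own illustration, not a standard SEO metric) is the share of the post’s words taken up by the repeated phrase: uses × words in the phrase ÷ total words. This sketch applies it to the one-word and six-word cases above.

```python
def share_of_words(uses: int, phrase_words: int, total_words: int) -> float:
    """Percentage of a post's words taken up by repeated keyword phrases.

    Hypothetical illustration: it ignores where the phrases fall in the text.
    """
    if total_words <= 0:
        raise ValueError("total_words must be positive")
    return uses * phrase_words * 100 / total_words


# Five uses in a 500-word post, with a one-word vs. a six-word keyword.
single_word = share_of_words(uses=5, phrase_words=1, total_words=500)
six_word = share_of_words(uses=5, phrase_words=6, total_words=500)
print(f"one-word keyword: {single_word:.1f}% of the text")
print(f"six-word keyword: {six_word:.1f}% of the text")
```

Five uses of a single word take up 1% of a 500-word post; five uses of a six-word phrase take up 6%, which is why the long-tail version reads as so much more crowded.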

The fact is, keyword density is not a confirmed ranking factor in SEO. Google has said as much since at least 2011, when it was already publicly debunking the idea that mentioning a keyword a certain number of times would help rankings. More recently, a SpyFu study of 42 articles across 5 SERPs found that top-ranking posts used their target keyword only 2.5 times per 1,000 words on average, and 12 of the posts didn’t use the exact keyphrase at all yet still ranked on page one. Keyword usage should be natural. You can tell that this post is about the number of times you should use a keyword in a blog post, but you don’t see me hammering an exact phrase over and over. And you can still find this post ranked in Google search for the concept.

Semantic Search and AI-Powered Understanding

Part of the reason this works is what’s called semantic search. Semantic search parses the meaning of language instead of just its vocabulary, so queries can be written in natural language instead of a “search engine dialect.”

For example, if you wanted to find out what the best pizza place in Chicago is, you have two ways of asking Google. You might type in an optimized query, something like “Best pizza Chicago” - optimized based on your years of using the internet and your knowledge of how search engines used to work. In the past, if you used extraneous words, the search engine would work to include sites that used those words and would serve you sub-par results because of it. If you used different words, you might miss the main target of your query because the information didn’t use that word.

This created a world where, as a searcher, you had to pare your query down to the basic keywords you wanted to find. At the same time, any marketer who wanted you to find them had to guess which query you would use (educated guessing via keyword research) and then use that exact keyword. It was a back-and-forth exchange in a sort of search language.

Older users, used to asking questions directly, had a harder time with search engines. They would type in full questions, like “what is the best pizza place in Chicago?” With the old style of search parsing, they would only get sites that used that exact phrase, which weren’t necessarily the most valuable results.

These days, if you type a full question into Google, you’ll get accurate and helpful results. It presents you with the websites that answer your question, even if those websites don’t contain your exact words.

This has accelerated dramatically. Google’s integration of large language model technology into its search systems (most visibly AI Overviews, which rolled out broadly in 2024 and kept expanding through 2025 and 2026) means the engine is now doing something closer to genuine reading than simple keyword matching. It’s looking at whether your content actually answers the question being asked, not whether you’ve repeated a phrase enough times. Voice search, which has been mainstream for years through assistants like Siri and Google Assistant, pushed search toward natural language processing long before AI Overviews arrived.

The Modern Way of the Keyword

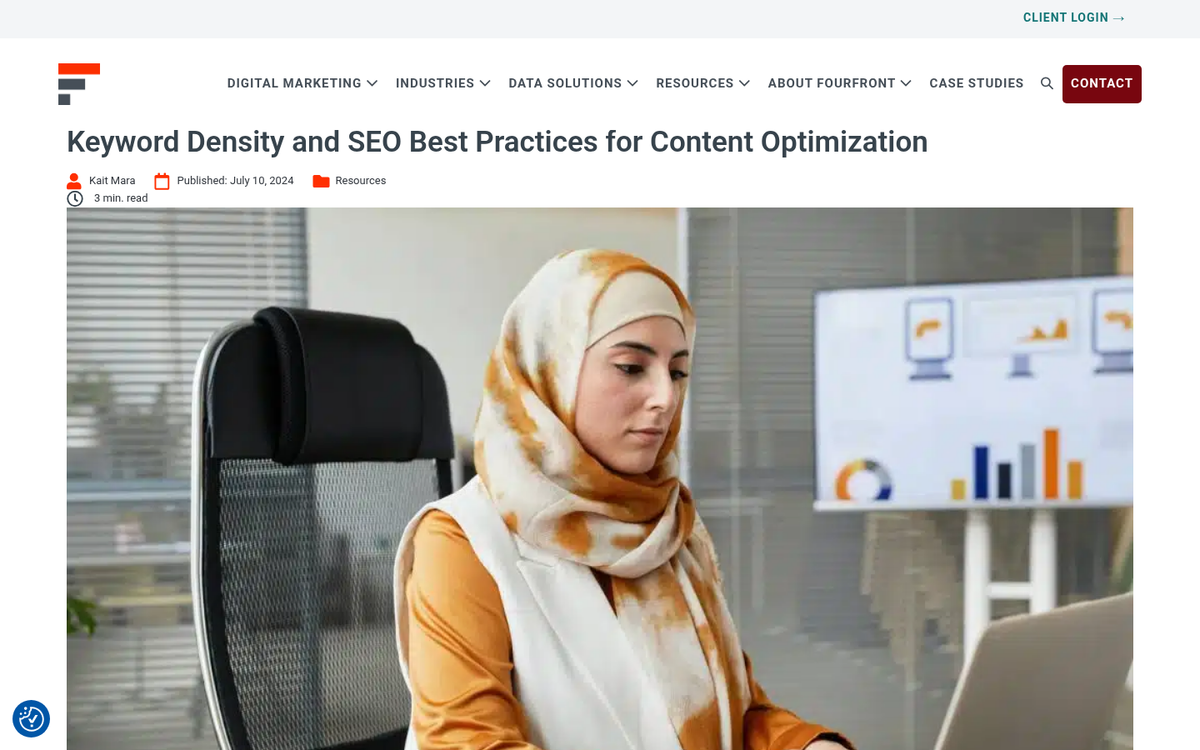

Modern keywords are not meant to be an exact science. Keyword research is great, and it still works as a way to get ideas and a core focus. But keywords aren’t the be-all and end-all of SEO they used to be.

Rule #1 with keywords is that you shouldn’t worry too much about close variations. There are two facets to this. The first is that you don’t need to remove variations from your articles in an effort to keep them pure. All you’d accomplish is making it a little harder for people who search with less common turns of phrase to find you. You don’t need exact phrases to show up in search, but having them helps a little.

The flip side is that you don’t need to work to include every one of the variations you can think of. Google understands language well enough to know synonyms and will be able to find helpful articles even without the presence of those keywords. If you write a post about great pizza joints in Chicago and someone runs a search for the best pizzerias in Chicago, your post will still show up because Google understands that pizzeria and pizza joint are the same.

One word of caution: you can’t get away with creating a dozen posts about the same topic with slightly different keywords. Google will see these very similar posts on your site, and they will all become less helpful in the eyes of the search engine. If you wrote two top 10 articles about pizza in Chicago, one about pizzerias and one about pizza joints, and both list the same 10 places, Google knows what you’re doing. At least one of the posts will be more or less ignored.

Rule #2 with keywords is that you don’t need to obsess over density. If you’re counting the exact number of times a keyword appears in your post for any reason other than making sure you haven’t accidentally stuffed it, stop and rethink your strategy. That said, there are some loose guidelines worth knowing. One common recommendation is to use your keyword roughly once every 100 words for shorter posts in the 500-700 word range and to dial that back to around once every 300 words for longer content. Yoast’s 0.5%-3% range is a basic sanity check. The important phrase there is “sanity check”: it’s a way of catching obvious problems, not a formula for ranking success.
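If you do want a quick automated sanity check, those rules of thumb fit in a few lines. This is an assumption-laden illustration, not anything Google uses: it applies the once-per-100-words guideline to anything up to 700 words (including posts under 500, which the guideline doesn’t strictly cover), once per 300 words beyond that, and checks density against a Yoast-style 0.5%-3% band. The function names are hypothetical.

```python
def suggested_uses(total_words: int) -> int:
    """Loose target count from the rule of thumb; not a ranking formula.

    Assumption: ~1 use per 100 words up to 700 words, ~1 per 300 beyond that.
    """
    if total_words <= 0:
        return 0
    words_per_use = 100 if total_words <= 700 else 300
    # Suggest at least one use so the post keeps its main focus.
    return max(1, round(total_words / words_per_use))


def passes_sanity_check(uses: int, total_words: int,
                        low: float = 0.5, high: float = 3.0) -> bool:
    """True if density (uses as a % of words) falls inside the low-high band."""
    if total_words <= 0:
        return False
    density = uses * 100 / total_words
    return low <= density <= high


for words, uses in [(600, 6), (1500, 5), (500, 20)]:
    ok = passes_sanity_check(uses, words)
    print(f"{words} words, {uses} uses: suggested {suggested_uses(words)}, "
          f"sanity check {'passes' if ok else 'fails'}")
```

Notice that the two guidelines don’t quite agree: one use per 300 words works out to about 0.33%, below the 0.5% floor, so a 1,500-word post following the first rule fails the second. That’s one more reason to treat these numbers as sanity checks rather than targets.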

The number one job of a keyword is to give your post a main focus. The keyword is the topic you’re writing about. Beyond that, it’s just something you’ll use as you write. If you’re actually writing about the topic, it’s going to come up; that’s just how writing works. Sometimes you’ll need to write with a high degree of specificity, which calls for the keyword more often. Other times you can reference it indirectly. So long as it’s present and the content legitimately addresses the topic, that’s what matters.

Rule #3 with keywords is that you have to provide value centered on the topic. Value, as judged by a combination of Google’s algorithmic analysis, its human quality raters, and signals like links and engagement, is what determines how well a post does. This has always been true in principle, but it matters more than ever now. With AI Overviews pulling answers directly from content and presenting them at the top of search results, thin or repetitive content gets bypassed entirely. Google isn’t randomly picking which posts to promote; it’s increasingly picking the content that most completely answers what the searcher actually wants to know.

Whenever I choose a topic to write about, the first thing I do is look at the other posts out there about the same topic. I see what they say, what perspectives they take and how deep they look into the topic. Then I get to work outlining my own post in a way that covers the same ground more thoroughly, more accurately, or from a more helpful angle. The last thing I need to care about is the number of times I use a random keyword.

Rule #4 with keywords is that you should use them in your metadata. Metadata is like the elevator pitch for your post: a quick description of what the post covers. That makes it a prime location for your keyword. Include it specifically, in a sentence that explains the context, and you’re good to go. You don’t need to include it more than once; you don’t have the space, and it’s redundant. A good meta description is worth taking the time to get right.
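A simple check for the “once is enough” rule might look like this. It’s a hypothetical helper, not part of any SEO plugin, and it only counts case-insensitive, whole-word mentions of the exact phrase, so close variations won’t register.

```python
import re


def keyword_mentions(meta_description: str, keyword: str) -> int:
    """Count case-insensitive, whole-word mentions of the exact keyword phrase."""
    pattern = r"\b" + r"\s+".join(re.escape(w) for w in keyword.split()) + r"\b"
    return len(re.findall(pattern, meta_description, flags=re.IGNORECASE))


def check_meta_description(meta_description: str, keyword: str) -> str:
    """Apply the rule of thumb: mention the keyword once in the meta description."""
    mentions = keyword_mentions(meta_description, keyword)
    if mentions == 0:
        return "missing keyword"
    if mentions > 1:
        return "keyword repeated; once is enough"
    return "ok"


print(check_meta_description(
    "A local's guide to the best pizza in Chicago, from deep dish to tavern style.",
    "best pizza in Chicago",
))
```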

A Note on AI-Generated Content

It would be dishonest to talk about keywords in 2026 without addressing the elephant in the room: AI writing tools. ChatGPT, Claude, Gemini and dozens of content platforms have made it trivially easy to generate large volumes of text. Some publishers are using these tools to flood the web with keyword-optimized content at scale, hoping volume compensates for quality.

It doesn’t. Google has been explicit that the standard it applies is the same regardless of who or what wrote the content - it evaluates whether the content is helpful, accurate and written for readers instead of search engines. AI-generated content that’s shallow, repetitive, or stuffed with keywords performs just as poorly as human-written content with the same problems. The tools themselves are neutral; the quality of what you produce with them is what counts. If you use AI to help you write something legitimately helpful and accurate, that’s fine. If you use it to churn out thin pages targeting keyword variations, expect those pages to go nowhere.

Get it Together

I haven’t stated the answer to the title question outright yet, so here it is: there’s no single right number that works for every post. In plain English, you should use your keyword as many times as your writing legitimately calls for it, without forcing it or counting it. Loose guidelines like 0.5%-2% density are worth keeping in the back of your mind as a way to avoid obvious problems, but they are not targets to chase. If your post is actually about the topic you want to rank for, and it’s well written and substantive, then you’ll rank for it.

Unfortunately, that means you need a writer, human or AI-assisted, who is fluent in natural web English. Technical proficiency is not the same as fluency, and search engines have become very good at telling the difference between content that genuinely informs and content that’s just going through the motions of SEO. How you write directly affects your SEO results in ways that keyword counting alone will never capture.

Hiring ghostwriters can help with this, as long as you find good ones, but that’s a tough proposition in itself. Knowing how to hire a writer on Freelancer or Upwork is a skill worth developing. You also, of course, need to pay them fairly, but that’s a whole other conversation.
