There are over eight billion people on the planet. Imagine, for a moment, that every one of them had an internet connection and spent two hours each day looking at websites. That’s 16 billion hours of attention every day. How hard would you have to fight to get a share of it?

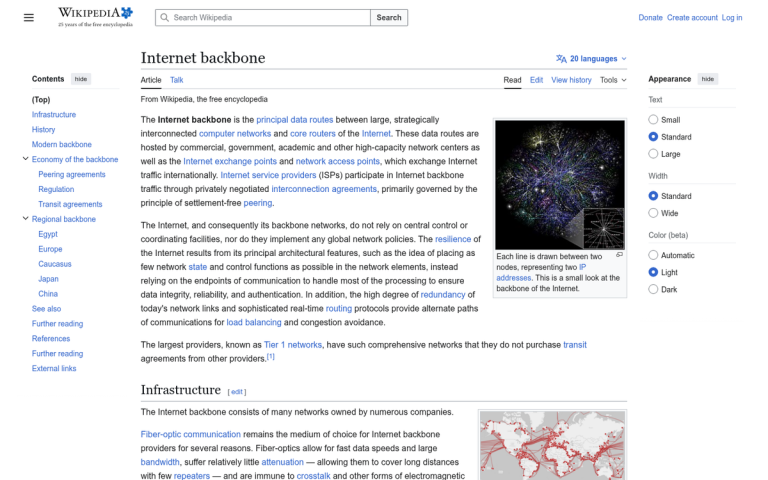

Consider this: there are well over one billion websites alive and active today. That’s a massive number, and it counts only sites, not individual pages. Google’s index alone contains hundreds of billions of unique web pages, and there are plenty of corners of the internet Google never even touches.

Your website is one of more than a billion competing for a finite amount of user attention. Worse, you’re competing for a small subset of that attention - users who will actually be interested and will convert. And it’s getting harder. Over 58% of all Google searches in the US are now zero-click searches, meaning users get their answer directly from the search results page and never visit any linked website at all.

Why bring this up? It’s not just to convince you of the ultimate futility of existence in a world where content is being created faster than it can be consumed. The point is, it is possible to attract that attention. All you need to do is, well, do everything exactly right. So, why isn’t your blog gaining its share of that attention?

- Duplicate content from dynamic URLs and poor canonicalization is a commonly overlooked technical SEO problem hurting rankings.

- Content quality and length matter significantly; posts exceeding 3,000 words earn roughly three times more traffic than shorter ones.

- Both too few and too many keywords hurt rankings; natural, contextual keyword use is what Google rewards.

- Backlinks remain critical, but quality trumps quantity; spammy or over-optimized anchor text can trigger ranking penalties.

- Refreshing old content can increase traffic by up to 106%, and slow page speed directly damages search rankings.

You Have Duplicate Content Issues

It’s entirely possible to have lingering duplicate content, even if you’re not intentionally posting the same content on multiple pages. If your site generates URLs dynamically or appends session data to URLs, each session produces a different URL for the same page, and search engines can read those URLs as duplicate content. Canonicalization, which tells search engines which URL is the preferred version of a page, fixes this issue. Duplicate content of this kind remains one of the most overlooked technical SEO problems in 2026.
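
One way to picture canonicalization is as a function that maps every session-specific variant of a URL onto a single preferred URL. The sketch below is a minimal illustration in Python, not a drop-in fix: the parameter names in `IGNORED_PARAMS` and the helpers `canonical_url` and `canonical_link_tag` are assumptions made up for this example, and your own site may append different parameters.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: query parameters that never change what the page shows.
# Replace these with the parameters your own platform actually appends.
IGNORED_PARAMS = {"sid", "sessionid", "phpsessid",
                  "utm_source", "utm_medium", "utm_campaign"}


def canonical_url(url):
    """Drop session/tracking parameters and the fragment, sort the rest."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    ]
    kept.sort()  # same parameters in a different order map to one URL
    return urlunsplit(
        (parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), "")
    )


def canonical_link_tag(url):
    """Build the <link rel="canonical"> tag for the page's <head>."""
    return '<link rel="canonical" href="{}">'.format(
        escape(canonical_url(url), quote=True)
    )
```

With this sketch, `https://Example.com/post?sid=abc123&page=2` and `https://example.com/post?page=2&sid=zzz` both map to `https://example.com/post?page=2`, which is the URL you would place in the canonical tag of that page.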

Your Content is Poorly Written

It’s good to fill your site with content, but that content needs to be decent. You could have 10,000 pages, but if they all read as though they were scraped, spun, or churned out without any real thought or expertise, you’re not going to see much benefit. Google’s helpful content systems have matured significantly, and thin or unhelpful content is actively suppressed. In 2026, Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T) matter more than ever.

Your Content Isn’t Long Enough

Short blog posts can work, but data consistently shows that longer, more comprehensive content earns more traffic. Content with 3,000 or more words gets roughly three times more traffic than shorter posts, according to Semrush research. If your posts are consistently clocking in under 800 words without a very good reason, you may be leaving serious ranking potential on the table.

You Have No Keyword Focus

Here’s one question to ask: if you want to rank for a given query, are the words of that query actually in your content? It’s surprisingly common for a site to want to rank for a niche but fail to use niche-related keywords in its content. You might be ranking perfectly fine for whatever your content does include - just not the subjects you actually want to rank for. Also worth noting: nearly 74% of keywords have 10 or fewer searches per month, so targeting the wrong low-volume terms can leave you spinning your wheels.
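
The question above can be checked mechanically. The following is a rough sketch, assuming plain-text content and a simple word-level match; `missing_query_terms` is a hypothetical helper name, and real search engines do far more than exact word matching, so treat the result as a sanity check only.

```python
import re

WORD = re.compile(r"[a-z0-9']+")


def missing_query_terms(query, content):
    """Return the words of the query that never appear in the content."""
    content_words = set(WORD.findall(content.lower()))
    return [term for term in WORD.findall(query.lower())
            if term not in content_words]
```

For example, checking the query "vegan protein powder" against a post that only ever says "protein powder for athletes" reports `vegan` as missing, which is a hint that the post is not really about the topic you want it to rank for.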

You Have Too Much Keyword Focus

Having too much of a keyword focus is just as bad as having too little. It’s called keyword stuffing: repeating a keyword over and over because you’re worried you haven’t used it often enough to rank. Natural, contextual use of a keyword is what Google rewards in 2026. Over-optimization sends up red flags, not ranking signals.
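
If you want a rough number to look at, keyword density (the share of a page's words taken up by one phrase) is easy to compute. This is only a sketch: `keyword_density` is a hypothetical helper, and Google does not publish a "safe" density, so any cutoff you pick is your own assumption rather than a rule.

```python
import re

WORD = re.compile(r"[a-z0-9']+")


def keyword_density(text, phrase):
    """Fraction of the words in text that belong to occurrences of phrase."""
    words = WORD.findall(text.lower())
    target = WORD.findall(phrase.lower())
    if not words or not target:
        return 0.0
    size = len(target)
    # Slide a window of the phrase's length across the text and count matches.
    hits = sum(
        1 for i in range(len(words) - size + 1)
        if words[i:i + size] == target
    )
    return hits * size / len(words)
```

A page where the result is a large fraction for a single phrase probably reads as stuffed to a human too, which is the better test.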

Your H1 Tags Are Too Long

This one surprises a lot of people. Blog posts with seven or fewer words in their H1 tag earn around 36% more organic traffic on average than posts with fourteen or more words. Keep your headings tight, descriptive, and to the point. A bloated H1 dilutes your focus and may hurt your click-through rate in search results as well.
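
A quick way to audit this is to pull the H1 out of each page and count its words. The sketch below uses only the Python standard library; `H1Collector` and `h1_word_counts` are names invented for this example, and it assumes you already have the page HTML as a string.

```python
from html.parser import HTMLParser


class H1Collector(HTMLParser):
    """Collects the text of every <h1> element, including nested tags."""

    def __init__(self):
        super().__init__()
        self._in_h1 = False
        self.headings = []

    def handle_starttag(self, tag, attrs):
        if tag == "h1":
            self._in_h1 = True
            self.headings.append("")

    def handle_endtag(self, tag):
        if tag == "h1":
            self._in_h1 = False

    def handle_data(self, data):
        if self._in_h1:
            self.headings[-1] += data


def h1_word_counts(html):
    """Return a list of (heading text, word count) for each H1 in the page."""
    parser = H1Collector()
    parser.feed(html)
    parser.close()
    return [(text.strip(), len(text.split())) for text in parser.headings]
```

Any heading that comes back with fourteen or more words is a candidate for trimming.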

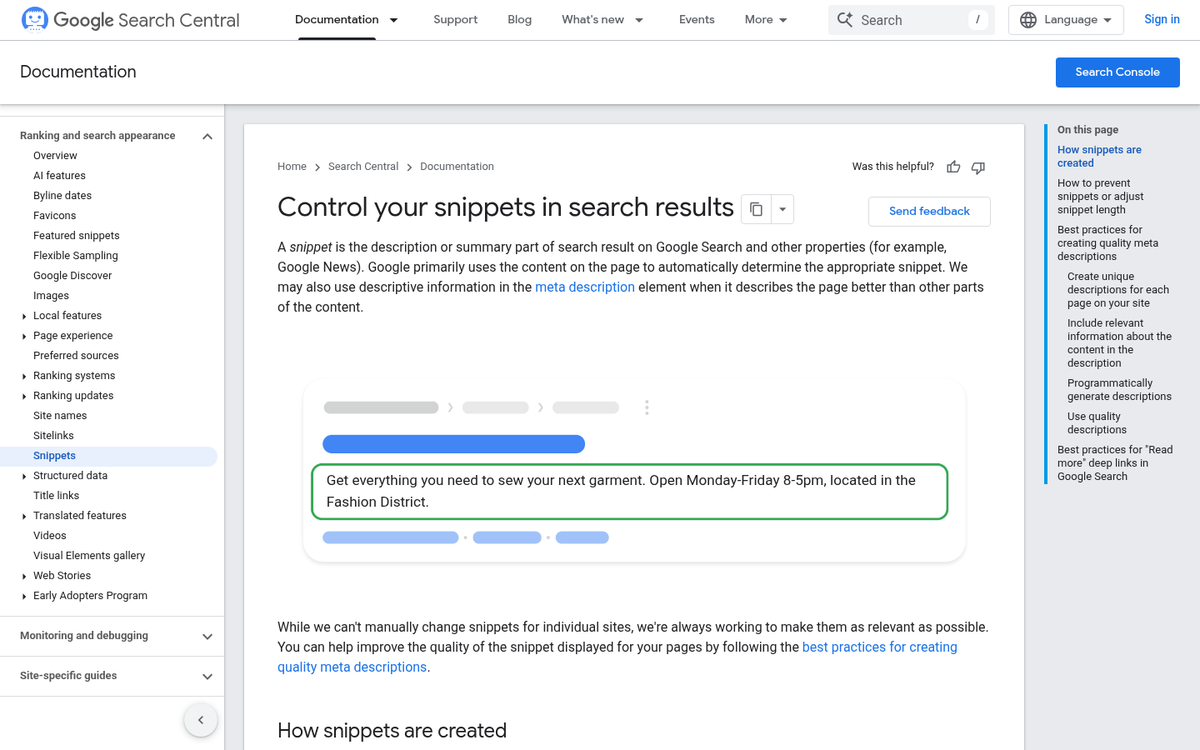

You Don’t Optimize Metadata

You can rank without optimized metadata, but you’re doing yourself a disservice. A keyword-inclusive title tag and a well-crafted meta description give users a clear reason to click through. In a landscape dominated by zero-click searches, your metadata is often the only shot you have at earning a visit. Don’t waste it with generic or auto-generated text.

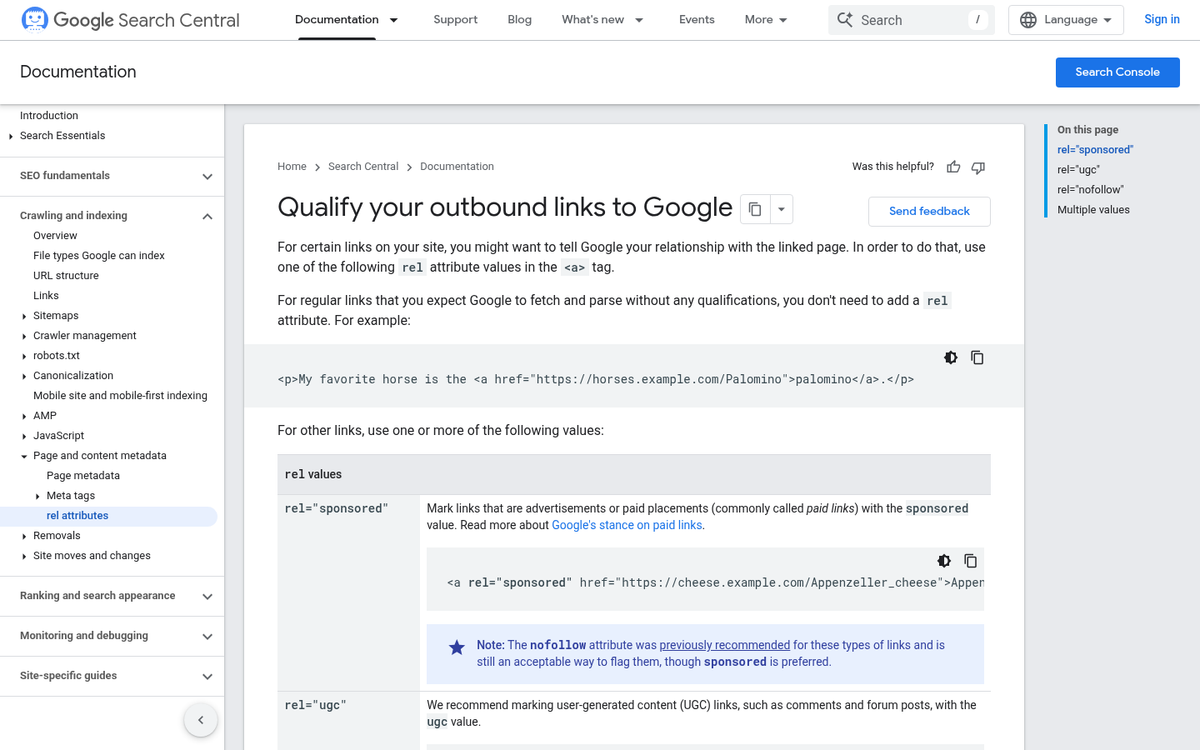

Your Comments are Riddled with Spam

Yes, comments aren’t content you directly control. You have to trust a spam filter or manually approve or moderate every comment posted on your site. For a larger, active site, this can be an incredible burden. Still, if you let your comments end up filled with spam, you’re going to suffer for it - both in terms of user trust and crawl quality.

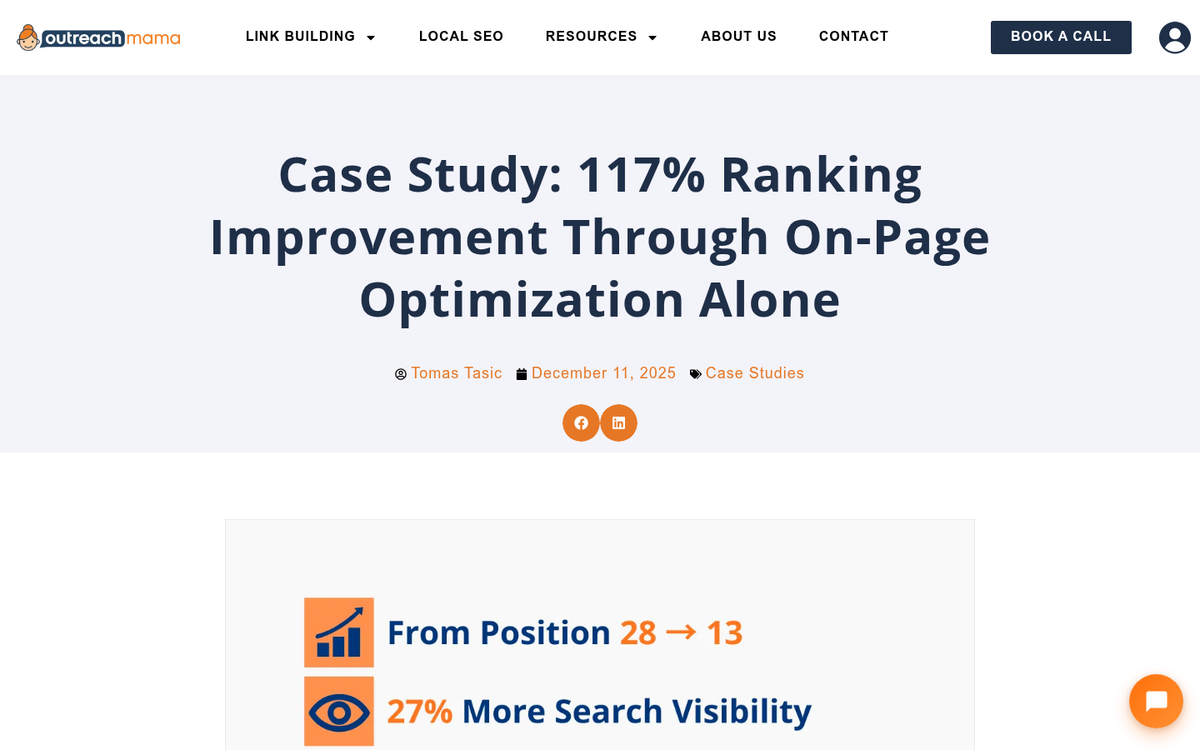

You Have No Backlinks

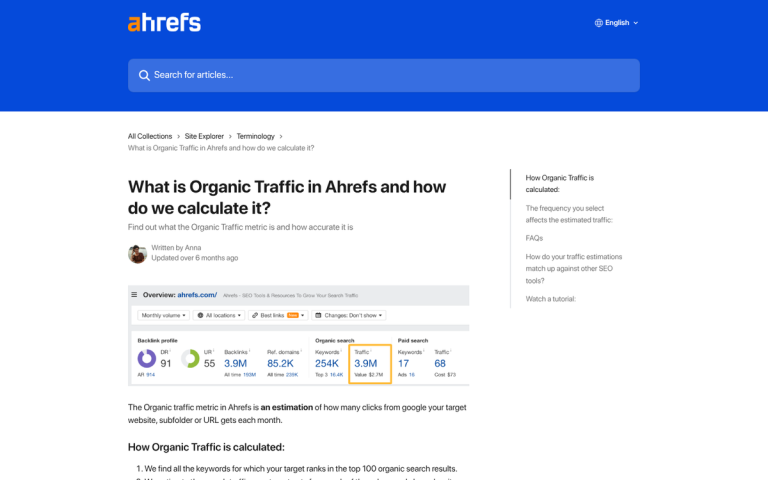

Links remain one of Google’s most important ranking signals. According to Ahrefs data, more than 66% of pages on the web have zero backlinks pointing to them - and the vast majority of those pages rank for nothing. If your site doesn’t have any incoming links, or has too few quality ones, you’re going to struggle to find a decent place in the rankings regardless of how good your content is. One approach worth exploring is turning your existing website traffic into new backlinks, and you might also consider whether Web 2.0 sites are still effective for link building as part of your overall strategy.

You Have Too Many Spam Backlinks

Link quality matters just as much as quantity. Google is sophisticated enough to recognize links coming from low-quality, spammy, or irrelevant sites - and those links can actively hurt you. One strong, relevant backlink from a credible source is worth far more than hundreds of links from dubious directories or link farms.

Your Incoming Links are Overly Optimized

Anchor text diversity matters. If all of your incoming links use the exact same keyword-rich anchor text, it’s a signal that something unnatural is going on. Google’s systems are tuned to detect manipulative link patterns, and over-optimized anchor text can earn you a manual or algorithmic penalty.
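
To see how diverse your anchor text actually is, you can tally the anchors from a backlink export. This is a minimal sketch that assumes you already have the anchor texts as a list of strings; `anchor_text_shares` is a hypothetical helper, and what counts as "too concentrated" is a judgment call, not a published threshold.

```python
from collections import Counter


def anchor_text_shares(anchors):
    """Map each anchor text to its share of all links, most common first."""
    normalized = [anchor.strip().lower() for anchor in anchors if anchor.strip()]
    total = len(normalized)
    counts = Counter(normalized)
    return {text: count / total for text, count in counts.most_common()}
```

If one keyword-rich phrase accounts for most of the total while branded and bare-URL anchors barely register, the profile is worth a closer look.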

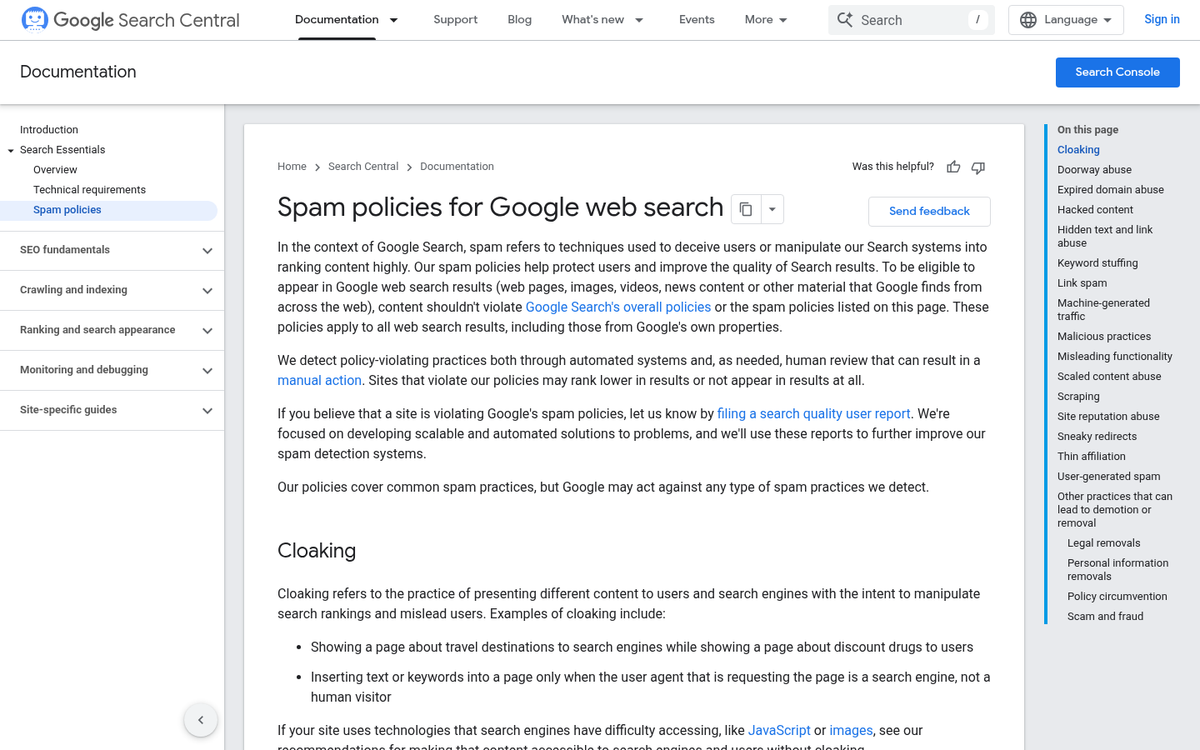

You Have Hidden Links on Pages

Hidden links and hidden content may seem clever, but Google has been onto this trick for decades. White text on a white background, links hidden behind images, content shoved off-screen with CSS - these tactics are not just ineffective, they’re actively penalized. If you want to use links effectively, learn how to properly cloak your affiliate links instead. If you wouldn’t show it to your users, don’t try to show it to Google.

You Built Links Too Quickly

Rome wasn’t built in a day, and neither is a trustworthy backlink profile. If your site suddenly accumulates hundreds or thousands of links in a short period, Google is going to take a close look at why. Unnatural spikes in link acquisition are a classic signal of paid or manipulated links, and the consequences can be severe.
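
You can spot this kind of spike in your own data before anyone else does. The sketch below compares each month's new links against the average of the preceding months. The function name `link_spikes`, the three-month window, and the 3x factor are all assumptions chosen for illustration; Google does not publish a number for what it considers unnatural.

```python
from statistics import mean


def link_spikes(monthly_new_links, window=3, factor=3.0):
    """Return indexes of months whose new-link count exceeds
    factor times the average of the previous `window` months."""
    spikes = []
    for i in range(window, len(monthly_new_links)):
        baseline = mean(monthly_new_links[i - window:i])
        if baseline > 0 and monthly_new_links[i] > factor * baseline:
            spikes.append(i)
    return spikes
```

For monthly counts of 10, 12, 11, 13, 400, and 15, only the fifth month (index 4) is flagged. A flagged month is not proof of a problem, since a post that goes viral looks the same, but it tells you where to check the source of the links.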

You Aren’t Linking Out

The web is collaborative. Linking out to relevant, high-quality external sources signals to Google that you’re engaged with your topic and willing to point users toward helpful information. Hoarding PageRank by never linking out is an outdated strategy that can actually make your content look less trustworthy, not more.

Your Old Content Has Gone Stale

This is one of the most underrated reasons blogs lose rankings over time. Approximately 60% of pages ranking on Google’s first page are three or more years old - but those pages have typically been updated and maintained. If your posts were written years ago and haven’t been touched since, they may be losing ground to fresher, more accurate content. HubSpot found that refreshing old blog posts can increase traffic by as much as 106%. Dusting off your archives isn’t just a good idea - it’s a genuine growth strategy.

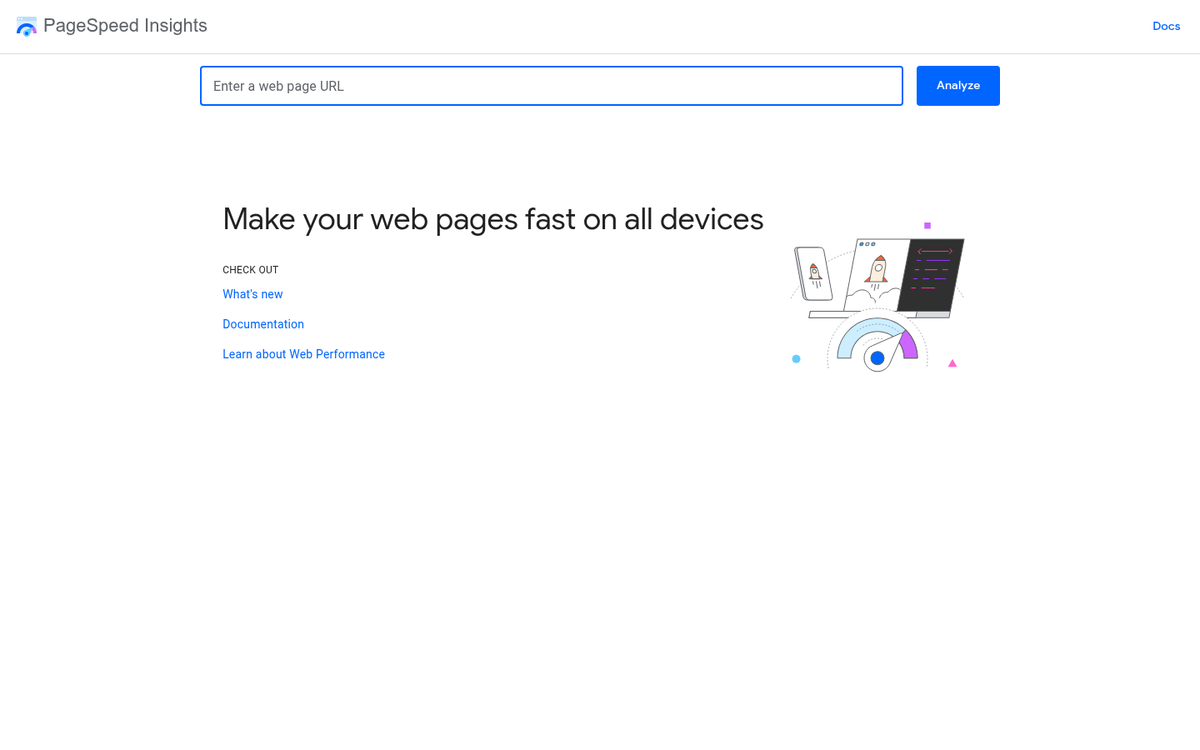

Your Site Loads Too Slowly

Page speed has been a confirmed Google ranking factor for years, and in 2026, Core Web Vitals are a central part of how Google evaluates page experience. Slow load times frustrate users and inflate your bounce rate. If your site is sluggish on mobile - where the majority of searches now happen - you’re leaving rankings on the table. Tools like Google PageSpeed Insights and Lighthouse can help you identify exactly what’s holding you back.
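
Core Web Vitals come with published thresholds: Google's guidance treats a Largest Contentful Paint of 2.5 seconds or less, an Interaction to Next Paint of 200 milliseconds or less, and a Cumulative Layout Shift of 0.1 or less as "good", with "poor" starting above 4.0 seconds, 500 milliseconds, and 0.25 respectively. The sketch below turns those thresholds into a simple rating; the metric keys and the `rate` helper are names made up for this example, and it assumes you have already collected the measurements from a tool such as PageSpeed Insights.

```python
# (good upper bound, needs-improvement upper bound) for each metric.
# Keys are illustrative names, not identifiers from any Google API.
THRESHOLDS = {
    "lcp_s": (2.5, 4.0),    # Largest Contentful Paint, seconds
    "inp_ms": (200, 500),   # Interaction to Next Paint, milliseconds
    "cls": (0.1, 0.25),     # Cumulative Layout Shift, unitless
}


def rate(metric, value):
    """Classify a measurement as good, needs improvement, or poor."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"
```

For instance, a page with an LCP of 3.1 seconds rates as "needs improvement", so that metric is where the optimization effort should go first.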

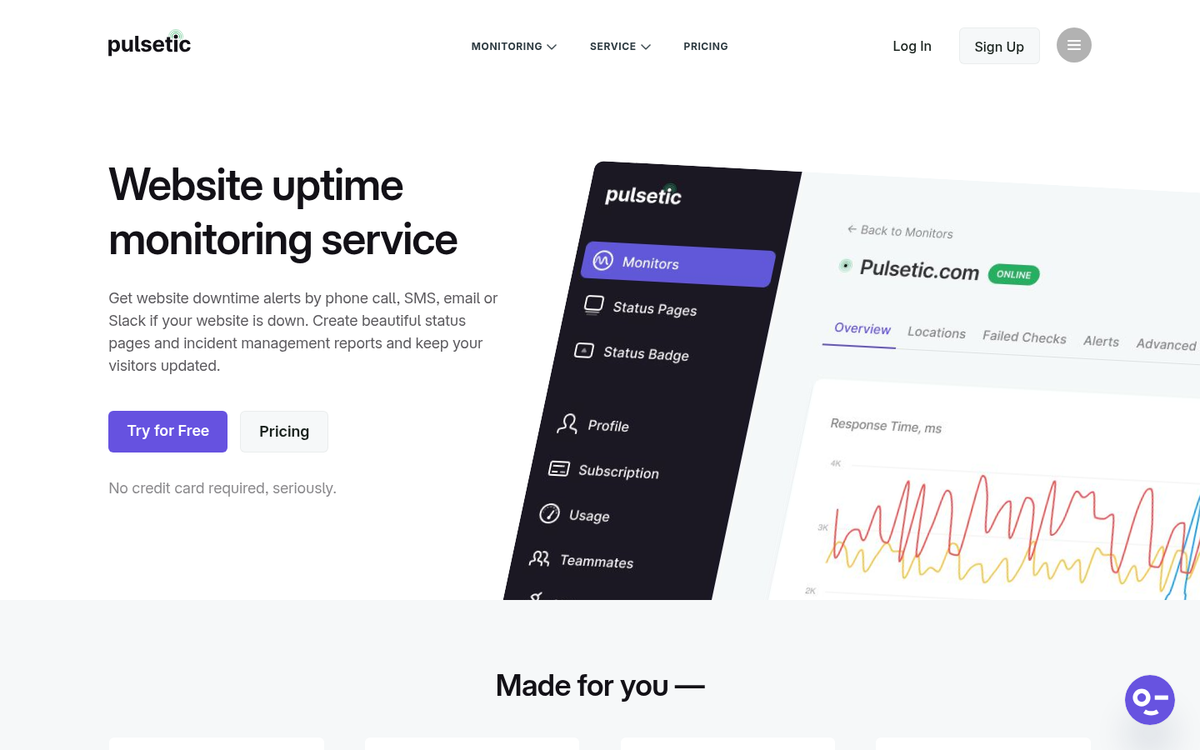

Your Site Goes Down

Frequent downtime is worse than slow loading. If Google’s crawlers repeatedly can’t reach your pages, your site’s crawl priority drops. There are no business hours on the web, and your hosting reliability directly affects your SEO. Monitor your uptime and invest in hosting that can handle your traffic without flinching.
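
When comparing hosts, it helps to translate an advertised uptime percentage into actual minutes offline. The arithmetic is simple enough to sketch; `allowed_downtime_minutes` is a hypothetical helper, and the 30-day month is an assumption for the example.

```python
def allowed_downtime_minutes(uptime_percent, days=30):
    """Minutes of downtime a given uptime percentage permits over `days`."""
    total_minutes = days * 24 * 60
    return total_minutes * (1 - uptime_percent / 100)
```

Over a 30-day month there are 43,200 minutes, so 99.9% uptime still allows about 43 minutes of downtime, while 99% allows 432 minutes, which is more than seven hours in which crawlers and readers may find nothing at all.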